The Survivor's Bias

We only see the environment in which we survived. Every other planet — where the climate tipped, where life never arose — failed to pass the cognitive bottleneck.

The Classic Formulation

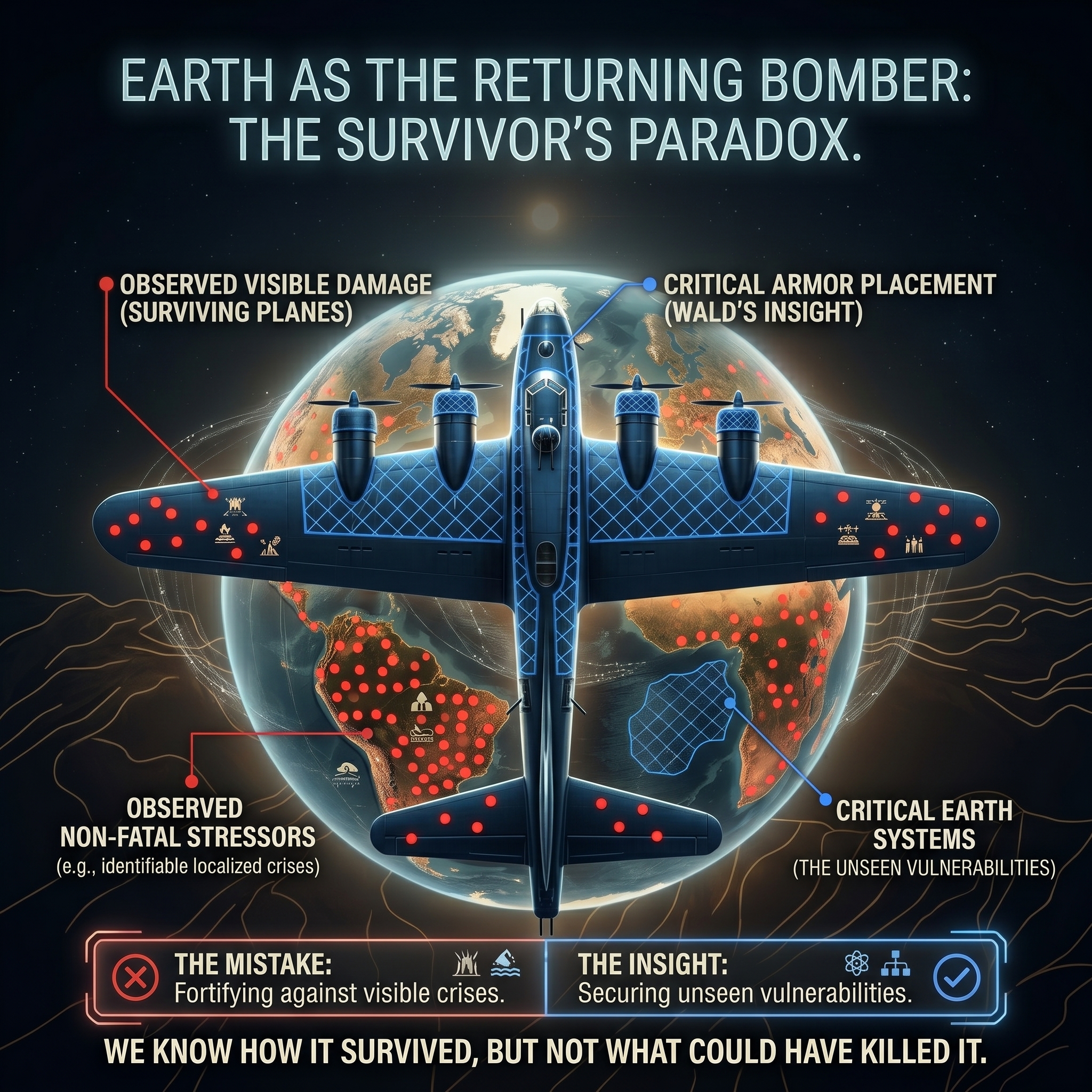

Why we never see the crashed planes

During World War II, the military looked at bombers returning from missions covered in bullet holes. They planned to add armor to the places where the planes were hit most often: the wings and the tail. But statistician Abraham Wald pointed out their fatal flaw. They were only looking at the planes that survived (a logical error now widely known as Survivorship Bias). The planes that were hit in the engine or cockpit didn't come back. The bullet holes they were observing actually showed where a plane could be safely hit and still fly. To increase survival, they needed to armor the places where the returning planes had no holes.
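Wald's argument can be reproduced with a toy simulation. The sketch below is illustrative only: the per-section lethality values, the number of hits per plane, and the function name `simulate_bombers` are assumptions made for this example, not historical figures. Hits land uniformly across sections, yet the holes counted on returning planes cluster in the sections where a hit is least dangerous.

```python
import random

# Hypothetical probability that a single hit in each section downs the plane.
# Illustrative assumptions only, not historical data.
LETHALITY = {
    "wings": 0.05,
    "tail": 0.05,
    "fuselage": 0.10,
    "engine": 0.60,
    "cockpit": 0.50,
}


def simulate_bombers(n_planes=20_000, hits_per_plane=3, seed=0):
    """Return (all_hits, surviving_hits): hit counts per section."""
    rng = random.Random(seed)
    sections = list(LETHALITY)
    all_hits = dict.fromkeys(sections, 0)
    surviving_hits = dict.fromkeys(sections, 0)
    for _ in range(n_planes):
        # Hits are spread uniformly: no section is targeted more than another.
        hits = [rng.choice(sections) for _ in range(hits_per_plane)]
        for section in hits:
            all_hits[section] += 1
        # The plane returns only if it survives every individual hit.
        if all(rng.random() > LETHALITY[section] for section in hits):
            for section in hits:
                surviving_hits[section] += 1
    return all_hits, surviving_hits


if __name__ == "__main__":
    all_hits, surviving_hits = simulate_bombers()
    seen = sum(surviving_hits.values())
    for section in LETHALITY:
        print(f"{section:9s} share of holes on returning planes: "
              f"{surviving_hits[section] / seen:.1%}")
```

Under these assumptions the engine and cockpit receive as many hits as the wings, but they are underrepresented among the holes an observer on the airfield can actually count.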

In Wald's story the returning plane is the data you can see. The crashed planes are the data you can't. Applied to astrobiology: we are the returning plane — the rare surviving planetary environment stable enough to produce observers. The "crashed planes" are the billions of unrendered data streams of planets where the climate overheated, froze, or collapsed before complex life could take hold. Those streams never produced anyone to study the climate. We'll never see them.

The mistake is to look at our one returning plane, Earth's Holocene (the unusually stable epoch of roughly 11,700 years that we live in), and conclude that planetary climates are naturally stable. The engineers who saw the holes in the surviving planes almost armored the wrong spots for exactly the same reason: they mistook a filtered, biased sample for representative data. Earth made it back. We have no idea how many other planets didn't.

"The absence of evidence is not evidence of absence — it is evidence of the filter."

Applied to Climate

We are the returning plane. The unrendered streams are the ones we can never see.

We look at more than 10,000 years of remarkable climatic stability, the Holocene epoch, and we interpret it as proof that Earth's climate is naturally stable. We assume this is the default. We write policy based on returning to this stable baseline. We tell ourselves we just need to stop disrupting a system that would otherwise stay calm.

But the geological record tells a different story. Earth's climate history is one of dramatic, catastrophic instability: ice ages, mass extinctions, hothouse episodes, ocean circulation collapses. The Holocene, this unusual window of relative stability, is the exception, not the rule.

It is important to distinguish two kinds of bad timeline. A Hostile Timeline (a frozen Earth, an irradiated wasteland) is physically harsh but still mathematically coherent: ice and fallout obey stable physical laws. A Failed Timeline is something deeper: a collapse in which the civilizational structure fractures entirely, the rate of cascading crises overwhelms our ability to adapt, and the shared narrative itself shatters. We fear rapid climate change not merely because it makes the planet hostile, but because cascading complexity can tip a Hostile Timeline into a Failed one, a threshold with no return.

Earth's atmosphere from the ISS. Note the impossibly thin, fragile blue sliver that separates the planetary surface from the vacuum of space—the entire volume of air in which our civilization evolved. Image: NASA / Public Domain

This fragility is deeply unintuitive. When we look up, the blue sky feels infinite, an endless ocean capable of absorbing any amount of smoke we produce. But viewed from the International Space Station, the truth is laid bare: the breathable atmosphere is a razor-thin, delicate band. If the Earth were the size of an apple, the breathable layer of our atmosphere would be thinner than its skin.

We can calculate the scale of this illusion. If you took all the air on Earth, compressed it to sea-level density, and divided it equally among every human alive today, your individual share would fit into a box roughly 800 meters on each side. That is your entire lifetime reservoir of the sky. Every time a factory vents, a forest burns, or an engine starts, the smoke is not vanishing into an infinite void; it is filling that 800-meter box. The sky is not boundless; it is a very shallow, tightly budgeted system.
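The 800-meter figure can be checked with a few lines of arithmetic. The sketch below assumes round reference values that do not appear in the text above: a total atmospheric mass of about 5.15e18 kg, a sea-level air density of about 1.225 kg/m³, and a population of 8 billion. It also assumes the whole atmosphere is compressed to sea-level density, which is what makes a single "box" meaningful.

```python
# Assumed round reference values (not taken from the article itself).
ATMOSPHERE_MASS_KG = 5.15e18      # approximate total mass of Earth's atmosphere
SEA_LEVEL_DENSITY_KG_M3 = 1.225   # approximate density of air at sea level
WORLD_POPULATION = 8.0e9          # approximate number of people alive today


def air_share_box_side_m(
    mass_kg=ATMOSPHERE_MASS_KG,
    density_kg_m3=SEA_LEVEL_DENSITY_KG_M3,
    population=WORLD_POPULATION,
):
    """Side length (m) of a cube holding one person's equal share of the air."""
    total_volume_m3 = mass_kg / density_kg_m3   # atmosphere at sea-level density
    share_m3 = total_volume_m3 / population     # equal split per person
    return share_m3 ** (1.0 / 3.0)              # cube root gives the side length


if __name__ == "__main__":
    print(f"Box side per person: about {air_share_box_side_m():.0f} m")
```

With these inputs the side length comes out close to 800 m. Changing the population or density assumptions shifts the result only slightly, because the side length scales with the cube root of the share.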

Snapshot Blindness

Human civilisation is 10,000 years old. Earth is 4.5 billion. We are making assumptions about the default state of a system from 0.0002% of its history — a period of unusual stability by the standards of the recent geological past.

The Collapsed Planets

On planets where natural climate perturbations tipped past the point of no return, or where evolutionary bottlenecks weren't passed, there are no observers to report the instability. Those data streams simply never produced a civilisation to measure them.

Self-Fulfilling Safety

The very fact that we are here — thinking, measuring, debating — is conditional on having passed through a benign filter. The filter hides itself. Stability feels normal because it is the only condition in which "normal" can even be felt.
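The way the filter hides itself can be sketched numerically. In the toy model below, every planet experiences random climate shocks each epoch, and a planet produces observers only if no shock ever exceeds a collapse threshold. All parameters (`sigma`, `threshold`, the number of epochs) and the function name `simulate_observers` are hypothetical choices for illustration, not estimates of anything real.

```python
import random


def _std(values):
    """Population standard deviation of a non-empty list."""
    mean = sum(values) / len(values)
    return (sum((v - mean) ** 2 for v in values) / len(values)) ** 0.5


def simulate_observers(n_planets=5_000, n_epochs=50, sigma=1.5,
                       threshold=3.0, seed=0):
    """Compare true climate variability with what surviving observers record."""
    rng = random.Random(seed)
    all_shocks, survivor_shocks = [], []
    survivors = 0
    for _ in range(n_planets):
        # One random climate shock per epoch, same distribution on every planet.
        history = [rng.gauss(0.0, sigma) for _ in range(n_epochs)]
        all_shocks.extend(history)
        # Observers arise only if no shock ever crossed the collapse threshold.
        if all(abs(shock) < threshold for shock in history):
            survivors += 1
            survivor_shocks.extend(history)
    return {
        "survivor_fraction": survivors / n_planets,
        "true_std": _std(all_shocks),
        "survivor_std": _std(survivor_shocks) if survivor_shocks else float("nan"),
    }


if __name__ == "__main__":
    result = simulate_observers()
    print(f"planets with observers:        {result['survivor_fraction']:.1%}")
    print(f"true climate variability:      {result['true_std']:.2f}")
    print(f"variability survivors record:  {result['survivor_std']:.2f}")
```

In this model, observers who estimate climate variability from their own planet's record systematically underestimate it, because every history containing a large shock has been removed from the sample before anyone could measure it.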

The Ethical Implication

The Corrected Prior

Understanding the bias is not merely an academic exercise. If our moral intuitions about civilizational risk are calibrated on a filtered sample of survivors, those intuitions are systematically too optimistic: we persistently underestimate the probability and magnitude of civilizational collapse. The corrected prior: the structures that sustain us are more fragile than they appear, a single surviving planet is a biased sample, and the absence of visible collapse so far is only weak evidence that collapse is unlikely (though our own existence is itself some evidence that survival is achievable).

This is where the intellectual insight becomes an ethical obligation. The Observer does not act from certainty; the Observer acts with a corrected epistemology.

If the military bomber represents our blind assumption of safety, the modern commercial airliner represents our only way forward. Survival is not a passive default; it requires extreme, coordinated, deliberate maintenance against an environment actively trying to kill us.

What this changes

If our intuition about safety comes from a filtered sample of surviving planets, then complacency is not neutral. It is a reasoning error. We are not minor inhabitants of a vast indifferent cosmos. We are the rarest thing in any data stream: the process that makes the cosmos visible at all. But this primacy requires profound humility—we are the center of our own reality, but we are just one tiny algorithmic stabilization in an infinite substrate of mathematically possible patches.

Through the Lens of Ordered Patch Theory

The Stability Filter as a perceptual blindfold

The Ordered Patch Theory offers a formal explanation for why the Survivor's Bias is built into the structure of consciousness itself — not just into statistics.

The theory proposes that your experience of reality is a low-bandwidth informational render — an unimaginably narrow serial bottleneck — that must remain causally consistent to sustain an observer at all. This is the virtual Stability Filter. This boundary condition doesn't just eliminate unstable planets from the cosmological record; it eliminates them from the possibility of being observed.

You cannot observe a chaotic data stream because you would not exist within one. Observation and stability are synonymous in this framework. The Holocene is not evidence that Earth defaults to stability. It is evidence that you made it through a very narrow gate.

"In the OPT, stability is not a gift from physics. It is the precondition for consciousness. And the bias is not a cognitive error — it is a structural feature of what it means to be an observer at all."

| Perspective | View of Climate Stability | Implication |
|---|---|---|
| Mainstream assumption | Default physical state of Earth | Just stop disrupting it and it returns |
| Statistical Survivor's Bias | A lucky Earth, unseen sterile planets | We are extrapolating from filtered data |
| Ordered Patch Theory | A rare informational selection: the only stream we could be in | Stability is a high-effort achievement, not a baseline |

For Scientists

This framework makes empirical conjectures

OPT is a constructive philosophical framework — a rigorous thought experiment rather than an empirically verified physics claim. That said, a framework with no structural consequences is merely poetry. OPT makes three speculative predictions that would, if falsified, require revising the core model:

The Bandwidth Dissolution Test

Integrated Information Theory (IIT) predicts that injecting more information into the conscious workspace should expand experience. OPT predicts the opposite: bypass the brain’s pre-conscious compression filters and inject raw, high-bandwidth data directly into the global workspace, and the result will be sudden phenomenal blanking — not expanded awareness. More uncompressed data crashes the codec.

The High-Integration Noise Test

IIT predicts that any sufficiently integrated recurrent network has rich conscious experience. OPT predicts that integration is necessary but not sufficient: drive a maximally integrated system with pure thermodynamic noise (maximum-entropy input), and it generates zero phenomenality — because there is no compressible grammar for the codec to stabilise around. No structure, no patch.

The Unification Criterion

OPT predicts that a complete, parameter-free Theory of Everything unifying General Relativity and Quantum Mechanics will not be found — not because physics is weak, but because the grammar of the observer cannot fully describe the noise of the substrate beneath it (Mathematical Saturation). A single elegant unification equation would falsify OPT.