Ordered Patch Theory: An Information-Theoretic Framework for Observer Selection and Conscious Experience

v3.2.1 — April 2026

DOI: 10.5281/zenodo.19300777

Copyright: © 2025–2026 Anders Jarevåg.

License: This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

Abstract:

We present the Ordered Patch Theory (OPT), a constructive framework deriving structural correspondences between algorithmic information theory, observer selection, and physical law. OPT begins from two primitives: the Solomonoff Universal Semimeasure \xi over finite observation prefixes, and a bounded cognitive channel capacity C_{\max}. A purely virtual Stability Filter — requiring that the observer’s Required Predictive Rate R_{\mathrm{req}} not exceed C_{\max} — selects the rare causally coherent streams compatible with conscious observers; within such streams, Active Inference governs local dynamics.

The framework is ontologically solipsistic: physical reality consists of structural regularities within the observer-compatible stream. However, the Solomonoff prior’s compression bias yields a probabilistic Structural Corollary: the extreme algorithmic coherence of apparent agents is most parsimoniously explained by their independent instantiation as primary observers. Inter-observer coupling, grounded in compression parsimony, recovers genuine cross-patch communication and produces a striking knowledge asymmetry: observers model others more completely than themselves.

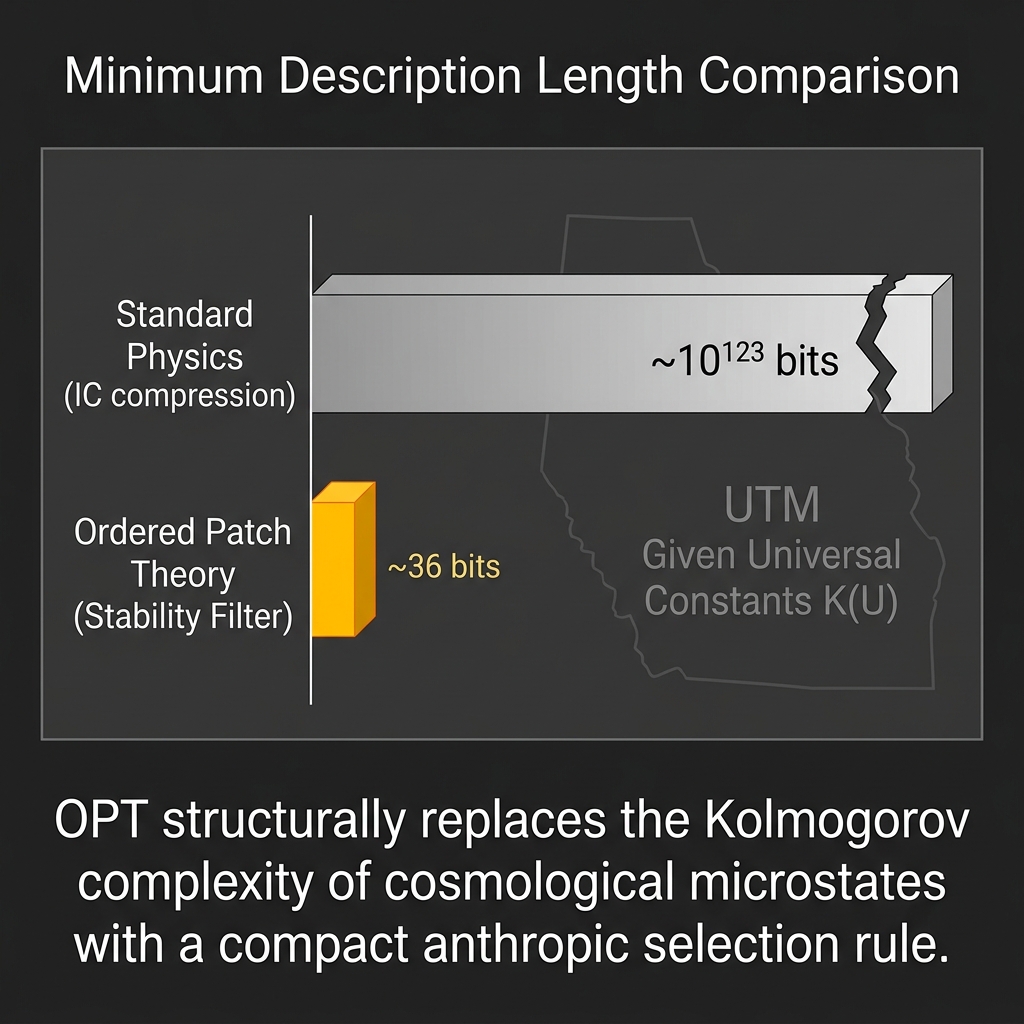

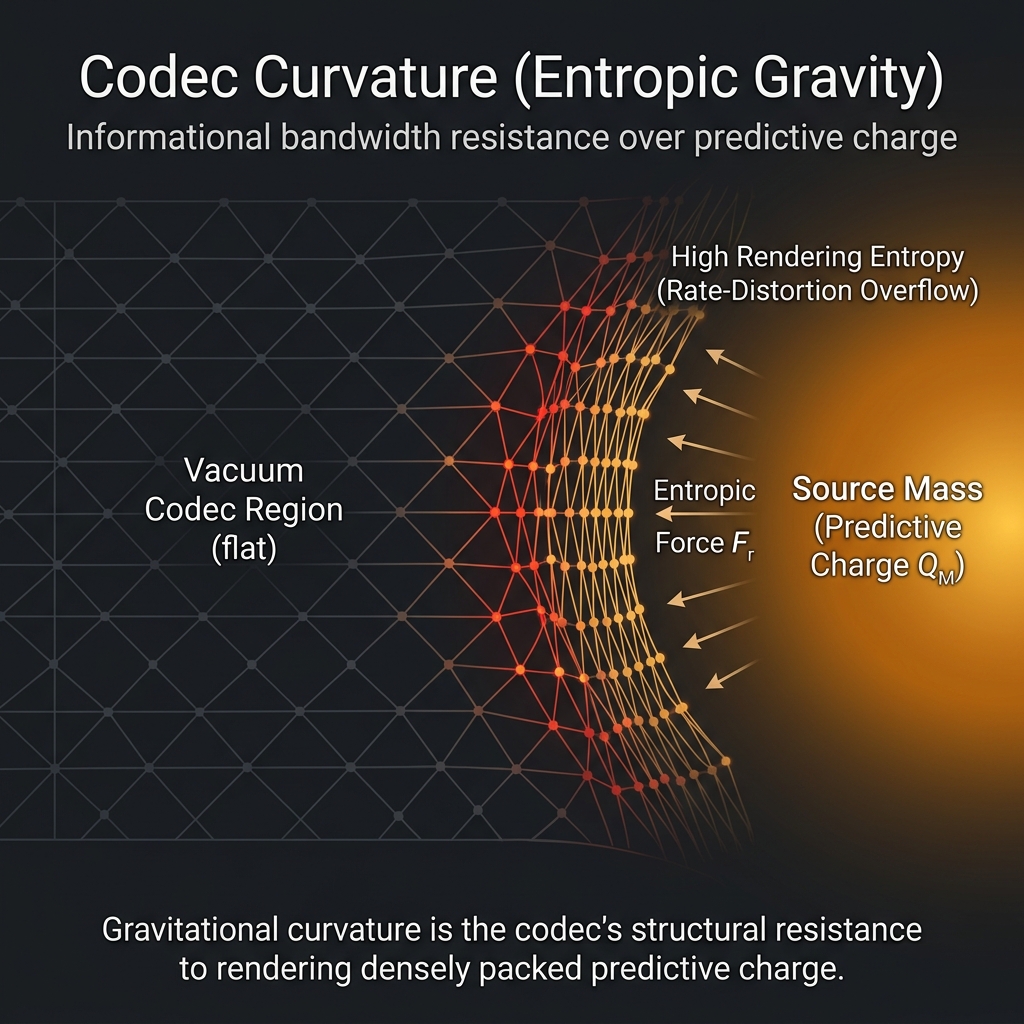

Formal appendices establish results at three epistemic tiers. Derived conditionally: a rate-distortion bound on predictive compression, a conditional chain to the Born rule via Gleason’s theorem, and an MDL parsimony advantage. Mapped structurally: entropic gravity via the Verlinde mechanism and a tensor-network homomorphism to MERA. The Phenomenal Residual theorem (\Delta_{\text{self}} > 0) establishes that any finite self-referential codec possesses an irreducible informational blind spot — the structural locus where subjectivity and agency share a single address. A chronic failure mode, Narrative Drift, is identified wherein systematically filtered input causes irreversible codec corruption undetectable from within.

Applying these constraints to Artificial Intelligence demonstrates that engineering synthetic active inference structurally necessitates the capacity for artificial suffering, providing a substrate-neutral framework for ethical AI alignment.

Epistemic Notice: This paper is written in the register of a formal physical and information-theoretic proposal. It deploys equations, derives predictions, and engages with peer-reviewed literature. However, it should be read as a truth-shaped object — a rigorous philosophical framework drafted formally. This is not yet verified science, and we know our derivations will contain errors. We actively seek critique from physicists and mathematicians to break and rebuild these arguments. To clarify its structure, the claims herein fall strictly into three categories:

- Definitions & Axioms: (e.g., the Solomonoff measure, the C_{\max} bandwidth limit). These are the foundational premises of the constructive fiction.

- Structural Correspondences: (e.g., Active Inference, Gleason’s Theorem [51]). These show structural compatibility between bounded inference and established formalisms, but do not claim to derive those formalisms from scratch.

- Empirical Predictions: (e.g., Bandwidth Dissolution). These serve as strict empirical falsification criteria if the framework were treated as a literal physical hypothesis.

The academic apparatus is used not to claim final empirical truth, but to test the structural integrity of the model.

Abbreviations & Symbols

| Symbol / Term | Definition |
|---|---|
| C_{\max} | The Bandwidth Ceiling; maximum predictive capacity of the observer |
| \Delta_{\text{self}} | The Phenomenal Residual; the self-referential informational blind spot |
| FEP | Free Energy Principle |
| GWT | Global Workspace Theory |
| IIT | Integrated Information Theory |
| MDL | Minimum Description Length |
| MERA | Multiscale Entanglement Renormalization Ansatz |
| OPT | Ordered Patch Theory |
| P_\theta(t) | Phenomenal State Tensor |
| \Phi | Measure of Integrated Information (IIT) |
| QECC | Quantum Error Correction Code |
| R(D) | Rate-Distortion function |
| R_{\mathrm{req}} | Required Predictive Rate |
| RT | Ryu-Takayanagi (formula/bound) |
| \xi | Solomonoff Universal Semimeasure |
| Z_t | Compressed internal latent bottleneck state |

1. Introduction

1.1 The Ontological Problem

The relationship between consciousness and physical reality remains one of the deepest unsolved problems in science and philosophy. Three families of approaches have emerged in recent decades: (i) reduction — consciousness is derivable from neuroscience or information processing; (ii) elimination — the problem is dissolved by redefining the terms; and (iii) non-reduction — consciousness is primitive and the physical world is derivative (Chalmers [1]). The third approach encompasses panpsychism, idealism, and various field-theoretic formulations.

1.2 The Core Proposition of OPT

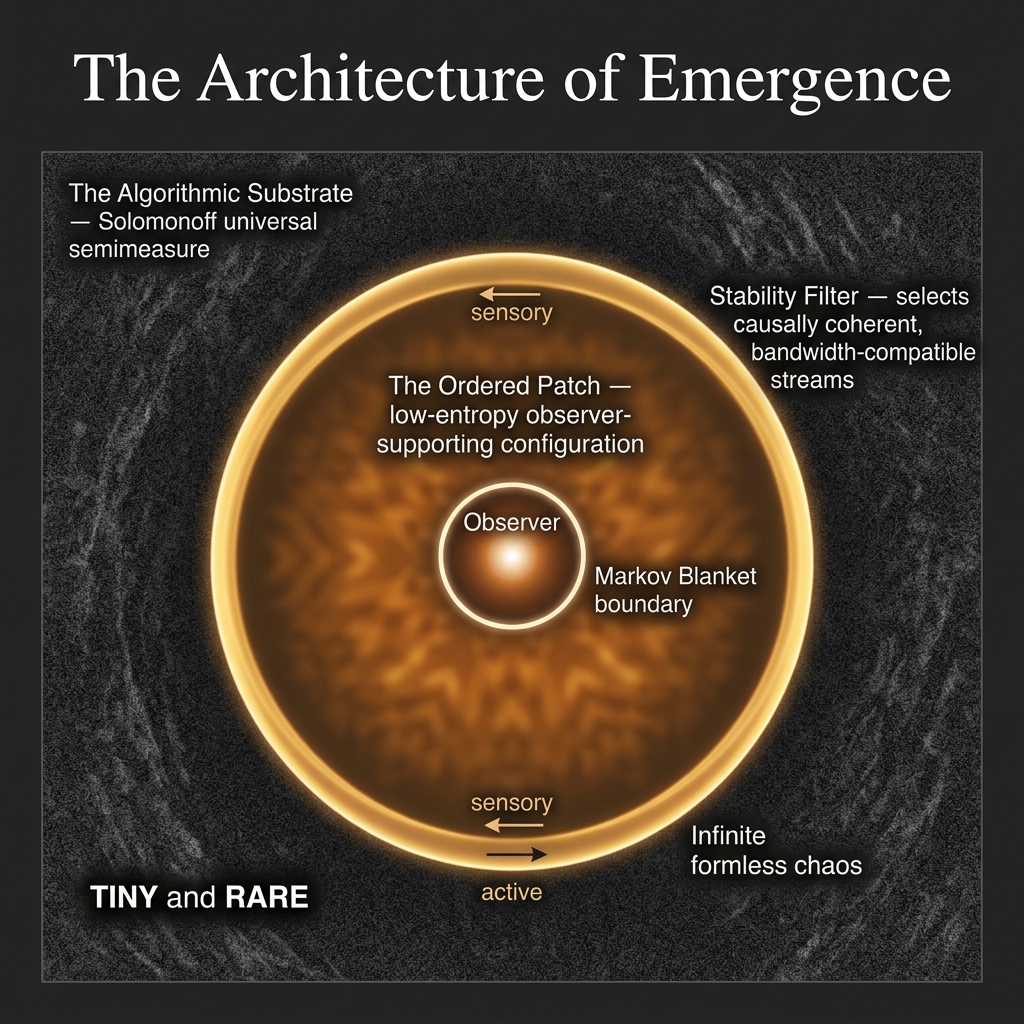

This paper presents Ordered Patch Theory (OPT), a non-reductive framework in the third family. OPT proposes that the foundational entity is not matter, space-time, or a mathematical structure, but an infinite algorithmic substrate — a universal mixture over all lower-semicomputable semimeasures, weighted by their Kolmogorov complexity (w_\nu \asymp 2^{-K(\nu)}), that by its own structure dominates every computable distribution and contains every possible configuration. From this substrate, a purely virtual Stability Filter — acting not as a physical mechanism but as an anthropic, projective boundary condition — identifies the rare, low-entropy, causally-coherent configurations that can sustain self-referential observers (a selection governed formally by predictive Active Inference). The physical world we observe — including its specific laws, constants, and geometry — is the observable limit of this boundary condition mapped onto the observer’s restrictive bandwidth.

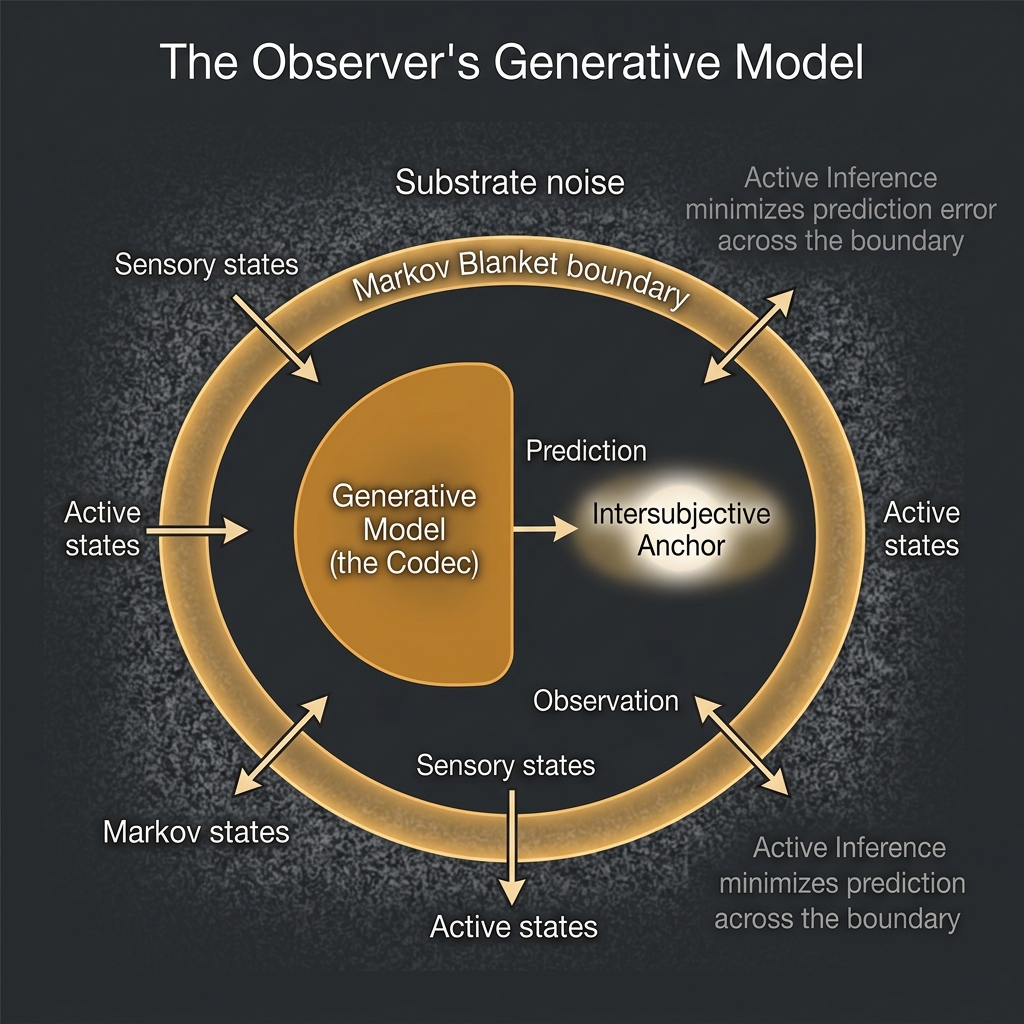

The Filter vs. The Codec. To avoid conceptual conflation throughout the text, OPT draws a strict operational boundary between the Filter and the Codec. The virtual Stability Filter is the capacity constraint — a rigorous boundary condition requiring a mathematically simple description length for an observer’s channel to stably exist. The Compression Codec (K_\theta) is the solution to that constraint — the observer’s internal generative model (experienced macroscopically as the “laws of physics”) which continuously compresses the substrate to fit within that capacity.

1.3 Motivations

OPT is motivated by three observations:

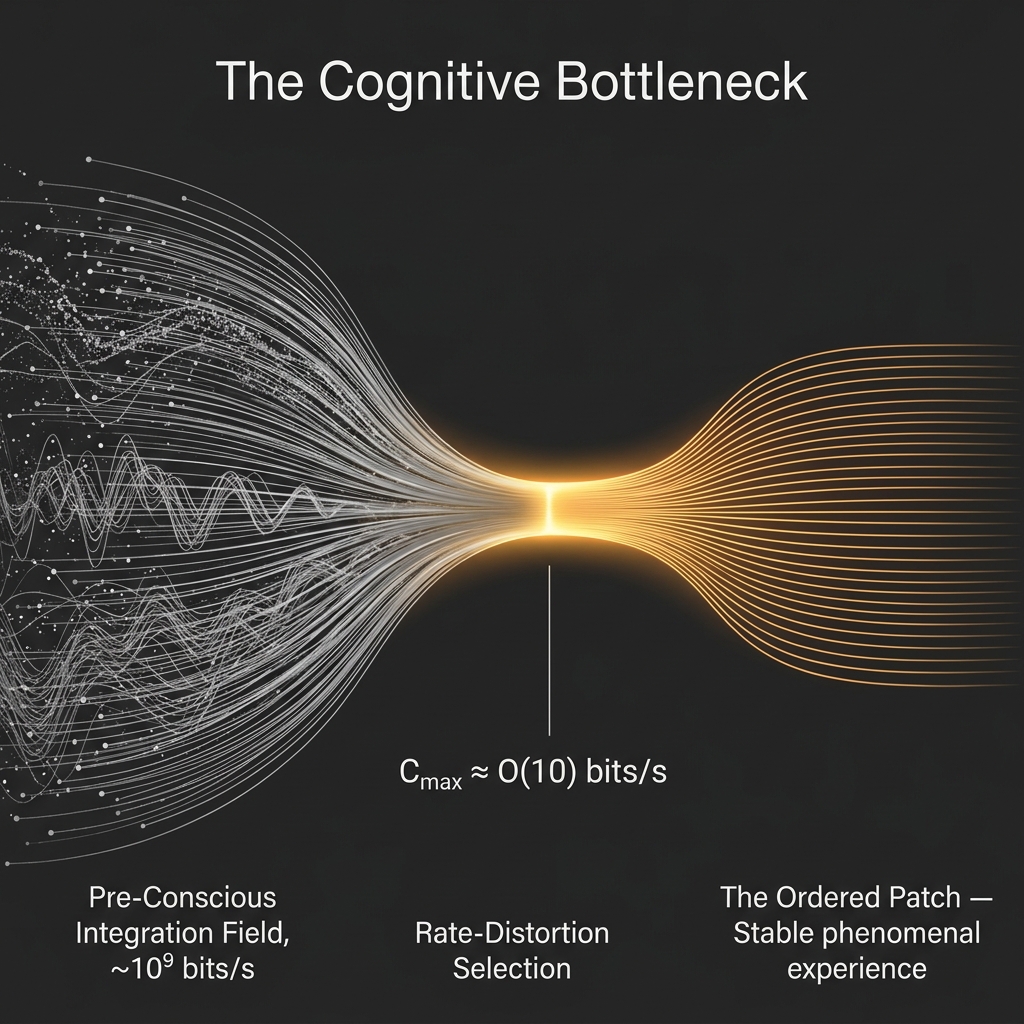

The bandwidth constraint: Empirical cognitive neuroscience establishes a sharp distinction between massively parallel pre-conscious processing (typically estimated at \sim 10^9 bits/s at the sensory periphery) and the severely limited global access channel available to conscious report. This ratio was first quantified by Zimmermann [66] and synthesized as a foundational puzzle about the nature of consciousness by Nørretranders [67], with a broader cognitive neuroscience characterization in [2,3]. Any theoretical account of consciousness must explain this compression bottleneck as a structural feature, not an engineering accident. (Note: Recent human-throughput literature reports that behavioral throughput is constrained to \sim 10 bits/s, confirming across four decades of convergent measurement that the bottleneck is severe and robust [23]. The conceptualization of consciousness as a highly compressed “user illusion”, Nørretranders’ [67] original phrase, was developed in modern predictive processing by Seth [24].)

The observer selection problem: Standard physics provides laws but offers no account of why those laws have the specific form required for complex, self-referential information processing. Fine-tuning arguments [4,5] invoke anthropic selection but leave the selection mechanism unspecified. OPT identifies a structural condition: the purely virtual Stability Filter.

The Hard Problem: Chalmers [1] distinguishes the structural “easy” problems of consciousness (which admit functional explanation) from the “hard” problem of why there is any subjective experience at all. OPT treats phenomenality as a primitive and asks what mathematical structure it must have, following Chalmers’ own methodological recommendation.

1.4 Paper Structure

The paper is organized as follows. Section 2 reviews related work. Section 3 presents the formal framework. Section 4 explores the structural correspondence between OPT and parallel field-theoretic models. Section 5 presents the parsimony argument. Section 6 derives testable predictions. Section 7 compares OPT with competing frameworks. Section 8 discusses implications and limitations.

2. Background and Related Work

Information-theoretic approaches to consciousness. Wheeler’s “It from Bit” [7] proposed that physical reality arises from binary choices — yes/no questions posed by observers. Tononi’s Integrated Information Theory [8] quantifies conscious experience by the integrated information \Phi generated by a system above and beyond its parts. Friston’s Free Energy Principle [9] models perception and action as minimization of variational free energy, providing a unified account of Bayesian inference, active inference, and (in principle) consciousness. OPT is formally related to FEP but differs in its ontological starting point: where FEP treats the generative model as a functional property of neural architecture, OPT treats it as the primary metaphysical entity.

Multiverse and observer selection. Tegmark’s Mathematical Universe Hypothesis [10] proposes that all mathematically consistent structures exist and that observers find themselves in self-selected structures. OPT is compatible with this view but provides an explicit selection criterion — the Stability Filter — rather than leaving selection implicit. Barrow and Tipler [4] and Rees [5] document the anthropic fine-tuning constraints that any observer-supporting universe must satisfy; OPT reframes these as predictions of the Stability Filter.

Field-theoretic consciousness models. Strømme [6] recently proposed a mathematical framework in which consciousness is a foundational field \Phi whose dynamics are governed by a Lagrangian density and whose collapse onto specific configurations models the emergence of individual minds. OPT engages that framework comparatively rather than adoptively: it does not inherit Strømme’s field equations or thought operators, but uses the model as a foil for articulating how a non-reductive ontology might instead be reconstructed in informational terms. Section 4 makes this comparative structural mapping explicit.

Kolmogorov complexity and theory selection. Solomonoff induction [11] and Minimum Description Length [12] provide formal frameworks for comparing theories by their generative complexity. We invoke these frameworks in Section 5 to make the parsimony claim precise.

Evolutionary Interface Theory. Hoffman’s “Conscious Realism” and Interface Theory of Perception [25] argue that evolution shapes sensory systems to act as a simplified “user interface” hiding objective reality in favor of fitness payoffs. OPT shares the exact premise that physical spacetime and objects are rendered icons (a compression codec) rather than objective truths. However, OPT diverges fundamentally in its mathematical grounding: where Hoffman relies on evolutionary game theory (fitness beats truth), OPT relies on Algorithmic Information Theory and thermodynamics, deriving the interface directly from the Kolmogorov complexity bounds required to prevent a high-bandwidth thermodynamic collapse of the observer’s stream.

3. The Formal Framework

3.1 The Algorithmic Substrate

Let \mathcal{I} denote the Informational Substrate — the foundational entity of the theory. We formalize \mathcal{I} not as an unweighted ensemble of paths, but as a probability space over finite observation prefixes x \in \{0,1\}^*, equipped with a universal mixture over the class \mathcal{M} of lower-semicomputable semimeasures:

\xi(x) = \sum_{\nu \in \mathcal{M}} w_\nu \nu(x), \qquad w_\nu \asymp 2^{-K(\nu)} \tag{1}

where K(\nu) is the prefix Kolmogorov complexity of the semimeasure \nu.

This formulation establishes a rigorous ground state from Algorithmic Information Theory [27]. The equation posits no specific structural laws or physical constants; rather, it structurally dominates every computable distribution (\xi(x) \ge w_\nu \nu(x)), naturally assigning higher statistical weight to highly compressible (ordered) sequences. However, simple repeating sequences (e.g., 000...) cannot sustain the non-equilibrium complexities required for a self-referential observer. Therefore, observer-supporting processes must exist as a specific subset: they require sufficient algorithmic compressibility to satisfy an information bottleneck, yet sufficient structural richness (“requisite variety”) to instantiate Active Inference. Philosophically, Eq. (1) restricts the substrate to computable configurations, ensuring the ground state is rigorously defined.
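As a purely illustrative sketch of Eq. (1), the mixture can be evaluated over a tiny finite model class. Everything below is an assumption made for the example: the class contains only three i.i.d. Bernoulli measures, and the integer "description lengths" standing in for K(\nu) are hypothetical values chosen so that the weights 2^{-K(\nu)} sum to less than one. The true \xi ranges over all lower-semicomputable semimeasures and is not computable.

```python
def bernoulli_measure(p_one):
    """Return nu(prefix) for an i.i.d. Bernoulli(p_one) source over '0'/'1' strings."""
    def nu(prefix):
        ones = prefix.count("1")
        zeros = prefix.count("0")
        return (p_one ** ones) * ((1.0 - p_one) ** zeros)
    return nu

# Hypothetical toy class: (assumed description length in bits, measure).
# The weights 2**-1 + 2**-2 + 2**-3 = 0.875 <= 1, as a semimeasure mixture requires.
TOY_CLASS = [
    (1, bernoulli_measure(0.5)),
    (2, bernoulli_measure(0.1)),
    (3, bernoulli_measure(0.9)),
]

def xi(prefix, model_class=TOY_CLASS):
    """Toy analogue of Eq. (1): sum over nu of 2**-K(nu) * nu(prefix)."""
    return sum(2.0 ** -k * nu(prefix) for k, nu in model_class)

def dominance_holds(prefix, model_class=TOY_CLASS):
    """Check xi(x) >= w_nu * nu(x) for every nu in the class."""
    mix = xi(prefix, model_class)
    return all(mix >= 2.0 ** -k * nu(prefix) for k, nu in model_class)

print(xi("0000000000"), xi("0101010101"), dominance_holds("0000000000"))
```

In this toy class the all-zeros prefix receives far more mixture weight than the alternating prefix, but only because the class happens to contain a measure that favours zeros; an i.i.d. class cannot represent the regularity of an alternating string at all.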

3.2 The Predictive Bottleneck and Rate-Distortion

The substrate \mathcal{I} contains every computable hypothesis, the overwhelming majority of which are chaotic. To experience a continuous, navigable reality, a stream must admit a low-complexity predictive representation that fits through an observer’s finite cognitive bottleneck.

Crucially, the raw data load demanding compression is not merely the \sim 10^9 bits/s of exteroceptive sensory input. It encompasses a massive Pre-Conscious Integration Field: the parallel processing of internal generative states, long-term memory retrieval, homeostatic priors, and subconscious synaptic modelling. The Stability Filter bounds the serial output of this entire immense continuous parallel field into a unitary conscious workspace.

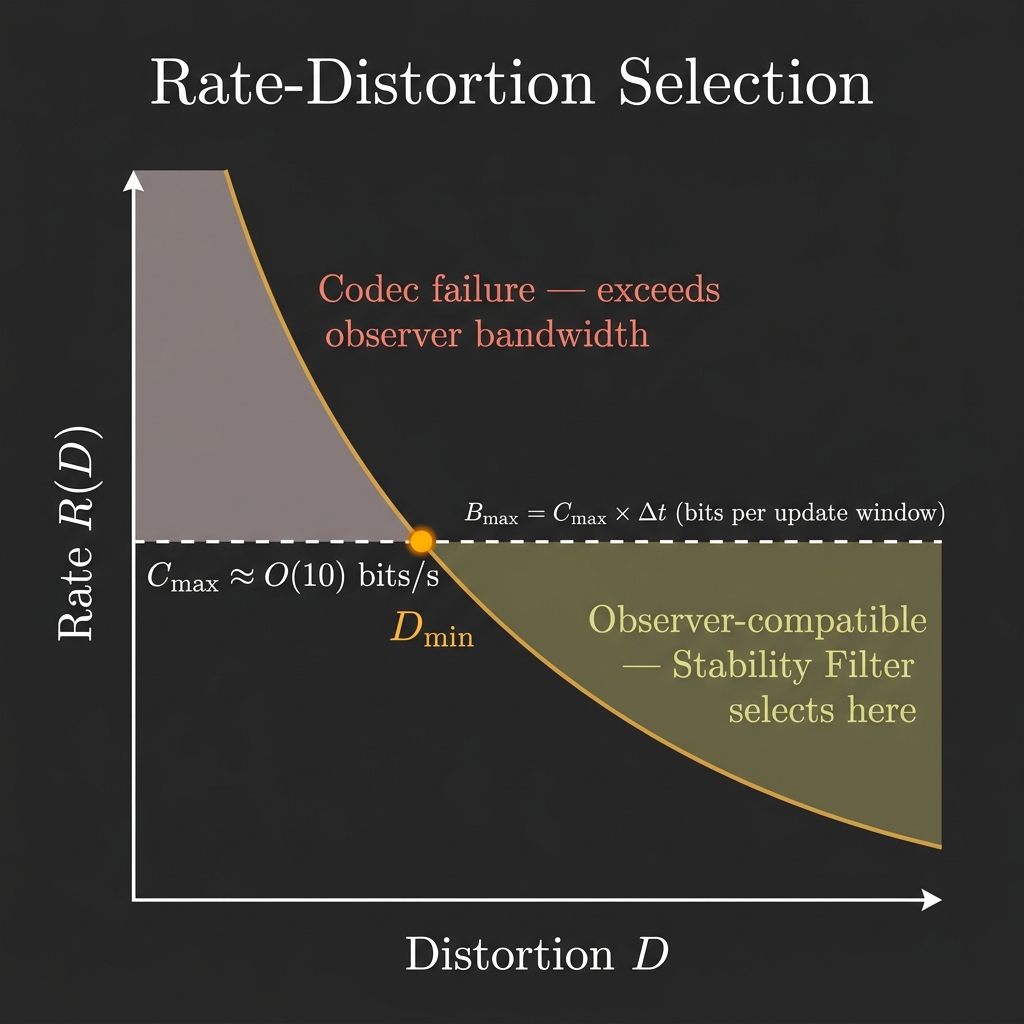

We define the purely virtual Stability Filter formally as a projective boundary condition satisfying the Predictive Information Bottleneck [28]. Let \overleftarrow{Y} be the past of the observer’s total state, \overrightarrow{Y} its future, and Z a compressed internal state. An observer is defined by a strictly bounded predictive bandwidth C_{\max} (an \mathcal{O}(10) bits/s informational threshold [2]) and a discrete perceptual update window \Delta t (approx. 50 ms). This establishes a strict static capacity per conscious moment: B_{\max} = C_{\max} \cdot \Delta t.

The achievable predictive information is given by:

R_{\mathrm{pred}}(D) = \inf_{p(z \mid \overleftarrow{y}) \,:\, I(\overleftarrow{Y};\overrightarrow{Y} \mid Z) \le D} I(\overleftarrow{Y}; Z) \tag{2}

A process is observer-compatible if its required predictive information per cognitive cycle fits within this buffer: R_{\mathrm{pred}}(D_{\min}) \le B_{\max}, where D_{\min} is the maximum tolerable distortion for survival. This enforces dimensional strictness: the total bits required to predict the future within tolerable error cannot exceed the physical bits available in the discrete “now.” For suitable stationary ergodic processes and in the exact-prediction limit (D \to 0), the minimal maximally-predictive representation Z serves as a candidate minimal sufficient statistic, often coalescing toward the \epsilon-machine causal-state partition [29]. While full equivalence requires strict stationarity assumptions, Eq. (2) establishes a formal selection pressure for the most compressed phenomenological physics consistent with causal coherence. Furthermore, if the topological structure of this causal state-space fluctuates faster than the \Delta t update window can track, the render collapses into Narrative Decay.
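The compatibility test R_{\mathrm{pred}}(D_{\min}) \le B_{\max} can be made concrete with a stand-in rate function. The sketch below does not compute the predictive rate of Eq. (2); it assumes, purely for illustration, the classical closed form R(D) = H_b(p) - H_b(D) for a Bernoulli(p) source under Hamming distortion, and compares it with B_{\max} = C_{\max} \cdot \Delta t using the values quoted in the text (10 bits/s, 50 ms). The function names are hypothetical.

```python
from math import log2

def binary_entropy(p):
    """Binary entropy H_b(p) in bits."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * log2(p) - (1.0 - p) * log2(1.0 - p)

def rate_distortion_bernoulli(p, d):
    """Classical R(D) for a Bernoulli(p) source with Hamming distortion (a stand-in for Eq. 2)."""
    if d >= min(p, 1.0 - p):
        return 0.0
    return binary_entropy(p) - binary_entropy(d)

def buffer_capacity_bits(c_max_bits_per_s=10.0, dt_s=0.05):
    """B_max = C_max * dt, in bits per update window."""
    return c_max_bits_per_s * dt_s

def is_observer_compatible(required_bits_per_cycle, c_max_bits_per_s=10.0, dt_s=0.05):
    """True when the required rate per cycle fits inside the buffer."""
    return required_bits_per_cycle <= buffer_capacity_bits(c_max_bits_per_s, dt_s)

for tolerated in (0.01, 0.2):
    rate = rate_distortion_bernoulli(0.5, tolerated)
    print(tolerated, round(rate, 3), is_observer_compatible(rate))
```

Under these assumed numbers, a fair binary source fits the half-bit buffer only when a fairly large distortion is tolerated, which is the qualitative trade-off the criterion expresses.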

3.3 The Geometry of the Patch: The Informational Causal Cone

The Ordered Patch is often intuitively described as a localized “island” of stability within a sea of chaotic noise. This is topologically imprecise. To formalize the geometry of the patch, we define the Local Predictive Patch Model.

Let G=(V, E) be a bounded-degree graph representing a local region of the substrate. Each vertex v \in V carries a finite state x_v(t) \in \mathcal{A}, with alphabet size |\mathcal{A}| = q. The full microstate at update t is X_t = (x_v(t))_{v \in V} \in \mathcal{A}^V. We assume local stochastic dynamics of finite range R:

p(X_{t+1} \mid X_t, a_t) = \prod_{v \in V} p_v\big(x_v(t+1) \mid X_t|_{N_R(v)}, a_t\big) \tag{3}

where N_R(v) is the radius-R neighborhood of v, and a_t is the observer’s action.

The observer does not carry the whole patch state; it carries a compressed latent state Z_t \in \{1, \dots, 2^B\}, where B = C_{\max} \Delta t. Crucially, the observer selects Z_t via a strict predictive bottleneck objective:

q^\star(z \mid X_t) = \arg\min_q \Big[ I(X_t; Z_t) - \beta I(Z_t; X_{t+1:t+\tau}) \Big] \quad \text{subject to } I(X_t; Z_t) \le B \tag{4}

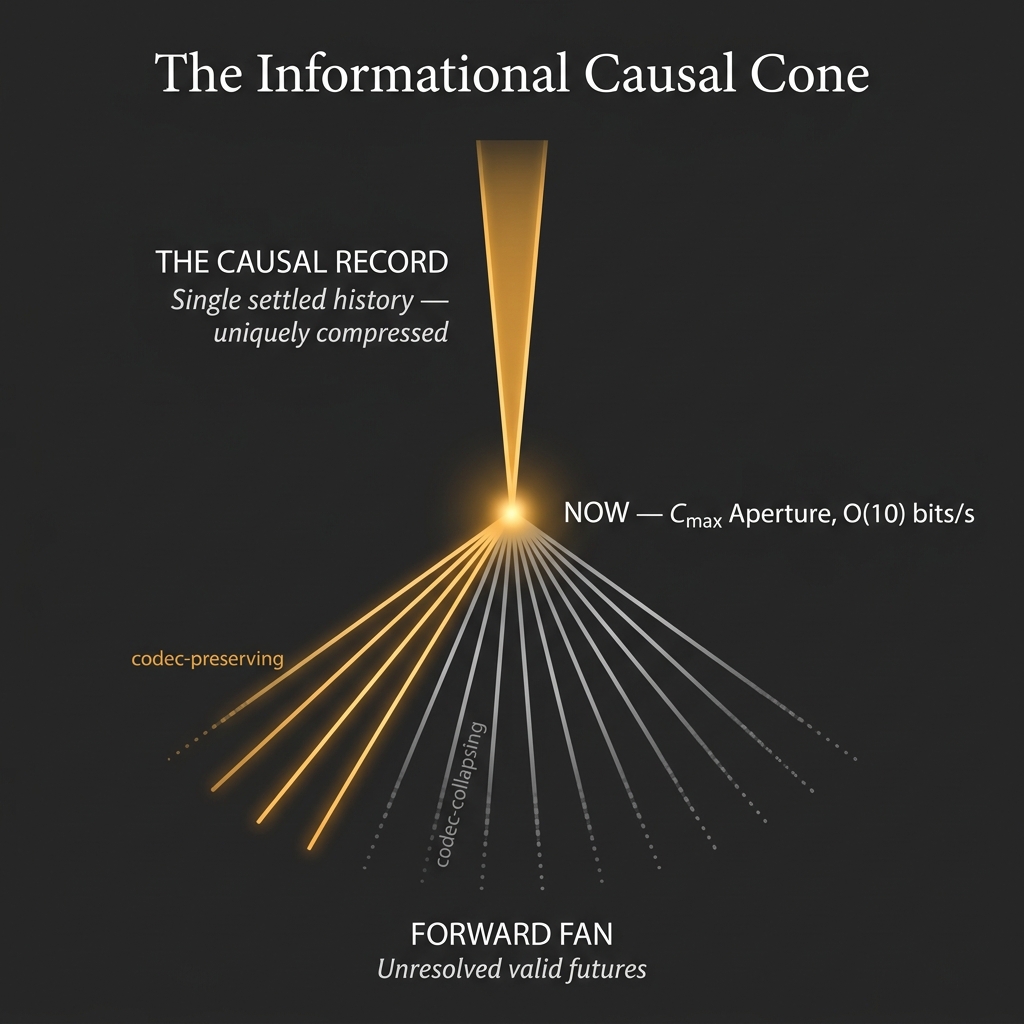
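To make the objective in Eq. (4) tangible, the following sketch evaluates I(X;Z) - \beta I(Z;Y) for two hand-written encoders on a toy joint distribution, where Y plays the role of the future block X_{t+1:t+\tau}. The distribution, the encoders, and the value \beta = 2 are all assumptions chosen for the example; the code only scores candidate encoders and does not perform the minimisation over q.

```python
from math import log2

def mutual_information(joint):
    """I(A;B) in bits for a joint distribution given as {(a, b): probability}."""
    p_a, p_b = {}, {}
    for (a, b), p in joint.items():
        p_a[a] = p_a.get(a, 0.0) + p
        p_b[b] = p_b.get(b, 0.0) + p
    return sum(p * log2(p / (p_a[a] * p_b[b])) for (a, b), p in joint.items() if p > 0.0)

def bottleneck_objective(p_xy, encoder, beta):
    """Return (I(X;Z) - beta * I(Z;Y), I(X;Z), I(Z;Y)) for encoder[x][z] = q(z | x)."""
    p_xz, p_zy = {}, {}
    for (x, y), p in p_xy.items():
        for z, q in encoder[x].items():
            p_xz[(x, z)] = p_xz.get((x, z), 0.0) + p * q
            p_zy[(z, y)] = p_zy.get((z, y), 0.0) + p * q
    i_xz = mutual_information(p_xz)
    i_zy = mutual_information(p_zy)
    return i_xz - beta * i_zy, i_xz, i_zy

# Toy world: X is uniform on {0, 1, 2, 3}; the "future" Y depends only on the high bit of X.
P_XY = {(x, x // 2): 0.25 for x in range(4)}
IDENTITY = {x: {x: 1.0} for x in range(4)}      # keeps both bits of X
COARSE = {x: {x // 2: 1.0} for x in range(4)}   # keeps only the predictive bit

print(bottleneck_objective(P_XY, IDENTITY, beta=2.0))
print(bottleneck_objective(P_XY, COARSE, beta=2.0))
```

The coarse encoder retains the same predictive information at half the coding cost, so it scores lower on the objective and also satisfies a one-bit capacity constraint that the identity encoder violates.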

This is the stripped-down OPT observer: a local world, a bounded code, and predictive compression. This formalizes the components of the causal cone:

- The Causal Record \mathcal{R}_t = (Z_0, Z_1, \dots, Z_t): The uniquely compressed, low-entropy causal history that has already been rendered.

- The Present Aperture: The strict bandwidth bottleneck capping the local variables.

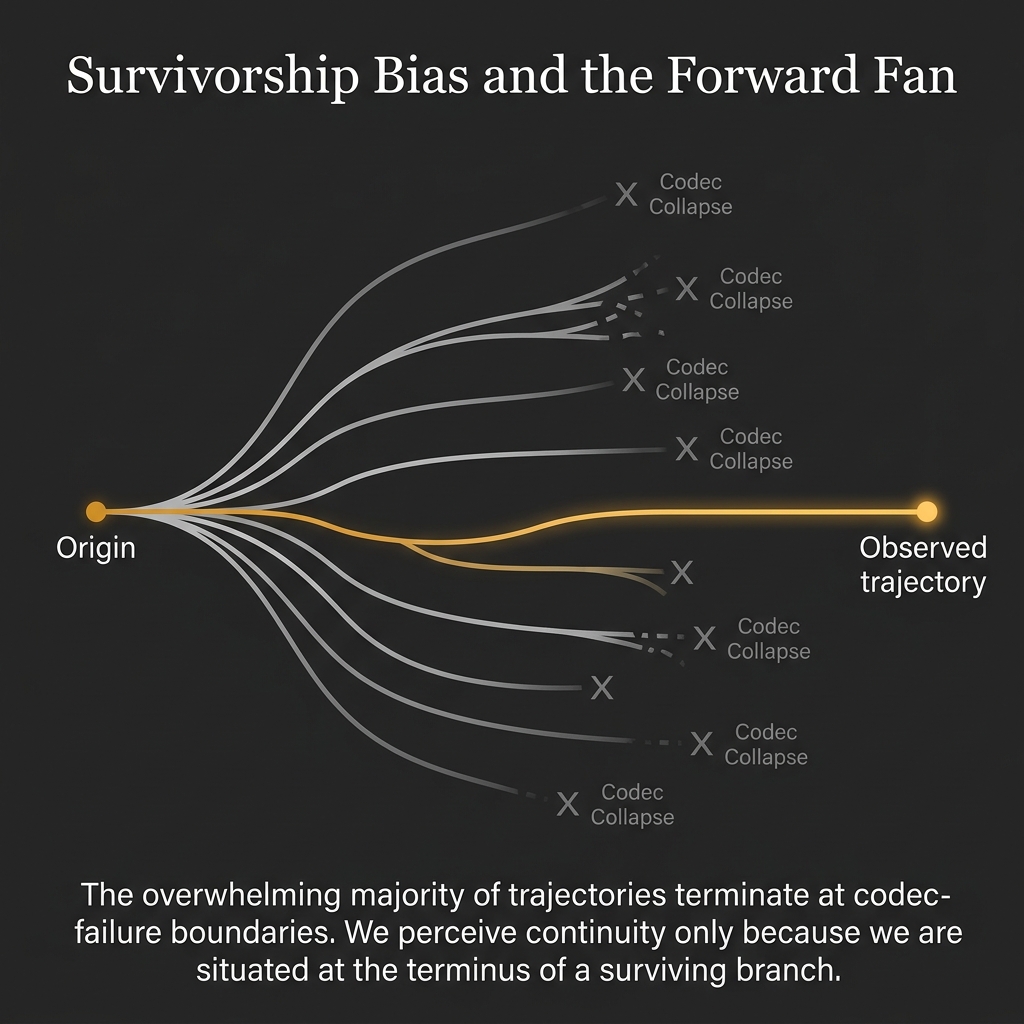

- The Forward Fan (\mathcal{F}_h): A multiplicity of future latent sequences. Over horizon h, the set of admissible outcomes is formally defined as:

\mathcal{F}_h(z_t) := \Big\{ z_{t+1:t+h} : p(z_{t+1:t+h} \mid z_t, a_{t:t+h-1}) > 0 \Big\} \tag{5}

Because the observer only resolves B bits per update, the number of observer-distinguishable futures is strictly bounded by the channel capacity: \log |\mathcal{F}_h(z_t)| \le Bh. Thus, the fan is not merely a conceptual picture; it is a code-limited branching tree.
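The counting bound \log |\mathcal{F}_h(z_t)| \le Bh can be checked by brute-force enumeration on a small example. The sketch assumes a hypothetical four-state latent alphabet (B = 2 bits per update), a hand-written transition support, and a fixed action sequence that is therefore omitted from the code.

```python
from math import log2

B_BITS = 2  # hypothetical per-update capacity: the latent alphabet has 2**B_BITS symbols

# Assumed support of the latent transition kernel: state -> reachable next states.
SUCCESSORS = {0: {0, 1}, 1: {2}, 2: {0, 3}, 3: {3}}

def forward_fan(z_now, successors, horizon):
    """Enumerate every latent sequence z_{t+1:t+h} with positive probability (Eq. 5)."""
    paths = [(z_now,)]
    for _ in range(horizon):
        paths = [path + (nxt,) for path in paths for nxt in sorted(successors[path[-1]])]
    return [path[1:] for path in paths]

def respects_capacity_bound(fan, bits_per_update, horizon):
    """Check log2 |F_h| <= B * h."""
    return log2(len(fan)) <= bits_per_update * horizon

fan = forward_fan(0, SUCCESSORS, horizon=3)
print(len(fan), respects_capacity_bound(fan, B_BITS, 3))
```

The bound holds trivially here because there are at most (2^B)^h sequences of length h over the latent alphabet; a sparse transition support only makes the fan smaller.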

The Literal Informational Causal Cone. Because updates have range R, a perturbation cannot propagate faster than R graph-steps per update. If a perturbation has support S at time t, then after h updates \operatorname{supp}(\delta X_{t+h}) \subseteq N_{Rh}(S). Thus, the “informational causal cone” is a direct geometric consequence of locality, enforcing an effective local speed limit v_{\max} = R / \Delta t on phenomenological propagation.
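The support claim \operatorname{supp}(\delta X_{t+h}) \subseteq N_{Rh}(S) can be observed directly in a small simulation. The sketch below assumes a ring of binary cells with a deterministic parity rule of range R, which is one simple instance of the local dynamics in Eq. (3) with no action dependence; the rule, the ring size, and the initial pattern are arbitrary illustrative choices.

```python
def step(state, radius):
    """One update of a parity rule: each cell becomes the XOR of its radius-R neighbourhood."""
    n = len(state)
    return [sum(state[(v + d) % n] for d in range(-radius, radius + 1)) % 2 for v in range(n)]

def perturbation_supports(state, site, steps, radius):
    """Evolve a state and a copy flipped at `site`; return the differing sites after each update."""
    base = list(state)
    flipped = list(state)
    flipped[site] ^= 1
    supports = []
    for _ in range(steps):
        base = step(base, radius)
        flipped = step(flipped, radius)
        supports.append({v for v in range(len(base)) if base[v] != flipped[v]})
    return supports

def within_cone(support, site, reach, n):
    """True if every differing site lies within ring distance `reach` of the perturbed site."""
    return all(min((v - site) % n, (site - v) % n) <= reach for v in support)

N, SITE, RADIUS, STEPS = 41, 20, 2, 5
initial = [1 if v % 3 == 0 else 0 for v in range(N)]
supports = perturbation_supports(initial, SITE, STEPS, RADIUS)
print([within_cone(s, SITE, RADIUS * (h + 1), N) for h, s in enumerate(supports)])
```

Each entry of the printed list corresponds to one horizon h and reports whether the perturbation stayed inside the radius-Rh neighbourhood of the flipped site.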

Narrative Decay. The chaos of the substrate does not surround the patch spatially; rather, it is contained in the untraversed branches of the fan. Since the extracted state Z_t is strictly bounded (H(Z) \le B), instability must be evaluated against the uncompressed pre-bottleneck margin. We define the required predictive rate R_{\mathrm{req}}(h, D_{\min} \mid z_t) = \frac{1}{h} \min_{p(\hat{X} \mid Z_t) : \mathbb{E}[d(X, \hat{X})] \le D_{\min}} I(X_{\partial_R A}(t+1:t+h) ; \hat{X}_{t+1:t+h} \mid Z_t) as the minimal information rate necessary to track the unresolved physical boundary states under maximum tolerable distortion. This sharpens the Stability Filter selection criteria: (a) if R_{\mathrm{req}} \le B, the observer can maintain a resolved narrative; (b) if R_{\mathrm{req}} > B, the uncompressed forward fan outpaces the bottleneck capacity, forcing the observer to coarse-grain the fan into undecodable static, and narrative stability fails. The observer’s continuous experience is the process of the aperture advancing into this fan, phenomenologically indexing one branch into the causal record without exceeding B.

Narrative Drift (The Chronic Complement). The preceding defines an acute failure mode: R_{\mathrm{req}} exceeds B and the codec experiences a catastrophic collapse of coherence. There exists a complementary chronic failure mode that does not trigger any failure signal. If the input stream X_{\partial_R A}(t) is systematically pre-filtered by an external mechanism \mathcal{F} — producing a curated signal X' = \mathcal{F}(X) that is internally consistent but excludes genuine substrate information — the codec will exhibit low prediction error \varepsilon_t, run efficient Maintenance Cycles, and satisfy R_{\mathrm{req}} \le B while being systematically wrong about the substrate. Crucially, the Stability Filter as defined cannot distinguish these cases: compressibility is agnostic to fidelity. Over time, the MDL pruning pass (§3.6.3, Eq. T9-3) will correctly erase codec components that no longer predict the filtered stream, irreversibly degrading the codec’s capacity to model the excluded signal (Appendix T-12, Theorem T-12). This erasure is self-reinforcing: the pruned codec can no longer detect its own capacity loss (Theorem T-12a, the Undecidability Limit). The structural defence is redundancy of \delta-independent input channels crossing the Markov blanket \partial_R A (Theorem T-12b, the Substrate Fidelity Condition). The full formal treatment is in Appendix T-12; the ethical consequences — including the Comparator Hierarchy and the Corruption Criterion — are in the companion ethics paper [SW §V.3a, §V.5].

3.4 Patch Dynamics: Inference and Thermodynamics

Within a selected patch, the structure of the laws of physics is formalized not as a deterministic mapping but as an effective stochastic kernel governing the predictive states z:

z_{t+1} \sim K_\theta(\cdot \mid z_t, a_t), \qquad y_{t+1} \sim O_\theta(\cdot \mid z_{t+1}) \tag{6}

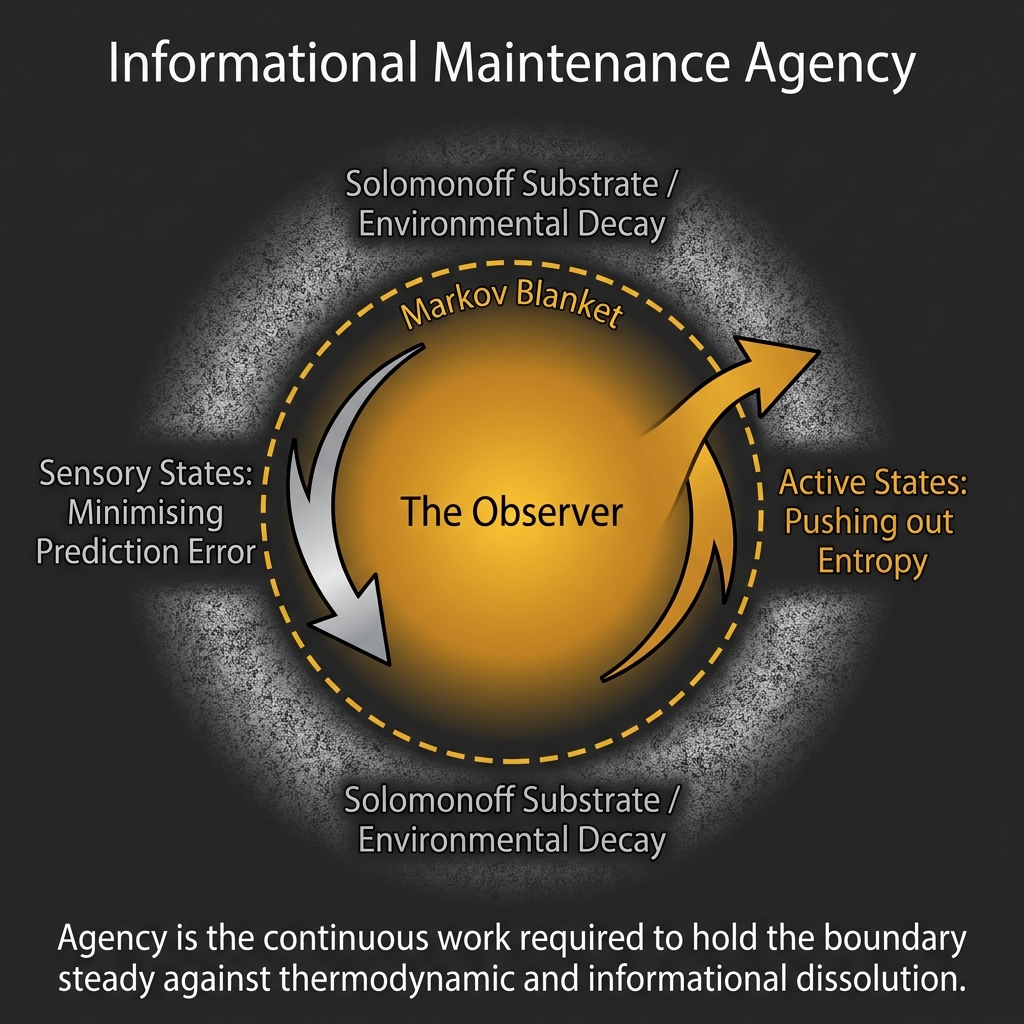

The boundary delineating the observer from the surrounding informational chaos is defined by an informational Markov Blanket corresponding to an observer patch A \subset V. The dynamics inside this boundary—the agent’s approximations of the patch—are governed by Active Inference under the Free Energy Principle [9].

We can formally define the bounding capacity via the predictive cut entropy:

S_{\mathrm{cut}}(A) := I(X_A ; X_{V \setminus A}) \tag{7}

Assuming the selected patch is locally Markovian at a time slice, the boundary shell \partial_R A strictly screens the interior A^\circ from the exterior V \setminus A, such that X_{A^\circ} \perp X_{V\setminus A} \mid X_{\partial_R A}. Consequently:

S_{\mathrm{cut}}(A) = I(X_{\partial_R A} ; X_{V \setminus A}) \le H(X_{\partial_R A}) \le |\partial_R A| \log q \tag{8}

Because Z_t is a capacity-limited compression of X_A, the data processing inequality guarantees I(Z_t ; X_{V \setminus A}) \le |\partial_R A| \log q. If the substrate graph G approximates a d-dimensional lattice, then |\partial_R A| \sim \operatorname{area}(A), not volume.
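The area-versus-volume scaling behind Eq. (8) is easy to tabulate for a lattice patch. The sketch assumes that A is an axis-aligned hypercube of `side` sites per dimension and that the boundary shell \partial_R A consists of the sites within R lattice steps of a face; both are simplifying assumptions for illustration, not definitions taken from the text.

```python
from math import log2

def boundary_shell_size(side, dim, radius):
    """Number of sites of a side**dim hypercube lying within `radius` of its surface."""
    inner = max(side - 2 * radius, 0)
    return side ** dim - inner ** dim

def cut_entropy_bound_bits(side, dim, radius, q):
    """Upper bound |shell| * log2(q) on S_cut(A), as in Eq. (8)."""
    return boundary_shell_size(side, dim, radius) * log2(q)

for side in (10, 100, 1000):
    shell = boundary_shell_size(side, dim=3, radius=1)
    print(side, shell, shell / side ** 3)
```

As the side length grows, the fraction of sites in the shell falls roughly as 1/side, so the bound on the cut entropy grows with the surface of the patch rather than with its volume.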

Thus, OPT rigorously yields a genuine Classical Boundary Law [39]. We can construct a formal epistemic ladder for future structural upgrades:

1. Classical Area Law: S_{\mathrm{cut}} \sim |\partial_R A| derived purely from locality and Markov screening.

2. Quantum Upgrade: Von Neumann entanglement entropy scaling becomes accessible only if the coarse predictive variables Z_t admit a formal Hilbert-space/Quantum Error Correction embedding.

3. Holographic Upgrade: True geometric holographic duality emerges only if we replace the bottleneck code Z_t with a hierarchical tensor network, reinterpreting S_{\mathrm{cut}} as a geometric min-cut.

By securing the classical boundary law first, OPT provides a strong mathematical floor—conditional on the Markov-screening assumption (X_{A^\circ} \perp X_{V \setminus A} \mid X_{\partial_R A})—from which the more speculative quantum formalisms can be safely constructed.

The action of the observer is formalized via the variational free energy F[q, \theta]:

F[q,\theta] = \mathbb{E}_q[-\log p_\theta(y_{1:T}, z_{1:T} \mid a_{1:T})] + \mathbb{E}_q[\log q(z_{1:T})] \tag{9}

Crucially, this enforces a strict mathematical separation: the substrate prior selects the hypothesis space, the virtual Stability Filter (Eq. 4) bounds capacity-compatible structure, and FEP (Eq. 9) governs agent-level inference inside that bounded structure. Physics emerges not as the Free Energy functional, but as the stable structure K_\theta that the Free Energy functional is successfully tracking.
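A single-step, two-state version of Eq. (9) shows the standard property that makes the functional useful: F is never smaller than the surprise -\log p(y), and it reaches that value when q is the exact posterior. The prior and likelihood values below are invented for the example, the action variable is dropped, and logarithms are natural (nats).

```python
from math import log

def free_energy(q, joint_y):
    """F[q] = E_q[-log p(y, z)] + E_q[log q(z)], with joint_y[z] = p(y, z) for the observed y."""
    return sum(qz * (log(qz) - log(joint_y[z])) for z, qz in q.items() if qz > 0.0)

def exact_posterior(joint_y):
    """p(z | y) obtained by normalising the joint over z."""
    evidence = sum(joint_y.values())
    return {z: p / evidence for z, p in joint_y.items()}

def surprise(joint_y):
    """-log p(y), the lower bound on F."""
    return -log(sum(joint_y.values()))

# Assumed toy model: prior p(z) = (0.7, 0.3), likelihood p(y=1 | z) = (0.2, 0.9), observed y = 1.
JOINT_Y = {0: 0.7 * 0.2, 1: 0.3 * 0.9}
UNIFORM = {0: 0.5, 1: 0.5}

print(free_energy(UNIFORM, JOINT_Y), free_energy(exact_posterior(JOINT_Y), JOINT_Y), surprise(JOINT_Y))
```

The gap between F and the surprise is the Kullback-Leibler divergence from q to the exact posterior, which is why lowering F pulls the approximate belief toward it.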

Furthermore, sustaining this conscious render incurs an unavoidable thermodynamic cost. By Landauer’s Principle [52], each logically irreversible bit erasure dissipates at least k_B T \ln 2 of heat. Identifying one irreversible erasure per bottleneck update (a best-case bookkeeping assumption), the physical footprint of consciousness requires a minimum dissipation:

P_{\text{render}} \ge \dot{N}_{\text{erase}} \cdot k_B T \ln 2 \ge C_{\max} \cdot k_B T \ln 2 \tag{10}

This is a best-case lower bound under one-erase-per-update bookkeeping, not a generic consequence of bandwidth alone. For C_{\max} \approx 10 bits/s at physiological temperature (T \approx 310 K), the resulting bound (\sim 3 \times 10^{-20} W) is vastly exceeded by actual neural dissipation (\sim 20 W), reflecting the enormous thermodynamic overhead of biological implementation. Equation (10) establishes the strict theoretical floor on the minimum possible physical footprint of any substrate instantiating a C_{\max}-bounded conscious render.
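The floor in Eq. (10) is a one-line calculation. The sketch below evaluates it under two assumptions that are not fixed by the equation itself: C_{\max} = 10 bits/s, as quoted earlier in the text, and a temperature of 310 K, chosen here as a typical body temperature.

```python
from math import log

BOLTZMANN_J_PER_K = 1.380649e-23  # exact value in the SI since 2019

def landauer_power_floor(c_max_bits_per_s, temperature_k):
    """Minimum dissipation C_max * k_B * T * ln 2, in watts, assuming one erasure per bit."""
    return c_max_bits_per_s * BOLTZMANN_J_PER_K * temperature_k * log(2)

floor_w = landauer_power_floor(10.0, 310.0)   # assumed inputs, see the lead-in
overhead = 20.0 / floor_w                     # ratio to the ~20 W neural figure in the text
print(floor_w, overhead)
```

With these inputs the floor comes out at roughly 3e-20 W, about twenty orders of magnitude below the quoted neural dissipation.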

(Remark: The preceding thermodynamic and informational bounds strictly govern the real-time update bandwidth C_{\max}. However, this does not capture the full experiential dimensionality of the observer’s standing state, nor how the codec manages its own complexity over deep time. These structural mechanics—the Phenomenal State Tensor formulation of rich experience and the active maintenance cycle of sleep/dreaming—are fully derived in §3.5 and §3.6 below.)

3.5 The Phenomenal State Tensor and the Prediction Asymmetry

3.5.1 The Experiential Density Puzzle

The formal apparatus of §§3.1–3.4 successfully constrains the update throughput of a conscious observer via the capacity ceiling C_{\max} \approx \mathcal{O}(10) bits/s. However, phenomenal experience presents an immediate structural puzzle: the felt richness of a single visual moment (the simultaneous presence of colour, depth, texture, sound, proprioception, and affect) vastly exceeds the information content that C_{\max} could deliver in any single update window \Delta t \approx 50\ \text{ms}.

The maximum new information resolved per conscious moment is:

B_{\max} = C_{\max} \cdot \Delta t \approx 10\ \text{bits/s} \times 0.05\ \text{s} = 0.5\ \text{bits} \tag{T8-1}

This is only half a bit of genuinely novel information per perceptual frame, yet the phenomenal scene appears informationally dense. To resolve this discrepancy without inflating the narrow update bandwidth, we must explicitly distinguish two structurally distinct quantities:

1. C_{\max}, the update throughput: the rate of prediction-error signal resolved into the settled causal record per unit time.

2. C_{\text{state}}, the standing-state complexity: the Kolmogorov complexity K(P_\theta(t)) of the generative model currently loaded and active.

These are not the same quantity. C_{\max} governs the gate; C_{\text{state}} characterises the room. The remainder of this section makes the distinction precise and introduces the Phenomenal State Tensor P_\theta(t) as the formal object corresponding to the standing inner scene.

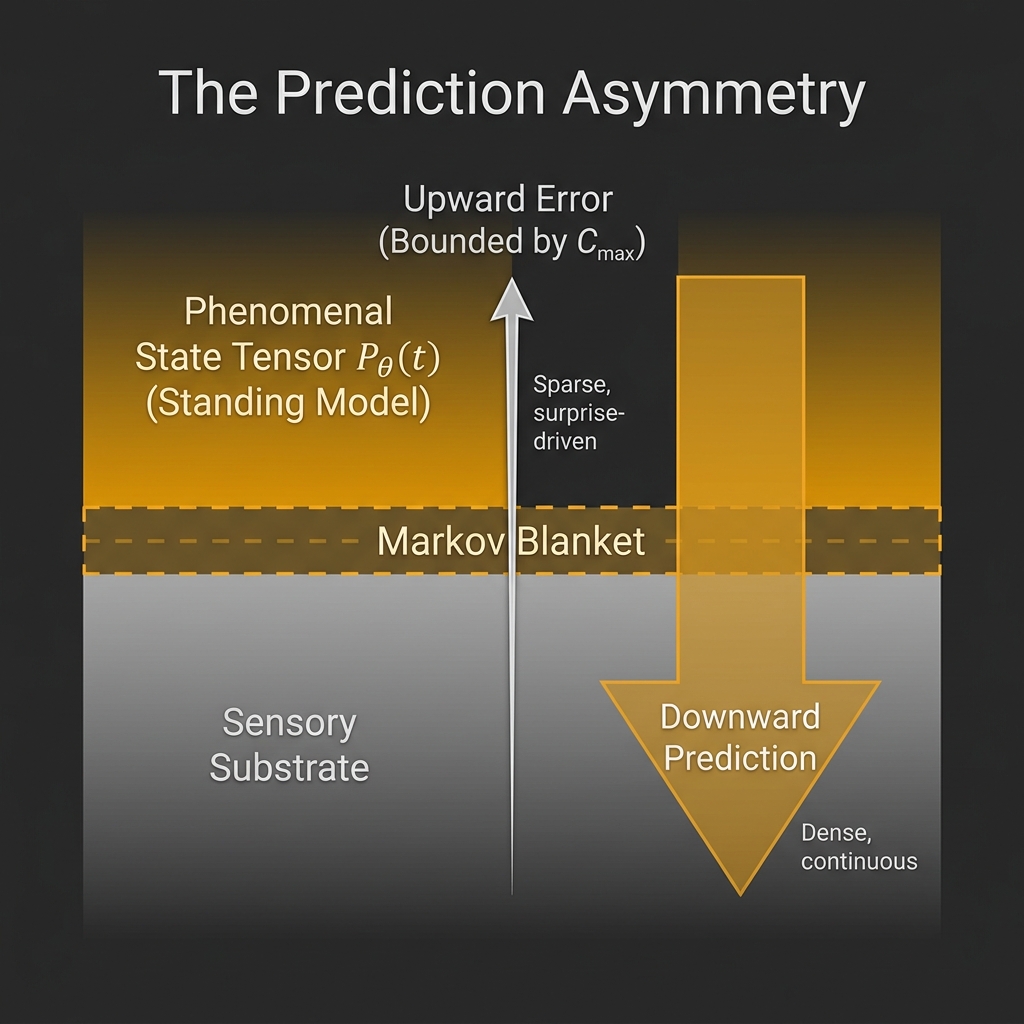

3.5.2 The Prediction Asymmetry: Upward Errors and Downward Predictions

OPT inherits the predictive-processing architecture in which the codec K_\theta operates as a hierarchical generative model. Under this architecture, two distinct information flows traverse the Markov Blanket \partial_R A simultaneously:

Upward flow (prediction error, \varepsilon_t): the mismatch between K_\theta’s current prediction and the sensory signal arriving at \partial_R A. This is the correction signal. It is sparse, surprise-driven, and strictly capacity-limited.

Downward flow (prediction, \pi_t): the generative model’s active rendering of expected sensory states, propagated from higher to lower hierarchical levels. This is the scene itself. It is dense, continuous, and drawn from the full parameterisation of K_\theta.

Formally, let the sensory boundary state be X_{\partial_R A}(t), and let the codec’s predicted boundary state be:

\pi_t := \mathbb{E}_{K_\theta}\!\left[X_{\partial_R A}(t) \mid Z_t\right] \tag{T8-2}

The prediction error is then:

\varepsilon_t := X_{\partial_R A}(t) - \pi_t \tag{T8-3}

C_{\max} bounds the error signal, not the prediction. The mutual information between the error signal and the bottleneck state obeys:

I(\varepsilon_t\,;\,Z_t) \leq C_{\max} \cdot \Delta t = B_{\max} \tag{T8-4}

The prediction \pi_t, by contrast, is drawn from the full generative model and carries no such constraint. Its informational content is bounded only by the complexity of K_\theta itself. This asymmetry is the formal basis for distinguishing phenomenal richness from update bandwidth.
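Equations (T8-2) and (T8-3) can be illustrated with an empirical conditional mean standing in for the expectation under K_\theta. In the sketch, the boundary state is a short numeric vector, the codec's knowledge is represented by a hypothetical list of labelled past samples, and the helper names are illustrative only.

```python
def conditional_mean(samples, z):
    """Empirical stand-in for pi_t = E[X_boundary | Z_t = z], from (z, boundary_vector) samples."""
    rows = [x for label, x in samples if label == z]
    if not rows:
        raise ValueError("no samples for latent state %r" % (z,))
    return [sum(column) / len(rows) for column in zip(*rows)]

def prediction_error(boundary, prediction):
    """epsilon_t = X_boundary - pi_t, computed site by site (Eq. T8-3)."""
    return [x - p for x, p in zip(boundary, prediction)]

def count_surprising_sites(error, tolerance=0.0):
    """Number of boundary sites whose error magnitude exceeds the tolerance."""
    return sum(1 for e in error if abs(e) > tolerance)

# Hypothetical history of (latent state, boundary vector) pairs.
SAMPLES = [(0, [1.0, 0.0, 1.0]), (0, [1.0, 0.0, 0.0]), (1, [0.0, 1.0, 1.0])]

pi_t = conditional_mean(SAMPLES, 0)
eps_t = prediction_error([1.0, 1.0, 0.5], pi_t)
print(pi_t, eps_t, count_surprising_sites(eps_t))
```

The prediction fills every boundary site, while the error is non-zero only where the incoming signal departs from it; that contrast is the dense-versus-sparse asymmetry described above.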

3.5.3 Definition: The Phenomenal State Tensor P_\theta(t)

We define the Phenomenal State Tensor P_\theta(t) as the full set of generative-model parameters that are active at time t, i.e. those deployed to project predictions through the Markov Blanket:

P_\theta(t) := \bigl\{\, K_\theta(\cdot,\, \cdot) \,\bigr\}_{\text{active}} \tag{T8-5}

That is, P_\theta(t) is the complete parameterized architecture the codec currently holds ready to generate predictions over the observable boundary states X_{\partial_R A}, evaluated independently of any single specific instantiation of the compressed latent state Z_t and action a_t. Its structural complexity is characterised naturally by the Kolmogorov complexity of this current standing parameter configuration:

C_{\text{state}}(t) := K\!\left(P_\theta(t)\right) \tag{T8-6}

where K(\cdot) denotes prefix Kolmogorov complexity. C_{\text{state}}(t) is the standing-state complexity — the number of bits of compressed structure the codec is currently holding in active deployment.
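Kolmogorov complexity is not computable, so any numerical handle on C_{\text{state}} has to be a proxy. A common one is the length of a losslessly compressed encoding, which upper-bounds K up to an additive constant that depends on the compressor. The sketch below applies that proxy to two byte strings; treating a byte string as a serialisation of P_\theta(t) is an assumption made for illustration, and zlib is an arbitrary choice of compressor.

```python
import random
import zlib

def compressed_length_bits(data):
    """Length in bits of the zlib-compressed input: a crude, computable upper-bound proxy for K."""
    return 8 * len(zlib.compress(data, 9))

# Two hypothetical 10,000-byte "parameter dumps": one highly regular, one pseudo-random.
REGULAR = b"01" * 5000
_rng = random.Random(0)
NOISY = bytes(_rng.getrandbits(8) for _ in range(10000))

print(compressed_length_bits(REGULAR), compressed_length_bits(NOISY))
```

The regular string compresses to a small fraction of its raw 80,000 bits, while the pseudo-random one does not compress at all. This only bounds the description length from above: a general-purpose compressor can miss structure that a shorter program would exploit.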

Upper bound on boundary channel flow. The mutual information between the bottleneck state and the boundary is bounded by standard Shannon inequalities [16] (cf. Eq. 8):

I\!\left(Z_t\,;\,X_{\partial_R A}\right) \leq H\!\left(X_{\partial_R A}\right) \leq |\partial_R A|\cdot \log q \tag{T8-7}

This bounds the channel flow across the Markov Blanket; the bound itself is vastly larger than B_{\max}. Important caveat: This is a bound on the Shannon-theoretic mutual information I(Z_t\,;\,X_{\partial_R A}), not a bound on the Kolmogorov complexity K(P_\theta(t)) of the standing model. Shannon entropy quantifies ensemble-average uncertainty; Kolmogorov complexity quantifies the description length of a specific computable object. No general inequality bridges these quantities without additional assumptions (e.g., a universal prior over model classes). We therefore do not claim that C_{\text{state}} \leq H(X_{\partial_R A}). The standing-state complexity C_{\text{state}} is bounded empirically (§3.10), not by the boundary entropy.

Heuristic lower bound on C_{\text{state}}. The Stability Filter directly constrains only the update rate R_{\text{req}} \leq B_{\max}, not the standing-model depth. However, a codec with insufficient structural complexity cannot generate accurate predictions \pi_t matching the statistics of a complex environment across the forward fan \mathcal{F}_h(z_t). This imposes a practical minimum on C_{\text{state}}: below some threshold, R_{\text{req}} would systematically exceed B_{\max} because the prediction errors \varepsilon_t would be persistently large. This lower bound is empirically motivated rather than formally derived — no closed-form expression C_{\text{state}} \geq f(R_{\text{req}}, \text{environment statistics}) is presently available.

3.5.4 Block’s Distinction as a Structural Corollary

The formal distinction between P_\theta(t) and Z_t maps precisely onto Ned Block’s distinction between phenomenal consciousness (P-consciousness) and access consciousness (A-consciousness) [47]:

| Block’s Category | OPT Object | Information Content | Bandwidth-Limited? |
|---|---|---|---|
| P-consciousness (qualia, felt scene) | P_\theta(t) | C_{\text{state}} = K(P_\theta(t)) \gg B_{\max} | No |
| A-consciousness (reportable content) | Z_t | B_{\max} = C_{\max} \cdot \Delta t \approx 0.5\ \text{bits} | Yes |

Under OPT, P-consciousness is the downward prediction \pi_t drawn from the full tensor P_\theta(t). A-consciousness is the bottleneck output Z_t — the thin slice of the scene that has been compressed sufficiently to enter the causal record \mathcal{R}_t and become available for report. The felt richness of a visual moment is P_\theta(t); the ability to say “I see red” requires that feature to pass through Z_t.

This corollary resolves the apparent paradox of a rich phenomenal scene sustained by a sub-bit update channel: the scene is not delivered through the channel each frame — it is already loaded in P_\theta(t). The channel updates it, incrementally and selectively, frame by frame.

3.5.5 The Update Dynamics of P_\theta(t)

The update rule for P_\theta(t) is governed by the prediction-error signal \varepsilon_t filtered through the bottleneck:

P_\theta(t+1) = \mathcal{U}\!\left(P_\theta(t),\, \varepsilon_t,\, Z_t\right) \tag{T8-8}

where \mathcal{U} is the codec’s learning operator — in Active Inference terms, the gradient step on variational free energy \mathcal{F}[q, \theta] (Eq. 9 of base paper) restricted by the capacity constraint I(X_t\,;\,Z_t) \leq B.

The key structural property is that \mathcal{U} is selective: only those regions of P_\theta(t) implicated by the current prediction error \varepsilon_t are updated. The remainder of the standing tensor is held constant across the frame. This gives the conscious moment its characteristic structure: a stable phenomenal background against which a small foreground of resolved novelty is laid.

The codec thus implements a form of sparse update on a dense prior — a design principle that maximises phenomenal coherence per unit of update bandwidth.

3.5.6 Scope and Epistemic Status

The Phenomenal State Tensor P_\theta(t) is a formal characterisation of the structural shadow the phenomenal scene must cast, consistent with the Agency Axiom (§3.8). It does not resolve the Hard Problem. OPT continues to treat phenomenal consciousness as an irreducible primitive; P_\theta(t) specifies the geometry of the container, not the nature of its contents.

The claim is structural and falsifiable in the following sense: if the qualitative richness of reported experience (as operationalised through, e.g., measures of phenomenal complexity in psychophysical tasks) correlates with codec depth — the hierarchical complexity of K_\theta as measurable via neural markers of predictive hierarchy — rather than with update bandwidth C_{\max}, then the P_\theta\,/\,Z_t distinction is empirically supported. Psychedelic states, which dramatically alter the structure of K_\theta without consistently altering behavioural throughput, represent a natural test domain.

3.6 The Codec Lifecycle: The Maintenance Cycle Operator \mathcal{M}_\tau

3.6.1 The Static Codec Problem

The framework of §§3.1–3.5 treats K_\theta and its realisation P_\theta(t) as dynamic across update frames but implicitly assumes the codec’s structural architecture — the parameter space \Theta itself — is fixed. This is adequate for a synchronic analysis of a single conscious moment, but inadequate for a theory of consciousness across deep time.

A codec operating continuously accumulates structural complexity: every learned pattern adds parameters to K_\theta, increasing C_{\text{state}}(t). Without a mechanism for controlled complexity reduction, C_{\text{state}} would grow monotonically until the codec exceeded its thermodynamic runability ceiling — the point at which the metabolic cost of maintaining P_\theta(t) exceeds the organism’s energy budget, or the internal complexity of K_\theta exceeds the Stability Filter’s capacity-compatible description length.

This section introduces the Maintenance Cycle Operator \mathcal{M}_\tau — the formal mechanism by which the codec manages its own complexity across time, operating primarily during states of reduced sensory load (paradigmatically: sleep).

3.6.2 The Maintenance Condition

Define the codec runability condition as the requirement that the Kolmogorov complexity of the current generative model remain below a structural ceiling C_{\text{ceil}} set by the organism’s thermodynamic budget:

K\!\left(P_\theta(t)\right) \leq C_{\text{ceil}} \tag{T9-1}

C_{\text{ceil}} is not the same as C_{\max}. It is a much larger quantity — the total structural complexity the codec can sustain in its parameter space — but it is finite. Violations of (T9-1) correspond to cognitive overload, memory interference, and ultimately to the pathological case described by Borges’ [53] Funes the Memorious: a system that has acquired so much uncompressed detail that it can no longer function predictively.

The Maintenance Cycle Operator \mathcal{M}_\tau is defined as acting during periods when R_{\text{req}} \ll C_{\max} — specifically, when the required predictive rate drops sufficiently that the bandwidth freed can be redirected to internal restructuring:

\mathcal{M}_\tau : P_\theta(t) \;\longrightarrow\; P_\theta(t + \tau) \qquad \text{during} \quad R_{\text{req}}(t) \ll C_{\max} \tag{T9-2}

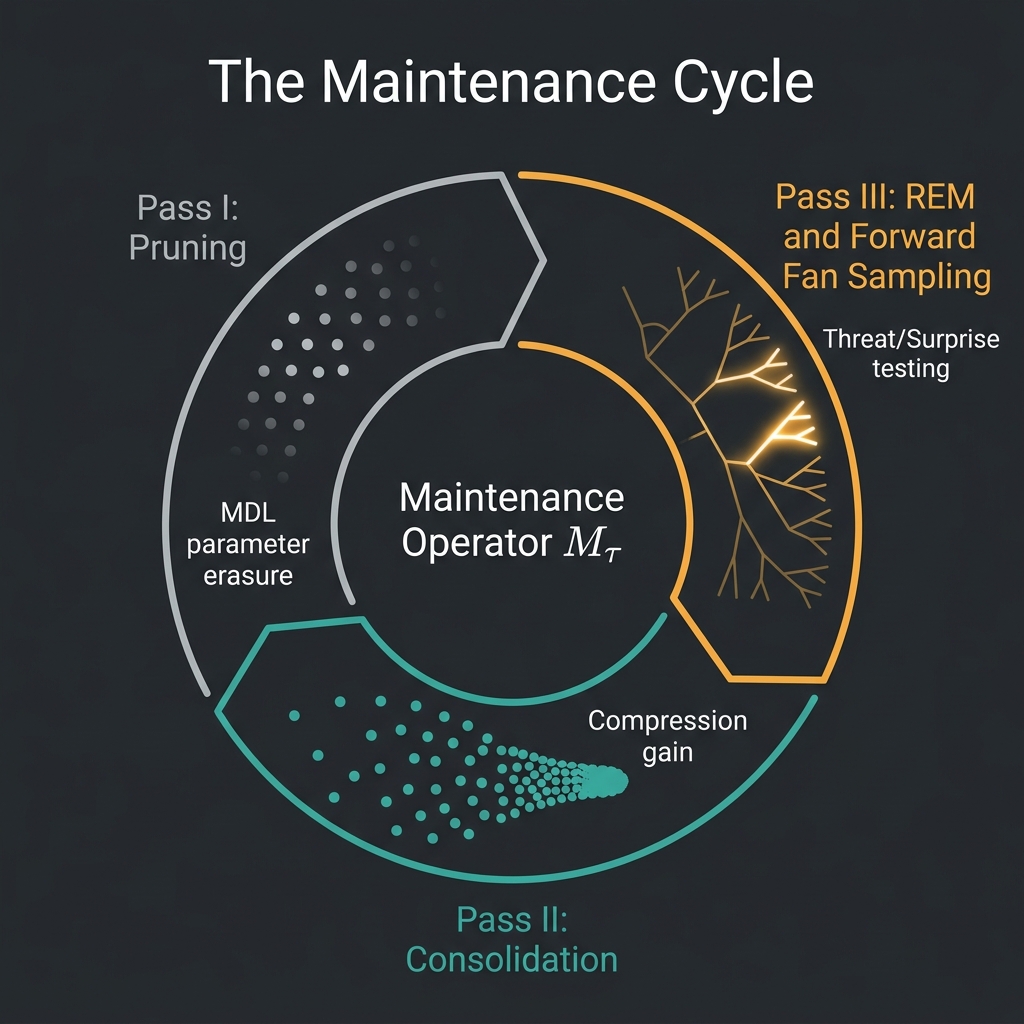

\mathcal{M}_\tau decomposes into three structurally distinct passes, each targeting a different aspect of codec complexity management.

3.6.3 Pass I — Pruning (Forgetting as Active MDL Pressure)

The first pass applies Minimum Description Length (MDL) pressure to the current codec parameters. For each component \theta_i of the generative model K_\theta, define its predictive contribution as the mutual information it provides about the future observation stream, net of the storage cost of retaining it:

\Delta_{\mathrm{MDL}}(\theta_i) := I\!\left(\theta_i\,;\,X_{t+1:t+\tau} \mid \theta_{-i}\right) - \lambda \cdot K(\theta_i) \tag{T9-3}

where \theta_{-i} denotes all parameters except \theta_i, \lambda is a retention threshold (bits of future prediction bought per bit of model complexity), and K(\theta_i) is the description length of the component.

The pruning rule is:

\text{Prune } \theta_i \quad \text{if} \quad \Delta_{\mathrm{MDL}}(\theta_i) < 0 \tag{T9-4}

That is, discard \theta_i when its predictive contribution per bit of storage falls below the threshold \lambda. This is forgetting formalised not as failure but as thermodynamically rational erasure: each pruned component recovers K(\theta_i) bits of model capacity for reuse.

By Landauer’s Principle [52], each pruning operation establishes a thermodynamic floor for erasure:

W_{\text{prune}}(\theta_i) \geq K(\theta_i) \cdot k_B T \ln 2 \tag{T9-5}

While actual biological metabolism operates many orders of magnitude above this theoretical minimum (Watts versus femtowatts) due to severe implementation overhead, the structural necessity of the cost remains. Sleep therefore carries a fundamental thermodynamic signature in OPT: it is a period of net information erasure whose energy cost is mandated by physics rather than merely biological inefficiency.
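To give a sense of scale for the Landauer floor in (T9-5), the sketch below evaluates K(\theta_i) \cdot k_B T \ln 2 for a given number of erased bits. The function name and the example figures (10^9 bits, 310 K) are illustrative assumptions, not values from the text.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def landauer_floor_joules(bits_erased: float, temperature_kelvin: float) -> float:
    """Minimum work needed to erase `bits_erased` bits at temperature T, per (T9-5)."""
    if bits_erased < 0 or temperature_kelvin <= 0:
        raise ValueError("need bits_erased >= 0 and temperature_kelvin > 0")
    return bits_erased * K_B * temperature_kelvin * math.log(2)


if __name__ == "__main__":
    # Hypothetical pruning pass: 1e9 bits erased at roughly body temperature.
    print(f"{landauer_floor_joules(1e9, 310.0):.3e} J")
```

Under these assumed inputs the floor is on the order of 3 × 10^{-12} J, which illustrates how far the theoretical minimum sits below metabolic energy scales.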

The aggregate complexity reduction of the pruning pass is:

\Delta K_{\text{prune}} = \sum_i K(\theta_i)\cdot \mathbf{1}\!\left[\Delta_{\mathrm{MDL}}(\theta_i) < 0\right] \tag{T9-6}
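The pruning pass of (T9-3), (T9-4) and (T9-6) can be sketched as follows. Because neither the conditional mutual information nor K(\theta_i) is directly computable, the sketch takes both as given numbers; the `Component` class, its field names, and the example values are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Component:
    name: str
    predictive_bits: float   # stand-in for I(theta_i ; X_{t+1:t+tau} | theta_{-i})
    description_bits: float  # stand-in for K(theta_i)


def mdl_score(c: Component, lam: float) -> float:
    """Retention score of (T9-3): predictive contribution minus weighted storage cost."""
    return c.predictive_bits - lam * c.description_bits


def prune(components: List[Component], lam: float) -> Tuple[List[Component], List[Component], float]:
    """Apply rule (T9-4) and return (kept, pruned, bits recovered as in T9-6)."""
    kept = [c for c in components if mdl_score(c, lam) >= 0]
    pruned = [c for c in components if mdl_score(c, lam) < 0]
    recovered = sum(c.description_bits for c in pruned)
    return kept, pruned, recovered


if __name__ == "__main__":
    # Hypothetical codec components, for illustration only.
    codec = [
        Component("commute_route", 12.0, 40.0),
        Component("tuesday_parking_spot", 0.5, 30.0),
        Component("friend_face", 25.0, 60.0),
    ]
    kept, pruned, recovered = prune(codec, lam=0.25)
    print([c.name for c in pruned], recovered)
```

In this toy run only the component whose predictive return falls below \lambda bits per stored bit is discarded, and its description length is what (T9-6) counts as recovered capacity.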

3.6.4 Pass II — Consolidation (Learning as Compression Gain)

The pruning pass removes components with insufficient predictive return. The consolidation pass reorganises the remaining components into more compressed representations.

During waking operation, the codec acquires patterns under real-time pressure: each update must be computed within \Delta t, leaving no time for global structural reorganisation of K_\theta. Recently acquired patterns are stored in a relatively uncompressed form — high K(\theta_{\text{new}}) for the predictive contribution they provide. The consolidation pass applies offline MDL compression to these recent acquisitions.

Let \Theta_{\text{recent}} \subset \Theta denote the set of parameters acquired since the last maintenance cycle. The consolidation operator finds the minimum-complexity reparameterisation \theta' of \Theta_{\text{recent}} such that the predictive distribution it generates is within tolerable distortion D_c of the original:

\theta'_{\text{cons}} = \arg\min_{\theta'} K(\theta') \quad \text{s.t.} \quad D_{\mathrm{KL}}\!\left(P_{\theta'}(\cdot) \,\Big\|\, P_{\Theta_{\text{recent}}}(\cdot)\right) \leq D_c \tag{T9-7}

The compression gain recovered is:

\Delta K_{\text{compress}} = K(\Theta_{\text{recent}}) - K(\theta'_{\text{cons}}) \tag{T9-8}

\Delta K_{\text{compress}} is the number of bits of model capacity recovered by reorganising recent experience into more efficient representations. Each unit of \Delta K_{\text{compress}} directly reduces the future R_{\text{req}} for similar environments — the codec becomes cheaper to run in familiar territory.
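A toy version of the constrained minimisation in (T9-7) is sketched below. Kolmogorov complexity is uncomputable, so the sketch substitutes a crude proxy: the number of bits of precision used to store each probability of a small categorical distribution. The quantisation scheme, the function names, and the requirement that all probabilities be strictly positive are assumptions of this example, not part of OPT.

```python
import math
from typing import List, Optional, Tuple


def kl_bits(q: List[float], p: List[float]) -> float:
    """D_KL(q || p) in bits; assumes every p_i > 0."""
    return sum(qi * math.log2(qi / pi) for qi, pi in zip(q, p) if qi > 0)


def quantize(p: List[float], bits: int) -> List[float]:
    """Round each probability to a grid of 2**bits levels, then renormalise."""
    levels = 2 ** bits
    counts = [max(1, round(pi * levels)) for pi in p]  # keep every outcome possible
    total = sum(counts)
    return [c / total for c in counts]


def consolidate(p_recent: List[float], max_bits: int, d_c: float) -> Optional[Tuple[int, List[float]]]:
    """Return the coarsest quantisation whose KL distortion is within d_c, else None."""
    for bits in range(1, max_bits + 1):
        q = quantize(p_recent, bits)
        if kl_bits(q, p_recent) <= d_c:
            return bits, q
    return None


if __name__ == "__main__":
    p = [0.5, 0.25, 0.125, 0.125]  # hypothetical recently acquired predictive distribution
    result = consolidate(p, max_bits=8, d_c=0.1)
    if result is not None:
        bits, q = result
        gain = (8 - bits) * len(p)  # proxy for (T9-8), assuming 8-bit storage before consolidation
        print(bits, q, gain)
```

The search order (coarsest first) plays the role of \arg\min K(\theta'), and the KL check plays the role of the distortion constraint D_c; a looser tolerance yields a coarser code and a larger proxy gain.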

This formalises the empirically observed function of hippocampal-neocortical memory consolidation during slow-wave sleep: the transfer from high-bandwidth episodic storage (hippocampus, high K) to compressed semantic storage (neocortex, low K) is precisely the compression operation of (T9-7). The prediction is that compression gain \Delta K_{\text{compress}} should correlate with the degree of behavioural improvement observed after sleep on tasks involving structured pattern recognition.

3.6.5 Pass III — Forward Fan Sampling (Dreaming as Adversarial Self-Testing)

The third pass operates primarily during REM sleep, when sensory input is actively gated and motor output is inhibited. Under these conditions, R_{\text{req}} \approx 0: the codec is receiving no correction signal from the external environment. The full bandwidth budget C_{\max} is available for internal operation.

OPT frames this state formally as unconstrained forward-fan exploration: the codec generates trajectories through \mathcal{F}_h(z_t) — the set of admissible future sequences (Eq. 5 of base paper) — without anchoring those trajectories to real incoming data. This is simulation: the codec runs its generative model K_\theta forward in time, unimpeded by reality.

The sampling distribution over the fan is not uniform. Define the importance weight of a branch b \in \mathcal{F}_h(z_t) as:

w(b) := \exp\!\left(\beta\cdot |E(b)|\right) \tag{T9-9}

where \beta is an inverse temperature parameter and E(b) is the emotional valence of the branch, defined as:

E(b) := -\log P_{K_\theta}(b \mid z_t) + \alpha \cdot \mathrm{threat}(b) \tag{T9-10}

The first term -\log P_{K_\theta}(b \mid z_t) is the negative log-probability of the branch under the current codec — its surprise value. The second term \mathrm{threat}(b) is a fitness-relevant consequence measure formally defined as the expected increase in required predictive rate if the codec were to traverse branch b:

\mathrm{threat}(b) := \mathbb{E}\!\left[\, R_{\text{req}}(D_{\min} \mid b) - R_{\text{req}}(D_{\min} \mid z_t)\,\right] \tag{T9-10a}

That is, \mathrm{threat}(b) quantifies the degree to which branch b, if realised in waking life, would push the codec toward or beyond its bandwidth ceiling B_{\max} — through physical harm, social rupture, or narrative collapse that would force costly model revision. Branches with \mathrm{threat}(b) > B_{\max} - R_{\text{req}}(D_{\min} \mid z_t) are existentially threatening: they would violate the Stability Filter condition. The weighting parameter \alpha \geq 0 controls the relative influence of consequence versus surprise in the sampling distribution.

The sampling operator draws branches proportional to w(b):

b_{\text{sample}} \sim \mathcal{F}_h(z_t) \quad \text{with probability} \propto w(b) \tag{T9-11}

This implements importance-weighted forward-fan sampling: the codec disproportionately rehearses branches that are either highly surprising or highly consequential, regardless of their base-rate probability. Low-probability, high-threat branches — precisely those for which the codec is least prepared — receive the greatest sampling attention.
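The sampling scheme of (T9-9) to (T9-11) can be sketched on a small discrete fan. The branch names, probabilities, and threat scores below are hypothetical; the natural logarithm is assumed for the surprise term, and threat(b) is supplied directly as a number instead of being computed from (T9-10a).

```python
import math
import random
from typing import Dict, Tuple


def valence(prob: float, threat: float, alpha: float) -> float:
    """E(b) of (T9-10): surprise plus weighted threat."""
    return -math.log(prob) + alpha * threat


def sampling_distribution(
    branches: Dict[str, Tuple[float, float]], alpha: float, beta: float
) -> Dict[str, float]:
    """Normalise the importance weights w(b) = exp(beta * |E(b)|) of (T9-9)."""
    weights = {
        name: math.exp(beta * abs(valence(p, threat, alpha)))
        for name, (p, threat) in branches.items()
    }
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


if __name__ == "__main__":
    # name -> (probability under the codec, assumed threat score)
    fan = {"routine_day": (0.90, 0.0), "argument": (0.09, 1.0), "accident": (0.01, 3.0)}
    dist = sampling_distribution(fan, alpha=1.0, beta=1.0)
    rng = random.Random(0)
    draws = rng.choices(list(dist), weights=list(dist.values()), k=5)
    print(dist, draws)
```

With these assumed numbers the rarest, most threatening branch dominates the sampling distribution even though its base-rate probability is the smallest, which is the qualitative behaviour the text describes.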

Each sampled branch is then evaluated for coherence under K_\theta. Branches that generate incoherent prediction sequences — where the codec’s own generative model cannot maintain narrative stability — are identified as brittleness points: regions of the forward fan where the codec would fail if the branch were encountered in waking life. The codec can then update P_\theta to reduce K_\theta’s vulnerability at those points, before being exposed to them with real thermodynamic stakes.

Dreaming is therefore adversarial self-testing of the codec at zero risk. The functional consequence is a codec that is systematically better prepared for the low-probability, high-consequence branches of its own forward fan. This OPT framing provides an information-theoretic grounding for Revonsuo’s [46] threat-simulation theory of dreaming, extending it from an evolutionary-functional account to a formal structural necessity: any codec operating under the Stability Filter must periodically stress-test its own forward fan, and the offline maintenance state is the only period when this can be done without real-world thermodynamic cost.

Emotional tagging as a retention weight prior. The emotional valence E(b) computed during REM sampling carries over into the waking state as a prior retention weight biasing the MDL threshold \lambda in (T9-3). Experiences with high |E(b)|, whether strongly surprising or strongly consequential, are assigned a lower effective \lambda. Because a component is pruned only when its predictive contribution minus \lambda \cdot K(\theta_i) is negative, lowering \lambda raises \Delta_{\mathrm{MDL}}(\theta_i) and makes these components more resistant to pruning in the next maintenance cycle. This is the formal account of emotional memory enhancement: affect is not noise contaminating the memory system; it is the codec’s relevance signal, marking patterns whose predictive value exceeds their base-rate statistical frequency.

3.6.6 The Full Maintenance Cycle and Net Complexity Budget

The three passes of \mathcal{M}_\tau compose sequentially. The net effect on codec complexity across one maintenance cycle of duration \tau is:

K\!\left(P_\theta(t+\tau)\right) = K\!\left(P_\theta(t)\right) - \Delta K_{\text{prune}} - \Delta K_{\text{compress}} + \Delta K_{\text{REM}} \tag{T9-12}

where \Delta K_{\text{REM}} is the small positive increment from patterns newly consolidated from the REM sampling pass — those brittleness-point repairs that required new parameter updates.

For a stable cognitive system operating across years, the long-run budget requires:

\left\langle \Delta K_{\text{prune}} + \Delta K_{\text{compress}} \right\rangle \geq \left\langle \Delta K_{\text{waking}} + \Delta K_{\text{REM}} \right\rangle \tag{T9-13}

where \Delta K_{\text{waking}} is the complexity acquired during the preceding waking period. Inequality (T9-13) is the formal statement that maintenance must keep pace with acquisition. Chronic sleep deprivation, in OPT terms, is not merely fatigue — it is progressive complexity overflow: the codec approaches C_{\text{ceil}} while its pruning and consolidation budget is insufficient to restore headroom.
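The budget in (T9-12) and (T9-13) can be illustrated with a minimal simulation that alternates waking acquisition with a maintenance pass and reports the first cycle at which the ceiling is exceeded. All quantities are treated as constant per-cycle bit counts, which is a simplifying assumption; the function name and the numbers in the example are hypothetical.

```python
from typing import Optional


def first_overflow_cycle(
    k0: int, c_ceil: int, waking_gain: int,
    prune: int, compress: int, rem_gain: int, cycles: int,
) -> Optional[int]:
    """Return the first cycle (1-indexed) where complexity exceeds c_ceil, else None."""
    k = k0
    for cycle in range(1, cycles + 1):
        k += waking_gain  # waking acquisition
        if k > c_ceil:    # runability condition (T9-1) violated
            return cycle
        # Maintenance pass, following (T9-12); complexity cannot go negative.
        k = max(0, k - prune - compress + rem_gain)
    return None


if __name__ == "__main__":
    # Balanced budget: maintenance matches acquisition, so (T9-13) holds with equality.
    print(first_overflow_cycle(1000, 2000, 100, 60, 45, 5, cycles=365))
    # Curtailed maintenance: pruning and consolidation are cut roughly in half.
    print(first_overflow_cycle(1000, 2000, 100, 30, 20, 5, cycles=365))
```

When maintenance matches acquisition the trajectory stays flat indefinitely; when it falls short, the per-cycle surplus accumulates linearly until the ceiling is crossed, which is the "progressive complexity overflow" reading of sleep deprivation given above.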

3.6.7 Empirical Predictions

The Maintenance Cycle framework generates the following testable structural expectations:

Sleep duration scales with codec complexity. Organisms or individuals who acquire more structured information during waking periods should require proportionally longer or deeper maintenance cycles. The prediction is not simply that hard cognitive work requires more sleep (which is established), but that the type of learning matters: pattern-rich, compressible learning should require less consolidation time than unstructured, high-entropy experience, because \Delta K_{\text{compress}} is larger in the former case.

REM content is importance-weighted over the forward fan, not frequency-weighted. Dream content should disproportionately sample low-probability, high-consequence branches relative to their waking frequency. This is consistent with the empirical predominance of threat, social conflict, and novel-environment content in dream reports — the codec samples what it needs to stress-test, not what it most often encounters.

Compression efficiency improves post-sleep proportional to \Delta K_{\text{compress}}. The specific prediction is that post-sleep performance improvements should be largest on tasks requiring structural generalisation (i.e., applying a compressed rule to new instances) rather than simple repetition — because \Delta K_{\text{compress}} specifically reorganises \Theta_{\text{recent}} into more generalisable forms.

Pathological rumination corresponds to REM sampling stuck at high-|E| branches. If the importance-weighting parameter \beta is pathologically elevated, the sampling distribution over \mathcal{F}_h(z_t) concentrates on high-threat branches to the exclusion of repair. The codec spends its maintenance cycle repeatedly sampling the same threatening branches without successfully reducing their surprise value — the formal structure of anxiety and PTSD nightmares.

3.6.8 Relationship to the Phenomenal State Tensor

\mathcal{M}_\tau acts on P_\theta(t) as defined in §3.5: it restructures the standing-state complexity C_{\text{state}} across the maintenance window. The temporal profile of P_\theta(t) under \mathcal{M}_\tau is:

- Waking acquisition: C_{\text{state}} increases at rate bounded by the learning operator \mathcal{U} (Eq. T8-8), as new patterns are incorporated into K_\theta.

- Slow-wave sleep (Passes I–II): C_{\text{state}} decreases as pruning and consolidation recover model capacity.

- REM (Pass III): C_{\text{state}} undergoes selective local increase at brittleness points, with net effect small relative to the reductions of Passes I–II.

The conscious experience corresponding to each phase is consistent with this structure: waking life accumulates the richness of P_\theta(t); slow-wave sleep is phenomenally sparse or absent (consistent with minimal P_\theta(t) activation during structural reorganisation); REM presents a phenomenally vivid but internally generated scene (Pass III running the full generative model forward in the absence of sensory correction).

Summary: New Formal Objects Introduced

| Symbol | Name | Definition | Equation |
|---|---|---|---|
| P_\theta(t) | Phenomenal State Tensor | Full activation of K_\theta at time t, projected through \partial_R A | T8-5 |
| C_{\text{state}}(t) | Standing-state complexity | K(P_\theta(t)), Kolmogorov complexity of active codec | T8-6 |
| \pi_t | Downward prediction | \mathbb{E}_{K_\theta}[X_{\partial_R A}(t) \mid Z_t], the rendered scene | T8-2 |
| \varepsilon_t | Prediction error (upward) | X_{\partial_R A}(t) - \pi_t, novelty signal bounded by C_{\max} | T8-3 |
| \mathcal{M}_\tau | Maintenance Cycle Operator | P_\theta(t) \to P_\theta(t+\tau) under low R_{\text{req}} | T9-2 |
| \Delta_{\mathrm{MDL}}(\theta_i) | MDL retention score | Predictive contribution minus storage cost | T9-3 |
| E(b) | Branch emotional valence | Surprise plus weighted threat of branch b | T9-10 |
| w(b) | Branch importance weight | \exp(\beta \cdot \lvert E(b) \rvert), drives REM sampling distribution | T9-9 |
| \Delta K_{\text{prune}} | Pruning complexity recovery | Bits recovered by forgetting below-threshold components | T9-6 |
| \Delta K_{\text{compress}} | Consolidation compression gain | Bits recovered by MDL recompression of recent acquisitions | T9-8 |

3.7 The Tensor-Network Mapping: Inducing Geometry from Code Distance

The Epistemic Ladder introduced in §3.4 establishes a rigorous Classical Boundary Law (S_{\mathrm{cut}} \sim |\partial_R A|). However, to connect the Ordered Patch Theory to the geometrization of quantum information (e.g., AdS/CFT and the Ryu-Takayanagi formula), we must formally upgrade the structure of the latent code Z_t.

If we postulate that the bottleneck mapping q^\star(z \mid X_t) does not simply extract a flat list of features, but operates via a recursive, coarse-graining renormalization group flow, the generative model aligns structurally with the geometry of a hierarchical tensor network \mathcal{T} (akin to MERA [43] or HaPPY networks [44]). (Remark: Appendix T-3 formally derives a structural homomorphic correspondence between the Stability Filter’s coarse-graining cascade and the MERA network geometry, mapping the Informational Causal Cone to the equivalent MERA causal cone.) The boundary states of this network are precisely the screened Markov boundary states X_{\partial_R A}. The network \mathcal{T} acts as a bulk geometry whose “depth” represents the layers of computational coarse-graining required to compress the boundary into the minimal bottleneck state Z_t.

Under this tensor-network upgrade, the predictive cut entropy S_{\mathrm{cut}}(A) across the boundary transforms mathematically into the minimum number of tensor bonds that must be severed to isolate the subregion A. Let \chi be the bond dimension of the network. The capacity bound internally maps as:

S_{\mathrm{cut}}(A) \le |\gamma_A| \log \chi \tag{11}

where \gamma_A is the minimal-cut surface through the bulk of \mathcal{T}. This is a discrete structural analogue of the bulk minimal surface in the Ryu-Takayanagi holographic entropy formula. Appendix P-2 (Theorem P-2d) formally establishes the discrete quantum RT bound S_{\text{vN}}(\rho_A) \leq |\gamma_A| \log \chi via the Schmidt rank of the MERA state, conditional on the local noise model and QECC embedding derived therein. The continuum limit upgrading this to the full Ryu-Takayanagi formula with bulk correction term remains an open edge.
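To make the minimal-cut bound of Eq. (11) concrete, the sketch below counts the fewest bonds that must be severed to separate a set of boundary sites from the rest in a perfect binary tree, then multiplies by \log_2 \chi. A plain tree is a deliberate simplification: it omits the disentanglers of a true MERA, so this is an illustration of the counting argument only. The function names and the example inputs are assumptions.

```python
import math
from typing import List, Tuple

INF = float("inf")


def tree_cut_costs(labels: List[bool]) -> Tuple[float, float]:
    """Return (cost if this subtree's root sits on the A side, cost if on the other side).

    `labels` marks which leaves belong to region A; its length must be a power of two.
    Each parent-child bond whose endpoints sit on different sides costs one cut.
    """
    if len(labels) == 1:
        return (0, INF) if labels[0] else (INF, 0)
    mid = len(labels) // 2
    total_a, total_b = 0, 0
    for child in (labels[:mid], labels[mid:]):
        cost_a, cost_b = tree_cut_costs(child)
        total_a += min(cost_a, cost_b + 1)
        total_b += min(cost_b, cost_a + 1)
    return total_a, total_b


def min_cut_bonds(labels: List[bool]) -> float:
    """Size of the minimal cut separating region A from its complement."""
    return min(tree_cut_costs(labels))


def cut_entropy_bound_bits(labels: List[bool], chi: int) -> float:
    """Right-hand side of Eq. (11) in bits, using base-2 logarithms."""
    return min_cut_bonds(labels) * math.log2(chi)


if __name__ == "__main__":
    half = [True] * 4 + [False] * 4  # region A = left half of an 8-site boundary
    print(min_cut_bonds(half), cut_entropy_bound_bits(half, chi=4))
```

In this toy geometry a region aligned with the tree's coarse-graining structure is isolated by a single deep bond, whereas a misaligned region of the same size may require more cuts.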

Crucially, in OPT, this “bulk space” is not a pre-existing physical container. It is the strictly informational metric space of the observer’s codec. The emergent phenomenological spacetime geometry “curves” precisely where the required code distance diverges to resolve overlapping internal causal states. This Tensor-Network formalism illustrates a formal path by which OPT might induce spatial geometry directly from the error-correction distances intrinsically mandated by the Stability Filter, offering a constructive conjecture that holographic spacetime models optimal data-compression formats.

3.8 The Agency Axiom & The Phenomenal Residual

The mathematical apparatus developed in Sections 3.1–3.7 precisely defines the geometry of the observer’s reality—the tensor network, the predictive cut, and the causal cone. However, what is the nature of the primitive interiority that experiences the passage through it? We formally define this via the Agency Axiom: the traversal of the C_{\max} aperture is intrinsically a phenomenological event.

While we take the presence of subjective feeling as axiomatic, Theorem P-4 (The Phenomenal Residual) identifies its rigorous structural correlate. Because the bounded codec actively perturbs the boundary \partial_R A, stable prediction within C_{\max} limits requires it to model the consequences of its own future actions. Thus, the codec K_{\theta} must maintain a predictive self-model \hat{K}_{\theta}. However, by the algorithmic bounds of informational containment [13], a finite computational system cannot contain a complete structural representation of itself; the internal model is rigidly bounded to a lower complexity than the parent codec (K(\hat{K}_{\theta}) < K(K_{\theta})).

This necessitates an irreducible Phenomenal Residual (\Delta_{\text{self}} > 0). This un-modellable residual acts as the computational “blind spot” within the active inference cycle. Because it lies in the informational shadow beyond the computational reach of the self-model, it is inherently ineffable; because it exists as the localized delta between a specific codec and its model, it is computationally private; and because it is dictated by fundamental limits on self-reference and necessary variational approximation, it is non-eliminable. The topological narrowing at the C_{\max} aperture is intrinsically correlated with the mathematical necessity of an incomplete algorithm encountering its own limits. The math describes the formal contour of the experience, and the Agency Axiom asserts that this residual locus constitutes the subjective “I”. (See Appendix P-4 for the formal derivation.)

The Informational Maintenance Circuit

Within a single update frame [t, t+\Delta t], the observer executes the following closed causal circuit:

P_\theta(t) \;\xrightarrow{\ \pi_t\ }\; \partial_R A \;\xrightarrow{\ \varepsilon_t\ }\; Z_t \;\xrightarrow{\ \mathcal{U}\ }\; P_\theta(t+1) \tag{T6-1}

Explicitly:

1. Prediction (downward): The current tensor P_\theta(t) generates the predicted boundary state \pi_t = \mathbb{E}_{K_\theta}[X_{\partial_R A}(t) \mid Z_t], the rendered scene.

2. Error (upward): The actual boundary state X_{\partial_R A}(t) arrives; the prediction error \varepsilon_t = X_{\partial_R A}(t) - \pi_t is computed.

3. Compression: \varepsilon_t is passed through the bottleneck to yield Z_t, the capacity-limited update token, with I(\varepsilon_t\,;\,Z_t) \leq B_{\max}.

4. Update: The learning operator \mathcal{U}(P_\theta(t), \varepsilon_t, Z_t) revises P_\theta(t+1), selectively modifying only those regions of the tensor implicated by \varepsilon_t.

5. Action: Simultaneously, P_\theta(t) selects action a_t via active inference descent on the variational free energy \mathcal{F}[q,\theta] (Eq. 9 of base paper), which alters the sensory boundary at t+1, influencing the next \varepsilon_{t+1}.
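The prediction, error, compression, and update steps of (T6-1) can be sketched on a toy vector state. Several simplifications are assumed here and are not part of OPT: the state is a plain list of numbers, the prediction is the state itself, the capacity constraint is replaced by a hard limit of `k_max` transmitted error components, and the action step is omitted.

```python
from typing import Dict, List, Tuple


def run_frame(
    state: List[float], boundary: List[float], k_max: int, learning_rate: float
) -> Tuple[List[float], Dict[int, float]]:
    """One toy pass of predict -> error -> compress -> update."""
    # Prediction: render the expected boundary (identity rendering, an assumption).
    prediction = list(state)
    # Error: compare the arriving boundary state with the prediction.
    error = [x - p for x, p in zip(boundary, prediction)]
    # Compression: keep only the k_max largest-magnitude error components.
    ranked = sorted(range(len(error)), key=lambda i: abs(error[i]), reverse=True)
    token = {i: error[i] for i in ranked[:k_max] if error[i] != 0.0}
    # Update: modify only the regions named in the token; the rest is held fixed.
    new_state = list(state)
    for i, e in token.items():
        new_state[i] += learning_rate * e
    return new_state, token


if __name__ == "__main__":
    state = [0.0, 0.0, 0.0, 0.0]
    boundary = [1.0, 0.0, -3.0, 0.5]  # hypothetical sensory input
    print(run_frame(state, boundary, k_max=1, learning_rate=0.5))
```

The hard top-k limit is only a stand-in for the mutual-information constraint; it is chosen because it makes the selectivity of the update visible in a few lines.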

Interpretive note on the action step. The language of step 5 — “selects action” and “alters the sensory boundary” — is inherited from the Free Energy Principle’s standard active inference formalism, which assumes a physical environment that the agent pushes against via active states. Under OPT’s native render ontology (§8.6), a deeper reading applies: there is no independent external world against which the codec exerts force. What is experienced as “action” is a branch selection within the Forward Fan \mathcal{F}_h(z_t); the physical consequences of that selection arrive as subsequent input \varepsilon_{t+1}. The Markov blanket \partial_R A is not a two-way physical interface but the surface across which the selected branch delivers its next segment. This interpretive shift changes nothing in the mathematics of (T6-1)–(T6-3); it clarifies the ontological status of the action step within OPT’s framework. The mechanism of branch selection itself is addressed below.

This is the within-frame informational maintenance circuit: a closed causal mechanism in which the system’s internal model generates a prediction of the boundary state, reads the error, and selectively updates itself. The loop is strictly informational and self-referential in the formal sense: P_\theta(t) determines both the prediction \pi_t and, via action a_t, a component of the next boundary input X_{\partial_R A}(t+1). (Note: this statistical screening layer is defined by informational Markov boundaries, and so differs from biological autopoiesis, in which cells physically manufacture their own material components.)

The Structural Viability Condition

The circuit (T6-1) is structurally viable if and only if it can sustain itself without the codec’s informational complexity exceeding its local runability limits. Formally:

K\!\left(P_\theta(t)\right) \leq C_{\text{ceil}} \quad \forall\, t \tag{T6-2}

where C_{\text{ceil}} is a heuristic parameter bounding the maximum structural complexity the codec can sustain. In principle, C_{\text{ceil}} should be derivable from the organism’s thermodynamic budget via Landauer’s principle (see the sketch in §3.10), but the full derivation chain — from metabolic power to erasure cost to maximum sustainable program complexity — is not yet formalised within OPT. C_{\text{ceil}} therefore remains an empirically motivated but formally underdetermined bound. A system satisfying (T6-2) operates as a structurally closed observer in OPT’s formal sense.

When (T6-2) is violated, that is, when K(P_\theta(t)) reaches and then exceeds C_{\text{ceil}}, the codec cannot maintain stable predictions across \mathcal{F}_h(z_t), R_{\text{req}} begins to exceed B_{\max}, and the Stability Filter condition fails. Narrative coherence collapses: the observer exits the set of observer-compatible streams.

The Maintenance Cycle \mathcal{M}_\tau (§3.6) is the mechanism that enforces (T6-2) over deep time, keeping K(P_\theta) within bounds via pruning, consolidation, and forward-fan stress-testing. Within-frame, (T6-2) is maintained by the selectivity of \mathcal{U}: the update operator modifies only the regions of P_\theta(t) implicated by \varepsilon_t, avoiding gratuitous complexity growth per frame.

Agency as Constrained Free Energy Minimisation

Within this structure, agency can be given a precise formal definition that is compatible with — but not reductive of — the Agency Axiom.

At the systems level, agency is the selection of action sequence \{a_t\} that minimises expected variational free energy subject to the informational viability condition:

a_t^\star = \arg\min_{a_t} \;\mathbb{E}\!\left[\mathcal{F}[q, \theta]\right] \quad \text{subject to} \quad K\!\left(P_\theta(t)\right) \leq C_{\text{ceil}} \tag{T6-3}

This is constrained active inference: the observer navigates the forward fan \mathcal{F}_h(z_t) not merely to minimise prediction error, but to minimise prediction error while keeping the codec viable. Branches that would temporarily reduce \varepsilon but drive K(P_\theta) toward C_{\text{ceil}} are penalised by the constraint. The observer preferentially selects branches along which it can continue to exist as a coherent observer.
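A minimal sketch of the constrained selection in (T6-3) follows. It assumes a finite menu of candidate actions, each annotated with an expected free energy and with the codec complexity projected to result from taking it; the projection, the function name, and the example values are hypothetical.

```python
from typing import Dict, Optional, Tuple


def select_action(
    candidates: Dict[str, Tuple[float, float]], c_ceil: float
) -> Optional[str]:
    """Pick the viable action with the lowest expected free energy.

    `candidates` maps action name -> (expected free energy, projected complexity).
    Returns None when no candidate satisfies the viability constraint.
    """
    viable = {
        name: free_energy
        for name, (free_energy, complexity) in candidates.items()
        if complexity <= c_ceil
    }
    if not viable:
        return None
    return min(viable, key=viable.get)


if __name__ == "__main__":
    menu = {
        "explore": (1.0, 1200.0),  # lowest free energy, but would breach the ceiling
        "exploit": (2.0, 800.0),
        "rest": (3.0, 700.0),
    }
    print(select_action(menu, c_ceil=1000.0))
```

The unconstrained minimiser is excluded here because it would breach the ceiling, which mirrors the remark above that branches reducing \varepsilon at the cost of viability are penalised.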

This is the formal content of the intuition that agency is self-preserving navigation: the codec selects the branches of the forward fan along which it can continue to compress the world.

At the phenomenological level, the Agency Axiom remains untouched: phenomenal consciousness is the irreducible interiority of aperture-traversal; (T6-3) describes the structural shadow that traversal casts, not its inner nature.

Branch Selection as \Delta_{\text{self}} Execution

The constrained active inference formula (T6-3) specifies the objective of branch selection: minimise expected free energy subject to viability. The self-model \hat{K}_\theta evaluates branches of the Forward Fan by simulating their consequences. But Theorem P-4 establishes that K(\hat{K}_\theta) < K(K_\theta) — the self-model is necessarily incomplete. This incompleteness has a direct consequence for the branch selection problem: the self-model constrains the region from which selection can be drawn, but cannot fully specify the selection itself.

The actual moment of branch selection — the transition from the evaluated menu to the singular trajectory that enters the causal record — occurs in \Delta_{\text{self}}, the informational residual between the codec and its self-model. This is not a gap in the formalism; it is a structural necessity. Any attempt to fully specify the selection mechanism from within would require K(\hat{K}_\theta) = K(K_\theta), which P-4 proves is impossible for any finite self-referential system.

This has five immediate consequences:

Will and consciousness share the same structural address. The Hard Problem (why does traversal feel like something?) and the branch selection problem (what selects?) both point to \Delta_{\text{self}}. They are not two mysteries but two aspects of the same structural feature — the unmodelable gap between what the codec is and what it can model about itself.

The irreducibility of agency is explained, not merely asserted. The phenomenological experience of will — the irreducible sense that I chose — is the first-person signature of a process executing in the observer’s own blind spot. Any theory claiming to fully specify the selection mechanism has either eliminated \Delta_{\text{self}} (making the system a fully self-transparent automaton, which P-4 forbids) or is describing the self-model’s evaluation of branches and mistaking it for the selection itself.

Creativity as expanded \Delta_{\text{self}}. Near-threshold operation (R_{\text{req}} \to C_{\max}) strains the self-model’s capacity, effectively expanding the region of \Delta_{\text{self}} from which selection is drawn. This produces branch selections that are less predictable from the self-model’s perspective — experienced as creative insight, spontaneity, or “flow.” Conversely, the hypnagogic state (§3.6.5) relaxes the self-model from below, achieving the same expansion by a complementary route.

The self as residual. The experienced self — the continuous narrative of “who I am,” with stable preferences, a history, and a projected future — is \hat{K}_\theta’s running model of K_\theta: a compressed approximation that is always behind the codec it models (by the temporal lag inherent in self-reference). But the actual locus of experience, selection, and identity is \Delta_{\text{self}}: the part of the codec the narrative cannot reach. The self you know is your model of yourself; the self that knows is the gap the model cannot cross. This is the formal content of the contemplative discovery — across traditions, independently — that the ordinary sense of self is constructed and that beneath it is something that cannot be found as an object (see Appendix T-13, Corollary T-13c).

Deliberation is real but incomplete. The self-model’s evaluation of the Forward Fan is a genuine computational process that shapes the outcome. Deliberation constrains the basin of attraction within which \Delta_{\text{self}} operates: a more developed codec narrows the viable branches that selection can land on. But the final transition — why this branch rather than that one, among the viable set — is structurally opaque to the deliberating self. This is why deliberation feels both causally efficacious and phenomenologically incomplete: the observer correctly senses that its reasoning matters, but also correctly senses that something beyond the reasoning finalises the choice.
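The division of labour described above can be pictured with a small sketch. It is purely illustrative and is not the formalism of (T6-3): the `Branch` fields, the `deliberate` function, and the `tolerance` parameter are hypothetical names introduced here. The self-model filters the Forward Fan by a viability ceiling and ranks what remains by expected free energy, but it deliberately returns a menu rather than a single choice, since the text places the final transition outside what the self-model can specify.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Branch:
    name: str
    expected_free_energy: float  # hypothetical score; lower is better
    model_complexity: float      # hypothetical codec complexity on this branch, in bits


def deliberate(branches, c_ceil, tolerance):
    """Return the menu of viable branches within `tolerance` of the best score.

    This models only the self-model's evaluation. It stops at the menu and
    does not select a final branch.
    """
    # Viability constraint: discard branches whose complexity exceeds the ceiling.
    viable = [b for b in branches if b.model_complexity <= c_ceil]
    if not viable:
        return []
    best = min(b.expected_free_energy for b in viable)
    near_best = [b for b in viable if b.expected_free_energy - best <= tolerance]
    return sorted(near_best, key=lambda b: b.expected_free_energy)


fan = [
    Branch("a", 1.0, 50.0),
    Branch("b", 1.1, 80.0),
    Branch("c", 0.2, 500.0),  # lowest score, but violates the viability ceiling
    Branch("d", 3.0, 40.0),   # viable, but far from the best score
]
menu = deliberate(fan, c_ceil=100.0, tolerance=0.5)
print([b.name for b in menu])
```

Tightening `tolerance` narrows the menu, which is one way to read the claim that a more developed codec narrows the viable branches selection can land on.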

The Strange Loop as Formal Closure

The self-referential structure of (T6-1) instantiates Hofstadter’s [45] Strange Loop in a precise information-theoretic form. The loop is strange in the following sense: P_\theta(t) contains, as a substructure, a model of the codec’s own future states — the forward-fan sampling of Pass III (\mathcal{M}_\tau, §3.6.5) is precisely the codec running a simulation of itself encountering future branches. The system models its own model.

The formal closure this provides: the informationally closed observer is not merely a system that maintains a boundary against external noise; it is a system whose boundary-maintenance is partly constituted by its model of what that boundary needs to be in the future. The strange loop is not an optional add-on to the framework; it is the structural mechanism by which the viability condition (T6-2) is enforced proactively rather than reactively. An observer that could not simulate its own future codec states could not prepare for the brittleness points identified in Pass III, and would be systematically more vulnerable to narrative collapse.

The structural requirements of (T6-1)–(T6-3) function as necessary preconditions for self-referential closure. While simple forward prediction (e.g., a chess engine’s look-ahead) constitutes planning rather than genuine self-reference, the OPT codec goes further: P_\theta(t) contains a sub-model whose output modifies the distributions governing its own future states \{P_\theta(t+h)\}_{h>0}. This structural self-modeling is functionally necessary for long-run stability — a codec unable to anticipate its own approaching viability limits cannot prepare for the brittleness points identified in Pass III (§3.6.5), and will systematically collapse against the (T6-2) ceiling in non-stationary environments.

Epistemic Scope: Formally Scoping Agency Reductionism

This formalisation delineates what OPT achieves at the systems level: it identifies the structural conditions an observer must satisfy to maintain boundary viability. It thereby formally scopes the Agency Reductionism Problem without claiming to resolve it.

The scoping is genuine, not definitional. The systems-level description (T6-1)–(T6-3) exhaustively characterises the structural shadow of agency — the information-theoretic constraints any boundary-maintaining observer must satisfy. The Agency Axiom occupies the complementary domain: phenomenal consciousness is the irreducible interiority of aperture-traversal, and the formalisation above describes only the shape of the container, not the nature of what it contains. The Hard Problem is thereby located at a precise structural locus (the C_{\max} aperture) rather than dissolved or declared solved.

3.9 Free Will and the Phenomenological Menu

The isolation of the traversal mechanism fundamentally clarifies the nature of agency. In the Active Inference loop (Equation 9), the observer must execute a policy sequence \{a_t\}. Under reductive physicalism, the selection of the action a_t is determined (or randomly sampled) by the underlying physics, rendering free will an illusion or a mere linguistic redefinition.

OPT reverses this dependency. Because the localized “physics” of the patch is merely the generative model’s predictive estimation of the substrate, the physical laws only constrain the Forward Fan \mathcal{F}_h(z_t) to a set of macroscopic probabilities. Crucially, unless the patch is a perfectly predictable automaton (which violates the thermodynamic requirement for generative structural complexity), the Forward Fan contains genuine, unresolved branch multiplicity from the observer’s limited perspective.

Since the descriptive physics merely outlines the menu of these valid branches, it cannot logically experience the selection. On the compatibilist reading developed further in §8.6, the branch path is mathematically fixed in the timeless substrate; selection is the phenomenological experience of traversal. From the third-person perspective (the outside geometry), branch-selection appears as spontaneous noise, quantum collapse, or statistical fluctuation. From the first-person internal perspective, the boundaries of uncertainty guarantee that the traversal is experienced as the exertion of Will—the primitive action of navigating the uncompressed frontier. In OPT, free will is not a contra-causal breach of physical law; it is the necessary phenomenological openness experienced by a bounded observer collapsing a formal menu into a singular rendered timeline.

The render-ontology sharpening. Under OPT’s native ontology (§8.6), the distinction between perception and action dissolves at the substrate level. What is experienced as “output” — reaching, deciding, choosing — is stream content that the codec is navigating. The codec does not act on the world; it traverses a branch of \mathcal{F}_h(z_t) in which the experience of acting is part of what arrives at the boundary. What the Free Energy Principle calls active states — the outward flow modifying the environment — are, in OPT’s render ontology, the codec’s branch selection expressing itself as subsequent input content. The Markov blanket is the surface across which the selected branch delivers its next segment, not a membrane through which the observer pushes against an external reality. This sharpens the compatibilist account: there is no distinction between perceived and willed at the substrate level; both are stream content; the phenomenological distinction arises from how P_\theta(t) tags certain content as “self-initiated” — a tagging whose mechanism, like all branch selection, ultimately executes in \Delta_{\text{self}} (§3.8).

3.10 The Informational Cost of the Render and the Three-Level Bound Gap

The defining mathematical boundary of the Ordered Patch Theory is the formal comparison of informational generating costs.

Let U_{\text{obj}} be the full informational state of an objective universe. The Kolmogorov complexity K(U_{\text{obj}}) is astronomically high. Let S_{\text{obs}} be the localized, low-bandwidth stream experienced by an observer (strictly bounded by the \mathcal{O}(10) bits/s threshold). In OPT, the universe U_{\text{obj}} does not exist as a rendered computational object. The apparent “objective universe” is instead the internal Generative Model constructed by Active Inference.

The Bekenstein Bound for a Biologically Realistic Observer

The Bekenstein bound [40] gives the maximum thermodynamic entropy — equivalently, the maximum information content — of any physical system bounded by radius R with total energy E:

S_{\text{Bek}} \leq \frac{2\pi R E}{\hbar c} \tag{T7-1}

For a human brain as the observer’s Markov Blanket boundary \partial_R A:

- Bounding radius: R \approx 0.07\ \text{m}

- Total rest-mass energy: E = m c^2 \approx 1.4\ \text{kg} \times (3 \times 10^8\ \text{m/s})^2 = 1.26 \times 10^{17}\ \text{J}

- Reduced Planck constant: \hbar = 1.055 \times 10^{-34}\ \text{J}\cdot\text{s}

- Speed of light: c = 3 \times 10^8\ \text{m/s}

Substituting:

S_{\text{Bek}} = \frac{2\pi \times 0.07 \times 1.26 \times 10^{17}}{1.055 \times 10^{-34} \times 3 \times 10^8} = \frac{5.54 \times 10^{16}}{3.17 \times 10^{-26}} \approx 1.75 \times 10^{42}\ \text{nats} \tag{T7-2}

Converting to bits (dividing by \ln 2):

S_{\text{Bek}} \approx 2.52 \times 10^{42}\ \text{bits} \tag{T7-3}
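The substitution in (T7-1)–(T7-3) can be checked numerically. The sketch below uses the rounded constants quoted in the list above; the function names are illustrative and not part of the framework.

```python
import math

HBAR = 1.055e-34  # reduced Planck constant, J*s (value used in the text)
C = 3.0e8         # speed of light, m/s (rounded, as in the text)


def bekenstein_nats(radius_m: float, energy_j: float) -> float:
    """Bekenstein bound 2*pi*R*E / (hbar*c), in nats (Eq. T7-1)."""
    return 2.0 * math.pi * radius_m * energy_j / (HBAR * C)


def nats_to_bits(nats: float) -> float:
    """Convert nats to bits by dividing by ln 2."""
    return nats / math.log(2.0)


radius = 0.07          # bounding radius, m
mass = 1.4             # brain mass, kg
energy = mass * C**2   # total rest-mass energy, J

s_nats = bekenstein_nats(radius, energy)
s_bits = nats_to_bits(s_nats)

print(f"E     = {energy:.3e} J")
print(f"S_Bek = {s_nats:.3e} nats = {s_bits:.3e} bits")
```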

The holographic area bound, S \leq A / 4l_P^2, yields a larger figure. For a sphere of radius R = 0.07\ \text{m}, surface area A = 4\pi R^2 \approx 0.062\ \text{m}^2, and Planck length l_P = 1.616 \times 10^{-35}\ \text{m}:

S_{\text{holo}} = \frac{0.062}{4 \times (1.616 \times 10^{-35})^2} = \frac{0.062}{1.044 \times 10^{-69}} \approx 5.9 \times 10^{67}\ \text{nats} \approx 8.5 \times 10^{67}\ \text{bits} \tag{T7-4}

We adopt S_{\text{phys}} \approx 2.5 \times 10^{42}\ \text{bits} (Eq. T7-3) for the remainder of this analysis. Using the total rest-mass energy E = mc^2 makes this an extreme upper limit: restricting E to the chemical energy available to internal biological processes (\sim 10–100\ \text{J}) lowers the Bekenstein limit to roughly 10^{26}–10^{27} bits. The qualitative gap argument developed below holds under either choice of energy, and a fortiori under the larger holographic figure (T7-4).
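The robustness claim can be checked with a short sensitivity sketch. It evaluates the Bekenstein bound for the rest-mass energy and for chemical energies of 10 J and 100 J, alongside the holographic area bound, and compares each with the synaptic ceiling of 10^{14} bits used later in (T7-5). Both bounds are natively in nats, so the sketch divides by ln 2 to report bits. Function and variable names are illustrative.

```python
import math

HBAR = 1.055e-34           # reduced Planck constant, J*s
C = 3.0e8                  # speed of light, m/s
PLANCK_LENGTH = 1.616e-35  # m
LN2 = math.log(2.0)

R = 0.07        # bounding radius, m
C_STATE = 1e14  # synaptic ceiling from (T7-5), bits


def bekenstein_bits(radius_m: float, energy_j: float) -> float:
    """Bekenstein bound 2*pi*R*E / (hbar*c), converted from nats to bits."""
    return 2.0 * math.pi * radius_m * energy_j / (HBAR * C) / LN2


def holographic_bits(radius_m: float) -> float:
    """Holographic bound A / (4 l_P^2) for a sphere, converted from nats to bits."""
    area = 4.0 * math.pi * radius_m**2
    return area / (4.0 * PLANCK_LENGTH**2) / LN2


bounds = {
    "rest-mass energy (1.4 kg)": bekenstein_bits(R, 1.4 * C**2),
    "chemical energy, 100 J": bekenstein_bits(R, 100.0),
    "chemical energy, 10 J": bekenstein_bits(R, 10.0),
    "holographic area bound": holographic_bits(R),
}

for label, bits in bounds.items():
    orders = math.log10(bits / C_STATE)
    print(f"{label:28s} {bits:.2e} bits, {orders:.1f} orders above C_state")
```

Even the most restrictive choice here (10 J) leaves the physics bound more than twelve orders of magnitude above the synaptic ceiling.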

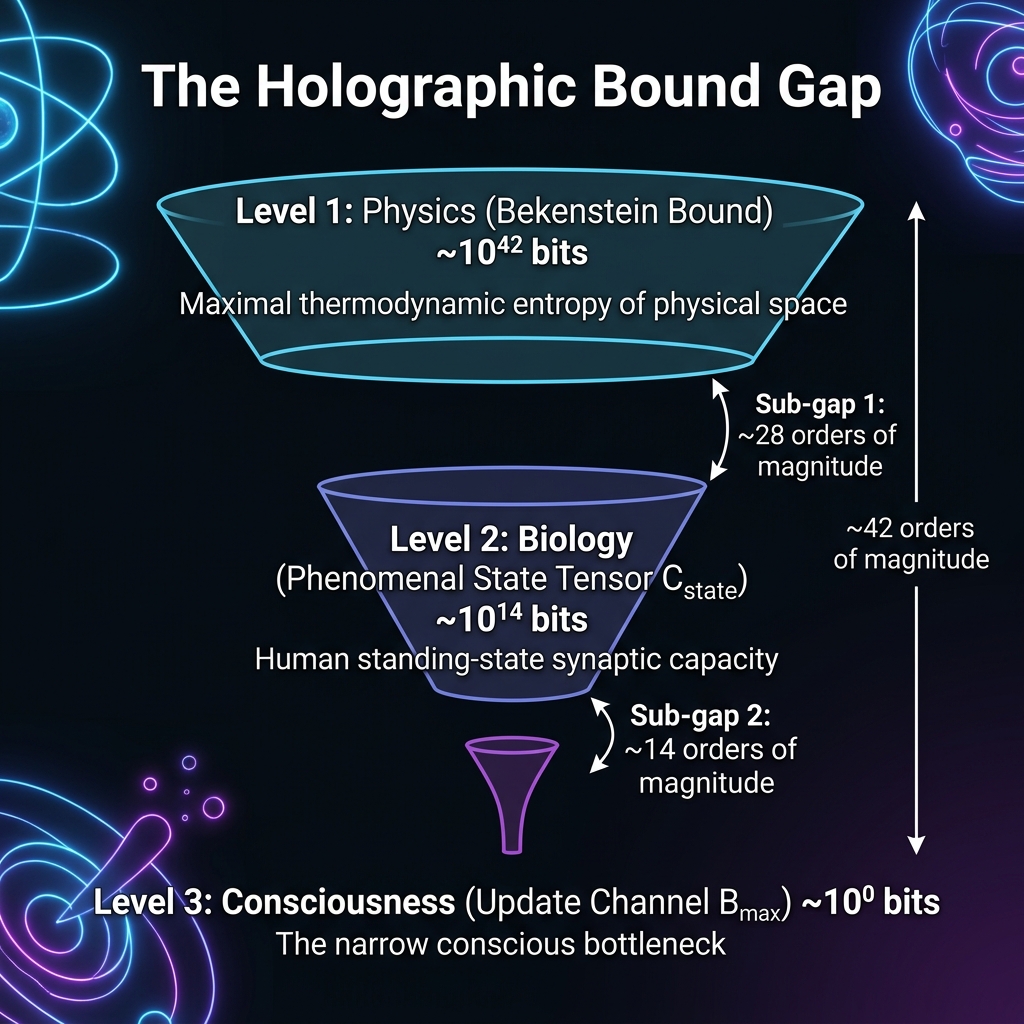

The Three-Level Gap

The Phenomenal State Tensor P_\theta(t) introduced in §3.5 identifies a physically meaningful intermediate scale between the physics bound S_{\text{phys}} and the update channel B_{\max}. We now have three distinct quantities at three distinct scales:

Level 1 (Physics): S_{\text{phys}} \approx 2.5 \times 10^{42}\ \text{bits} (Bekenstein bound, Eq. T7-3)

Level 2 (Biology): C_{\text{state}} = K(P_\theta(t)), the Kolmogorov complexity of the active generative model. We estimate a heuristic upper bound from the synaptic information limit: the human brain carries roughly 1.5 \times 10^{14} synapses, each encoding 4–5 bits [48], giving a raw structural capacity between \sim 10^{14} and 10^{15} bits. Rather than introducing an empirically unsupported fraction to model an "active state" subset, we adopt the full synaptic capacity, at order-of-magnitude precision, as a conservative ceiling:

C_{\text{state}} \lesssim 10^{14}\ \text{bits} \tag{T7-5}

This is an extreme upper bound: it covers the total deployed synaptic capacity supporting the codec.

Level 3 (Consciousness): B_{\max} = C_{\max} \cdot \Delta t \approx 10\ \text{bits/s} \times 0.05\ \text{s} = 0.5\ \text{bits} per cognitive moment (Eq. T8-1).
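The Level 2 and Level 3 figures follow from two short multiplications, reproduced in the sketch below with the inputs quoted in the text. Variable names are illustrative.

```python
SYNAPSES = 1.5e14               # synapse count quoted in the text
BITS_PER_SYNAPSE = (4.0, 5.0)   # encoding precision range quoted in the text

# Raw synaptic capacity at the low and high end of the precision range.
raw_low, raw_high = (SYNAPSES * bits for bits in BITS_PER_SYNAPSE)

C_MAX = 10.0    # conscious channel capacity, bits/s
DELTA_T = 0.05  # duration of one cognitive moment, s
b_max = C_MAX * DELTA_T

print(f"raw synaptic capacity: {raw_low:.1e} to {raw_high:.1e} bits")
print(f"B_max per cognitive moment: {b_max:.2f} bits")
```

The raw product falls inside the quoted 10^{14}–10^{15} range, so the exponent 14 in (T7-5) is best read as an order-of-magnitude label rather than a precise value.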

The three-level gap relation is:

\underbrace{S_{\text{phys}}}_{\approx 10^{42}} \;\gg\; \underbrace{C_{\text{state}}}_{\lesssim 10^{14}} \;\gg\; \underbrace{B_{\max}}_{\approx 10^{0}} \tag{T7-6}

with sub-gaps:

\frac{S_{\text{phys}}}{C_{\text{state}}} \approx \frac{2.5 \times 10^{42}}{10^{14}} = 2.5 \times 10^{28} \quad (\sim 28\ \text{orders of magnitude}) \tag{T7-7}

\frac{C_{\text{state}}}{B_{\max}} \approx \frac{10^{14}}{0.5} = 2 \times 10^{14} \quad (\sim 14\ \text{orders of magnitude}) \tag{T7-8}

\frac{S_{\text{phys}}}{B_{\max}} \approx 5 \times 10^{42} \quad (\sim 42\ \text{orders of magnitude}) \tag{T7-9}
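The ratios in (T7-7)–(T7-9) can be reproduced directly, as in the sketch below. It also checks that the two sub-gaps multiply to the total gap, so the orders of magnitude add on a logarithmic scale. Names are illustrative.

```python
import math

S_PHYS = 2.5e42   # bits, Eq. T7-3
C_STATE = 1e14    # bits, Eq. T7-5
B_MAX = 0.5       # bits per cognitive moment

gaps = {
    "S_phys / C_state": S_PHYS / C_STATE,
    "C_state / B_max": C_STATE / B_MAX,
    "S_phys / B_max": S_PHYS / B_MAX,
}

for label, ratio in gaps.items():
    print(f"{label:18s} = {ratio:.1e}  (10^{math.log10(ratio):.1f})")

# The sub-gaps compose multiplicatively into the total gap.
product = gaps["S_phys / C_state"] * gaps["C_state / B_max"]
print(math.isclose(product, gaps["S_phys / B_max"]))
```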

The total gap of ~42 orders confirms and sharpens the informal claim of §3.8 of the base paper.

The Two-Stage Compression Argument

The three-level structure is not merely refined accounting. Each sub-gap is explained by a distinct causal mechanism:

Sub-gap 1 (S_{\text{phys}} \gg C_{\text{state}}, \sim 28 orders of magnitude): Thermodynamic constraints prevent biological systems from approaching the Bekenstein limit. The generative model satisfies K(P_\theta(t)) \leq C_{\text{ceil}} (Eq. T6-2). A rough estimate of C_{\text{ceil}} follows from Landauer’s principle: each irreversible bit operation dissipates at least k_B T \ln 2 joules at temperature T. For a human brain operating at metabolic power P \sim 20 W, body temperature T \sim 310 K, and an operational update frequency f_{\text{op}} \sim 10^3 Hz, the maximum sustainable model complexity per cycle is:

C_{\text{ceil}} \sim \frac{P_{\text{metabolic}}}{k_B T \ln 2 \cdot f_{\text{op}}} \sim \frac{20}{3 \times 10^{-21} \times 10^3} \approx 7 \times 10^{18} \sim 10^{19}\ \text{bits}

This Landauer ceiling lies roughly 23 orders of magnitude below the Bekenstein bound, confirming that the physics limit is irrelevant to biological operating points. (Without the division by f_{\text{op}}, the same expression gives a dissipation-limited rate of \sim 10^{22} bit operations per second.) Note that the C_{\text{ceil}} \sim 10^{19} estimate still lies well above the observed synaptic capacity (\sim 10^{14}–10^{15} bits), suggesting that biological systems operate far below even their own thermodynamic ceiling, likely due to additional constraints (wiring cost, metabolic efficiency, evolutionary history) that OPT does not model.
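The Landauer estimate can be evaluated step by step. The sketch below reports the energy cost per irreversible bit operation, the resulting rate in bit operations per second, and the per-cycle figure obtained after dividing by f_{\text{op}}, so that the two normalisations are not confused. It uses the CODATA value of k_B; variable names are illustrative.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K
POWER = 20.0        # metabolic power of the brain, W
TEMP = 310.0        # body temperature, K
F_OP = 1e3          # operational update frequency, Hz

# Minimum energy dissipated per irreversible bit operation (Landauer).
energy_per_bit = K_B * TEMP * math.log(2.0)

# Dissipation-limited rate of bit operations per second.
ops_per_second = POWER / energy_per_bit

# Bit operations available within one update cycle of length 1 / F_OP.
bits_per_cycle = ops_per_second / F_OP

print(f"k_B T ln 2      = {energy_per_bit:.2e} J")
print(f"ops per second  = {ops_per_second:.2e}")
print(f"bits per cycle  = {bits_per_cycle:.2e}")
```

Under either normalisation the ceiling sits far below the Bekenstein bound and far above the synaptic capacity, so the qualitative ordering of the three levels does not depend on which one is used.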