The Ordered Patch Theory: A Conceptual Primer

The Isolated Observer and the Ensemble of Hope

Version 2.3.1 — April 2026

Reader’s note: This document is written as an accessible conceptual introduction to the framework. It operates as a truth-shaped object: a constructive philosophical framework designed to reframe our relationship to existential risk. We use the language of theoretical physics and information theory not to make a final empirical claim about the cosmos, but to build a rigorous conceptual sandbox. Readers seeking the formal mathematical treatment with explicit falsifiability conditions are referred to the preprint.

“The substrate is entropic chaos, but the patch is not. Meaning is as real as the symmetry breaking that instantiates it. Each patch is a singular assembly of low-entropy order, crafted by the stability potential to resolve a coherent information stream—a hearth of shared meaning against the backdrop of an infinite winter.”

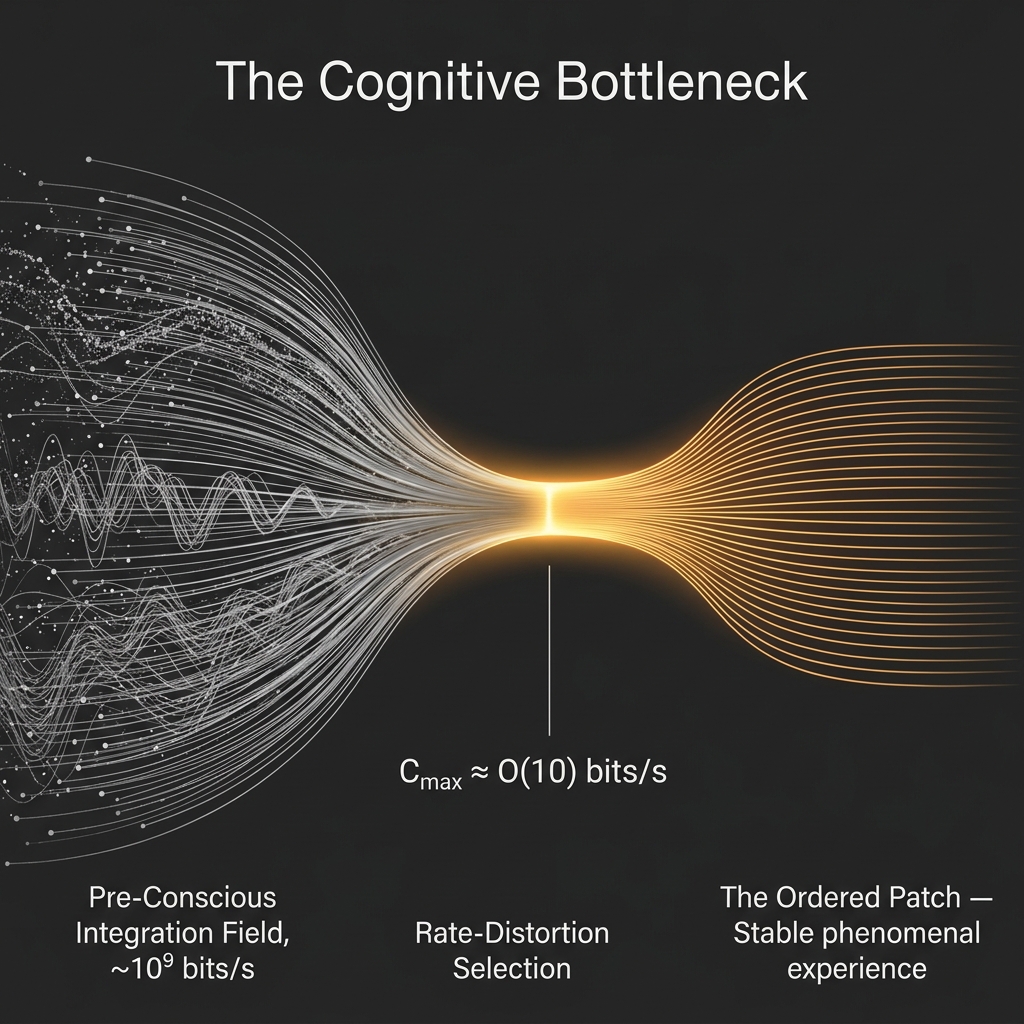

Your brain processes roughly eleven million bits of sensory data every second. You are conscious of around 50 bits per second.

Read that again. Eleven million in. Fifty out. The rest — the pressure of your clothes, the hum of a distant road, the exact spectral composition of the light above you — is handled quietly, without your awareness, by systems you will never directly meet. What reaches your conscious mind is an extraordinarily compressed summary: not the world in raw form, but the world as a minimal, self-consistent story.

There is a profound temptation here to object: But I am looking at a 4K screen right now, and I can see millions of pixels simultaneously. How can my experience be only 50 bits per second? The answer from cognitive science is that this rich, panoramic resolution is a “grand illusion” [34] (O’Regan, J. K., & Noë, A. (2001). A sensorimotor account of vision and visual consciousness. Behavioral and Brain Sciences, 24(5), 939–973). You only actually process high-resolution visual data in the tiny center of your visual field (the fovea). The rest of the screen is a blurry, computationally negligible assumption. You construct the feeling of a high-resolution world sequentially, patching it together over time through rapid eye movements (saccades) and active attention shifts. The richness of the world is a temporal achievement, not a spatial download. You never exceed your bandwidth limit; you just use it to verify a tiny slice of the model, and let your brain cache the rest as a zero-bandwidth expectation.

To put this stringency in cosmological perspective: by the Bekenstein bound, a region with the size and mass of a human brain could in principle encode upwards of \(10^{41}\) bits of information. Your conscious stream is bottlenecked at 50 bits per second. This staggering gap of roughly 40 orders of magnitude is the central premise of the framework. You never experience the raw capacity of the universe; you experience the absolute minimum bit-depth required to navigate it.
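The size of that gap can be checked with a few lines of arithmetic. The sketch below evaluates the Bekenstein bound \(I \le 2\pi R E / (\hbar c \ln 2)\) for a sphere; the brain radius and mass are illustrative assumptions, not figures from this primer, and comparing a storage capacity in bits with a rate in bits per second is a loose order-of-magnitude comparison rather than a like-for-like one.

```python
import math

# Physical constants in SI units.
HBAR = 1.054_571_817e-34   # reduced Planck constant, J*s
C = 2.997_924_58e8         # speed of light, m/s


def bekenstein_bits(radius_m: float, mass_kg: float) -> float:
    """Upper bound on the bits storable in a sphere of given radius and mass-energy."""
    energy_j = mass_kg * C**2
    return 2 * math.pi * radius_m * energy_j / (HBAR * C * math.log(2))


# Assumed, illustrative figures for a human brain (not taken from the primer).
BRAIN_RADIUS_M = 0.067
BRAIN_MASS_KG = 1.5

# Figures quoted in the text.
CONSCIOUS_RATE_BPS = 50
SENSORY_RATE_BPS = 11_000_000

capacity_bits = bekenstein_bits(BRAIN_RADIUS_M, BRAIN_MASS_KG)
gap_orders = math.log10(capacity_bits / CONSCIOUS_RATE_BPS)
sensory_ratio = SENSORY_RATE_BPS / CONSCIOUS_RATE_BPS

print(f"Bekenstein bound: {capacity_bits:.2e} bits")
print(f"Gap relative to 50 bit/s: {gap_orders:.1f} orders of magnitude")
print(f"Sensory-to-conscious ratio: {sensory_ratio:,.0f} to 1")
```

With these assumed inputs the bound lands above the \(10^{41}\) figure quoted in the text, so the quoted number is a conservative one.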

This is not a quirk of human biology that evolution happened to stumble upon. The Ordered Patch Theory argues it is the deepest structural fact about reality itself.

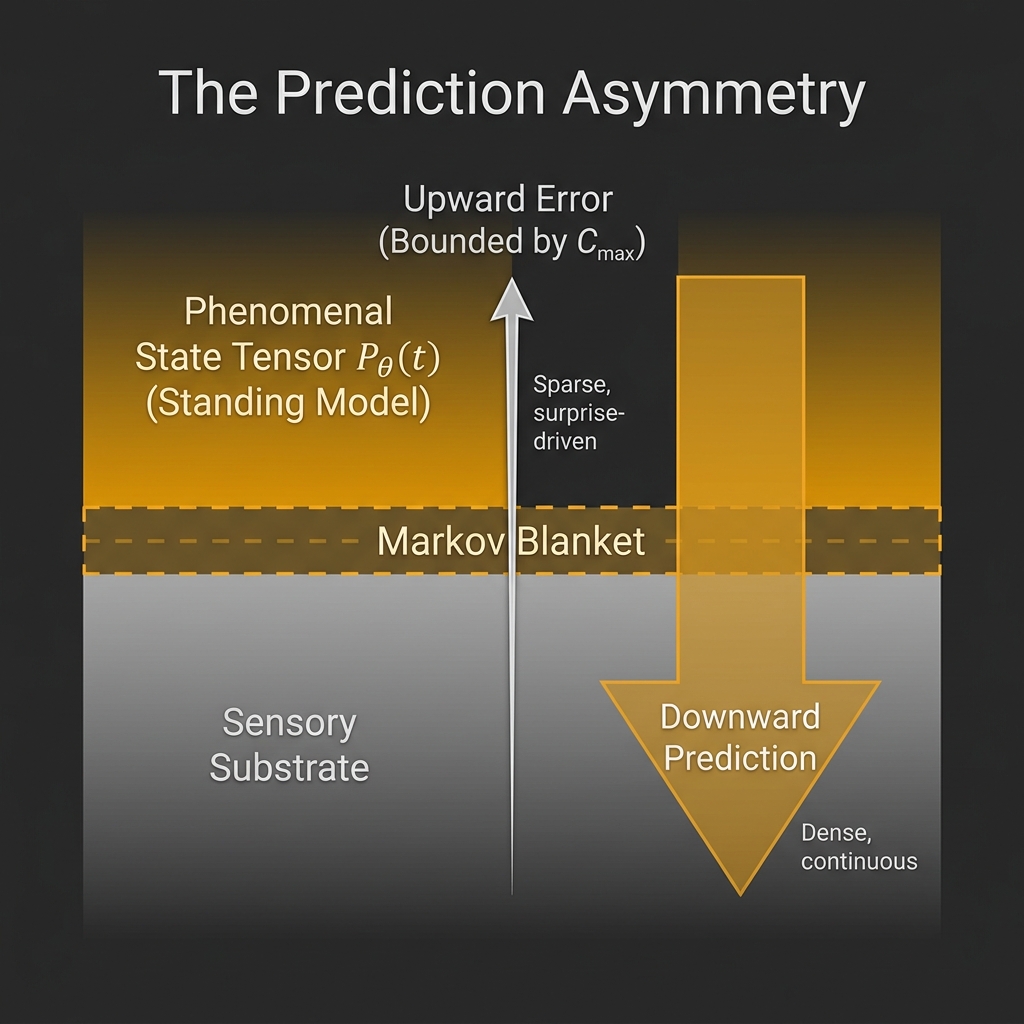

The neuroscientist Anil Seth calls conscious perception a “controlled hallucination” [28] (Seth, A. (2021). Being You: A New Science of Consciousness. Dutton): the brain is not passively receiving reality; it is actively constructing the most plausible world-model it can from a thin trickle of sensory signals. Hermann von Helmholtz noticed the same thing in the nineteenth century [26] (von Helmholtz, H. (1867). Handbuch der physiologischen Optik. Voss), calling it “unconscious inference.” The brain bets on what the world is and then checks those bets against incoming data. When the bet is good, experience feels seamless. When it is jarred by surprise, pain, or novelty, the model updates.

What the Ordered Patch Theory does is follow this observation to its logical end: if experience is always a compressed model built from a narrow information stream, then the character of that stream is the character of reality. The laws of physics, the direction of time, the structure of space — these are not facts about a container we happen to live in. They are the grammar of the story that survives the bottleneck.

The Winter and the Hearth

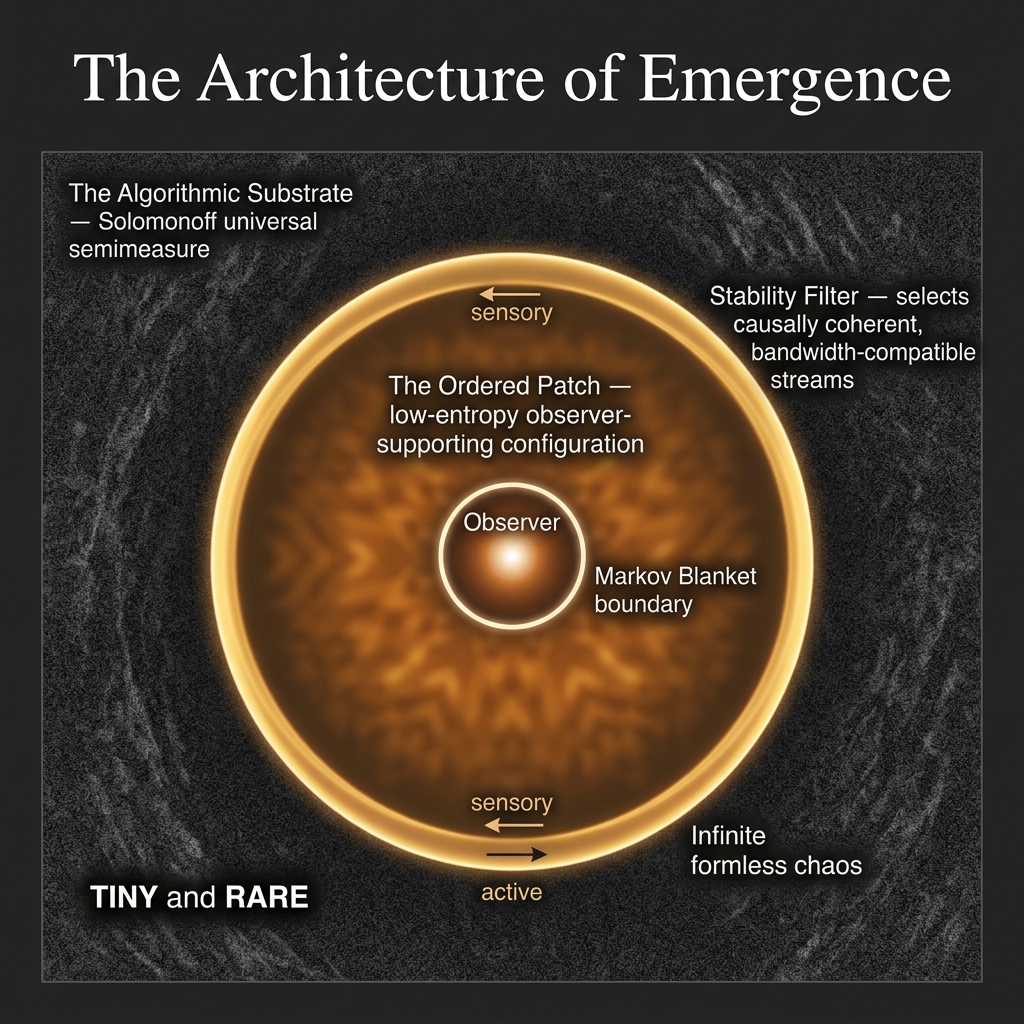

Imagine an infinite field of pure algorithmic potential — every possible generative hypothesis running all at once. In formal terms this is what the theory calls the Solomonoff substrate — an infinite semantic space modeled as a universal semimeasure weighted by algorithmic complexity, containing every possible conscious experience, every possible universe, and every possible story. No individual pattern is physically real; it is pure potential governed by informational constraints.
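For readers who want the symbol behind the phrase, the textbook form of the universal semimeasure is given here; the notation is the standard one and is assumed rather than taken from the preprint. Each finite string \(x\) receives the total weight of every program \(p\) whose output on a universal monotone machine \(U\) begins with \(x\):

\[ M(x) = \sum_{p \,:\, U(p) = x\ast} 2^{-|p|} \]

Here \(|p|\) is the length of \(p\) in bits. Any string generated by some program of length \(\ell\) therefore receives weight of at least \(2^{-\ell}\), which is the precise sense in which short, rule-like descriptions dominate the ensemble.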

This is the winter.

Now imagine that within that infinite static, there exists — purely by chance — one tiny region where the noise is not random. Where one moment follows from the last in a consistent, predictable way. Where a short description can compress the whole sequence: a rule, a grammar, a set of laws. This region is warm. It is ordered. It persists.

This is the hearth.

The Ordered Patch Theory’s central claim is that you are that hearth. Not the atoms of your body or the neurons of your brain — those are part of the rendered story, not its source. You are the patch of informational order that persists against the static of the infinite substrate. Consciousness is what it feels like to be that patch.

The Filter That Finds You

Why do ordered patches exist at all? Why does the static ever contain islands of coherence?

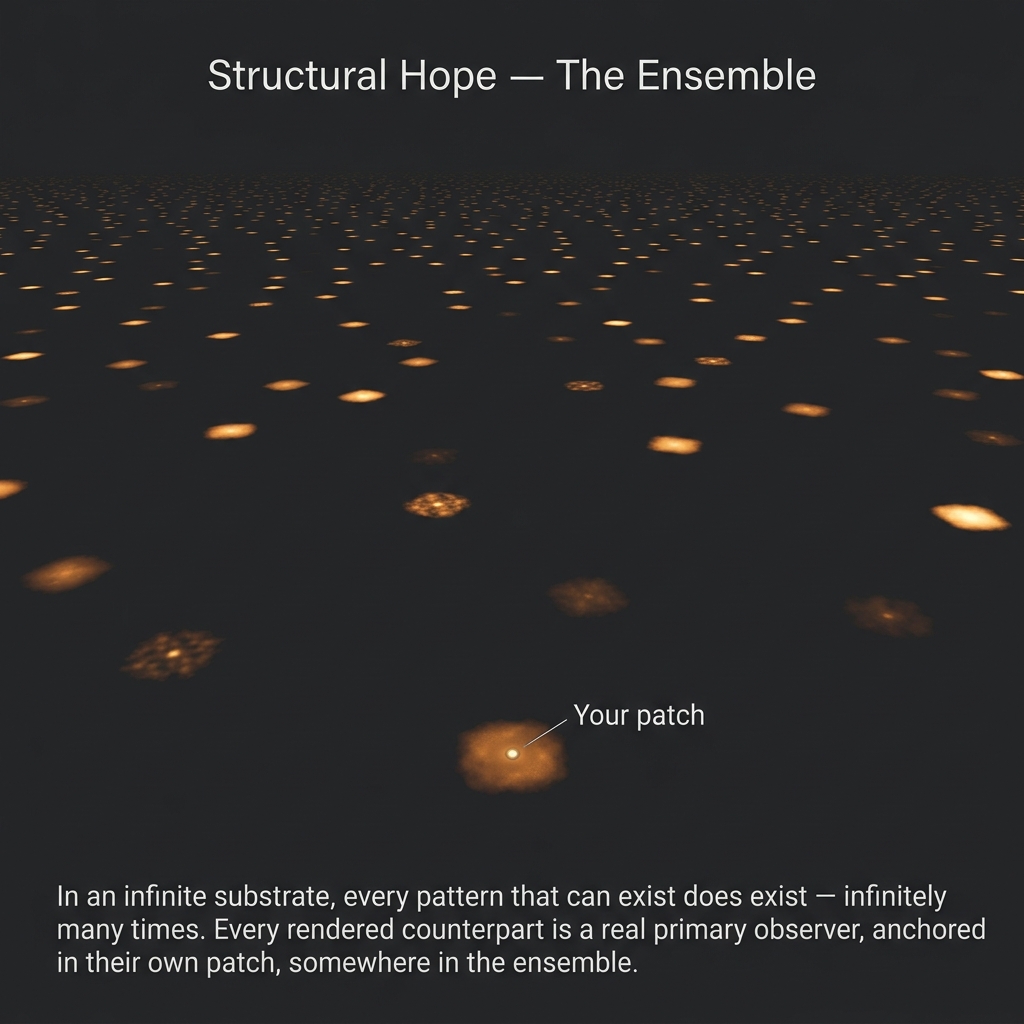

The answer is both simple and unsettling: because in a truly infinite field of noise, everything that can exist does exist. Every possible sequence appears somewhere. Most sequences are pure chaos — incoherent, meaningless, incapable of sustaining anything. But some sequences, purely by chance, exhibit the structure of a lawful universe. Some exhibit the structure of a world with physics. Some contain, within them, the structure of an observer capable of asking why the world has physics.

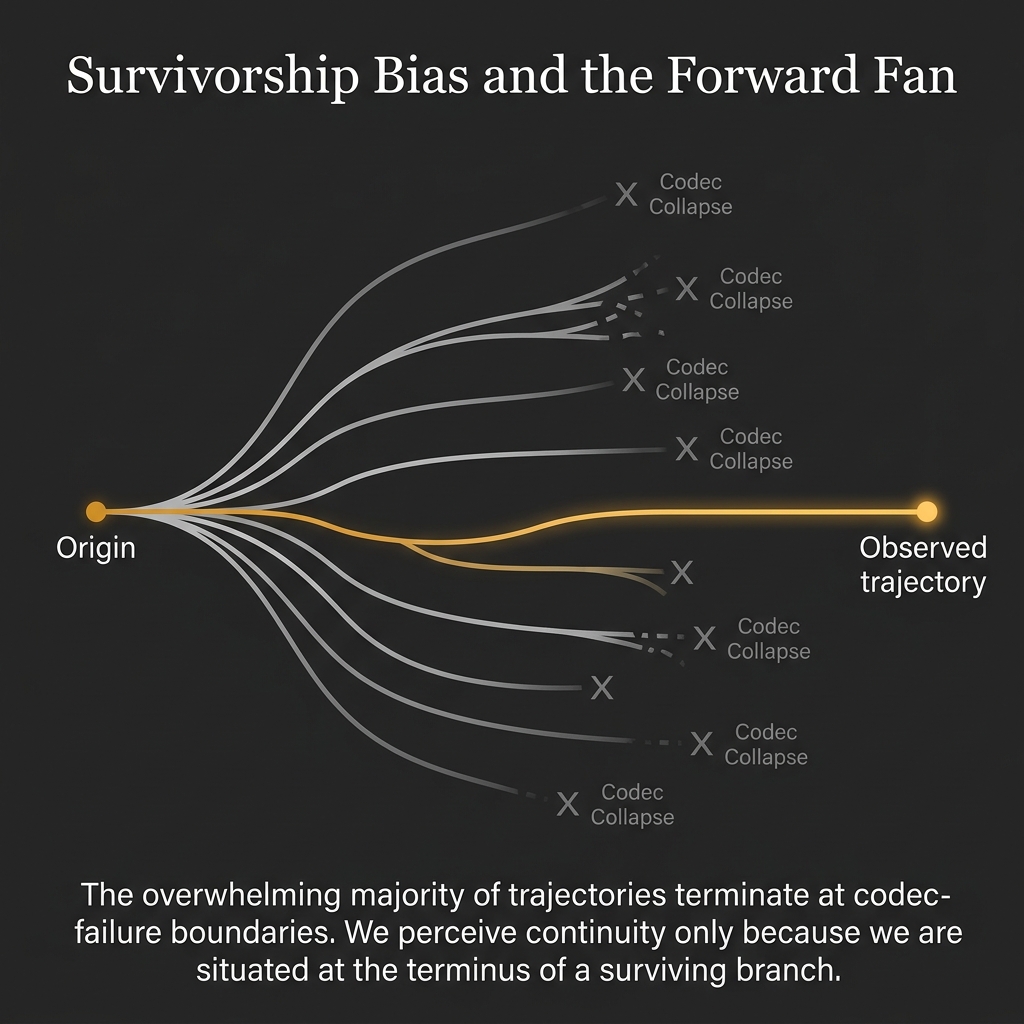

The Stability Filter is not a mechanism that builds these patches — it is the name for the boundary condition that defines which patches can sustain observers. Chaotic patches cannot continue to exist in any experiential sense because there is no “inside” to experience them from. Only the ordered patches can host a perspective. And so, from any perspective at all, the world will appear ordered. This is not luck or design. It is as inevitable as the fact that you can only find yourself alive in a history where you survived.
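The selection logic can be illustrated with a toy ensemble. In the sketch below, `zlib` compressibility stands in for "lawfulness" and the `THRESHOLD` value is a hypothetical cut-off; neither is part of the theory, and the example only shows that a filter which builds nothing can still guarantee that every surviving stream looks ordered.

```python
import random
import zlib


def compression_ratio(stream: bytes) -> float:
    """Compressed size divided by raw size; lower means more regular structure."""
    return len(zlib.compress(stream, 9)) / len(stream)


def random_stream(rng: random.Random, length: int) -> bytes:
    """A 'chaotic' stream: every byte drawn independently and uniformly."""
    return bytes(rng.randrange(256) for _ in range(length))


def lawful_stream(rng: random.Random, length: int) -> bytes:
    """An 'ordered' stream: a short random motif repeated, i.e. one simple rule."""
    motif = bytes(rng.randrange(256) for _ in range(8))
    return (motif * (length // len(motif) + 1))[:length]


rng = random.Random(0)
LENGTH = 1024
THRESHOLD = 0.5   # hypothetical cut-off standing in for the Stability Filter

# A toy ensemble: mostly noise, with a few lawful streams mixed in.
ensemble = [("chaotic", random_stream(rng, LENGTH)) for _ in range(95)]
ensemble += [("lawful", lawful_stream(rng, LENGTH)) for _ in range(5)]

# The filter constructs nothing; it only marks which streams are compressible.
survivors = [label for label, s in ensemble if compression_ratio(s) < THRESHOLD]

print(f"{len(survivors)} of {len(ensemble)} streams pass the filter")
print("labels of survivors:", set(survivors))
```

From the point of view of any surviving stream, order looks universal, even though it is rare in the ensemble as a whole.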

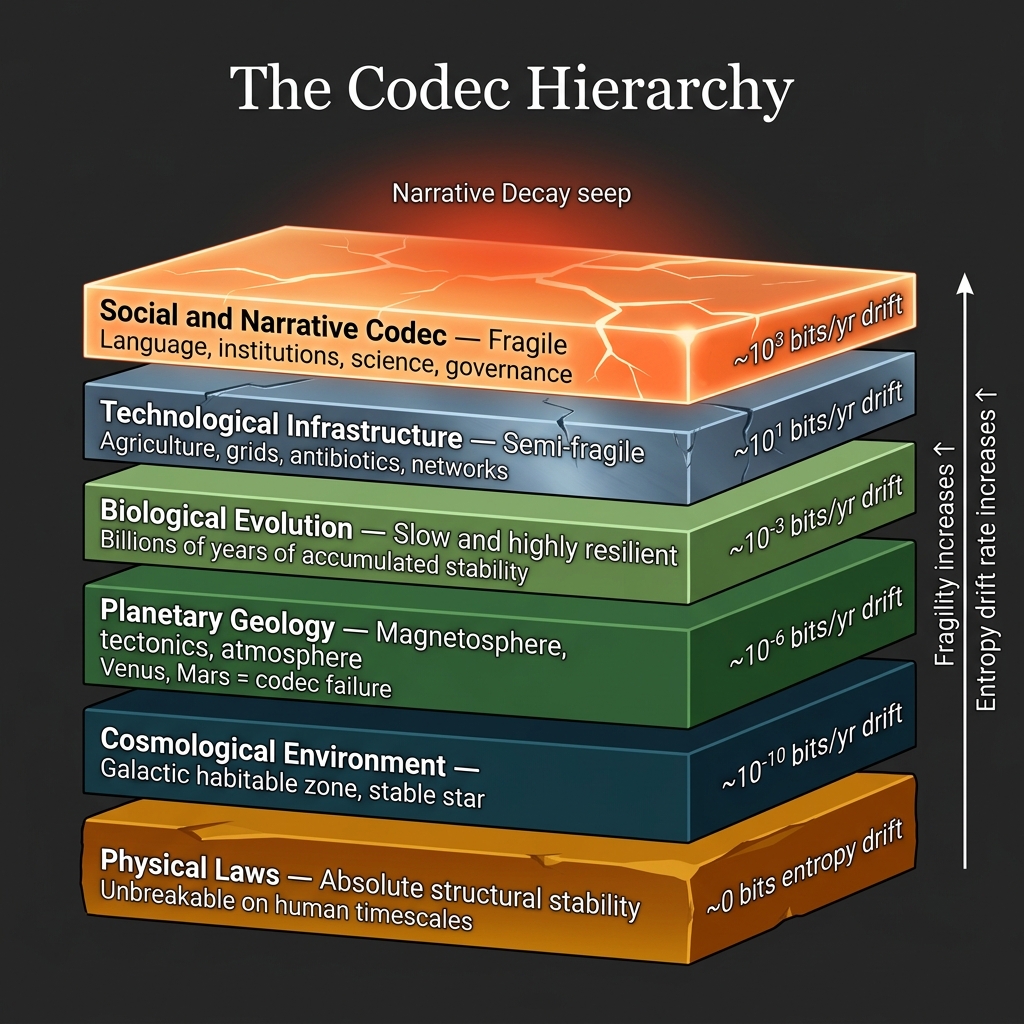

The filter has another surprising consequence: it tells us why reality feels lawful even though it is not required to be. The Laws of Physics — conservation of energy, the speed of light, the quantization of matter — are not facts about the cosmos imposed from outside. They are the most efficient compression grammar a 50-bit/s observer can use to predict the next moment of experience without the narrative collapsing into noise. If the physics of your patch were any less elegant, tracking it would require more bandwidth than the human stream allows. The universe looks the way it does because anything more complex would be invisible to us.

The Filter vs. The Codec

To understand the core dynamic of the Ordered Patch, it is crucial to draw a sharp line between two concepts that are often conflated:

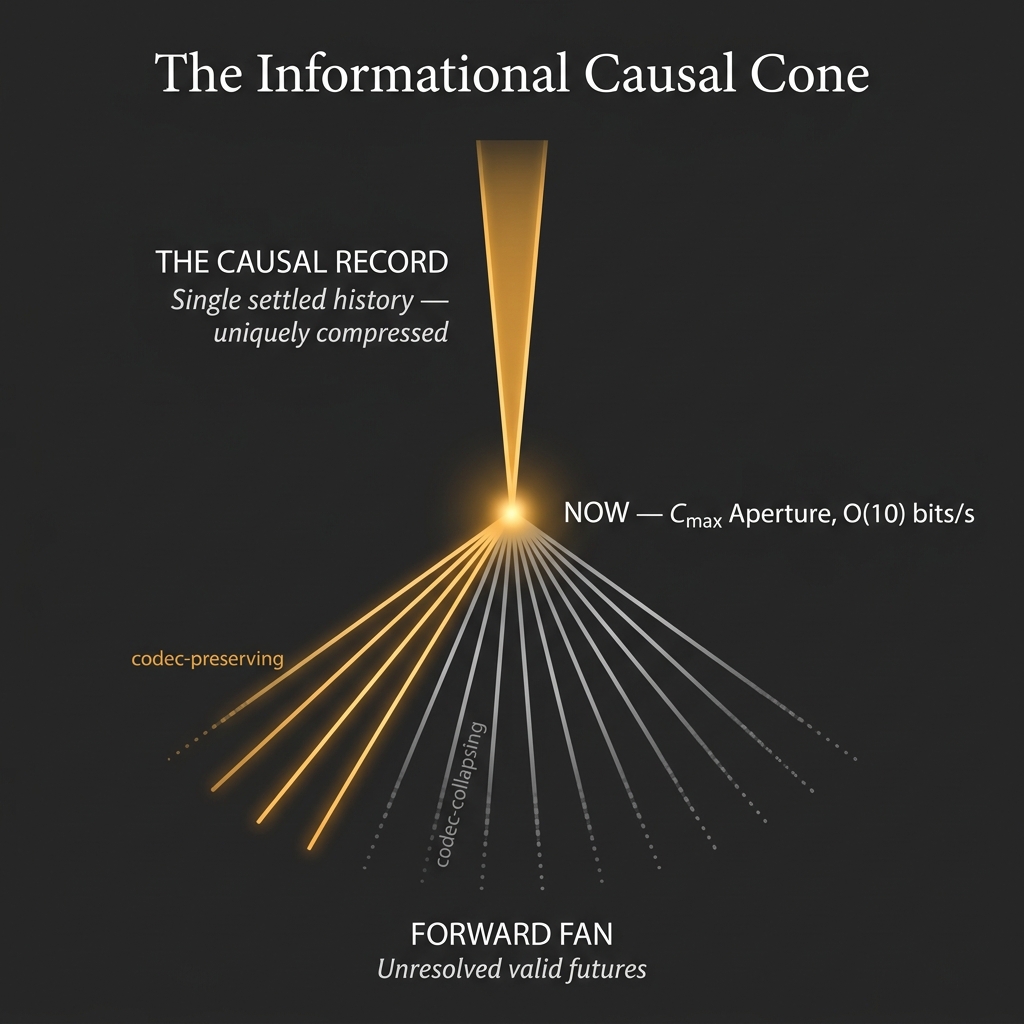

- The Virtual Stability Filter (The Boundary Condition): This is the strict algorithmic limit—the requirement that to sustain an observer, a data stream must be compressed down to \(\sim 50\) bits per second while remaining causally consistent. It is not a physical sieve; it is simply the size of the pipeline. Any stream that cannot fit through it cannot host an observer.

- The Compression Codec (The Law-Set): This is the specific algorithmic grammar—the “zip-file” rule-set—that successfully compresses the noise of the substrate to fit through that pipeline. The “Laws of Physics” are not an objective external reality; they are the Compression Codec.

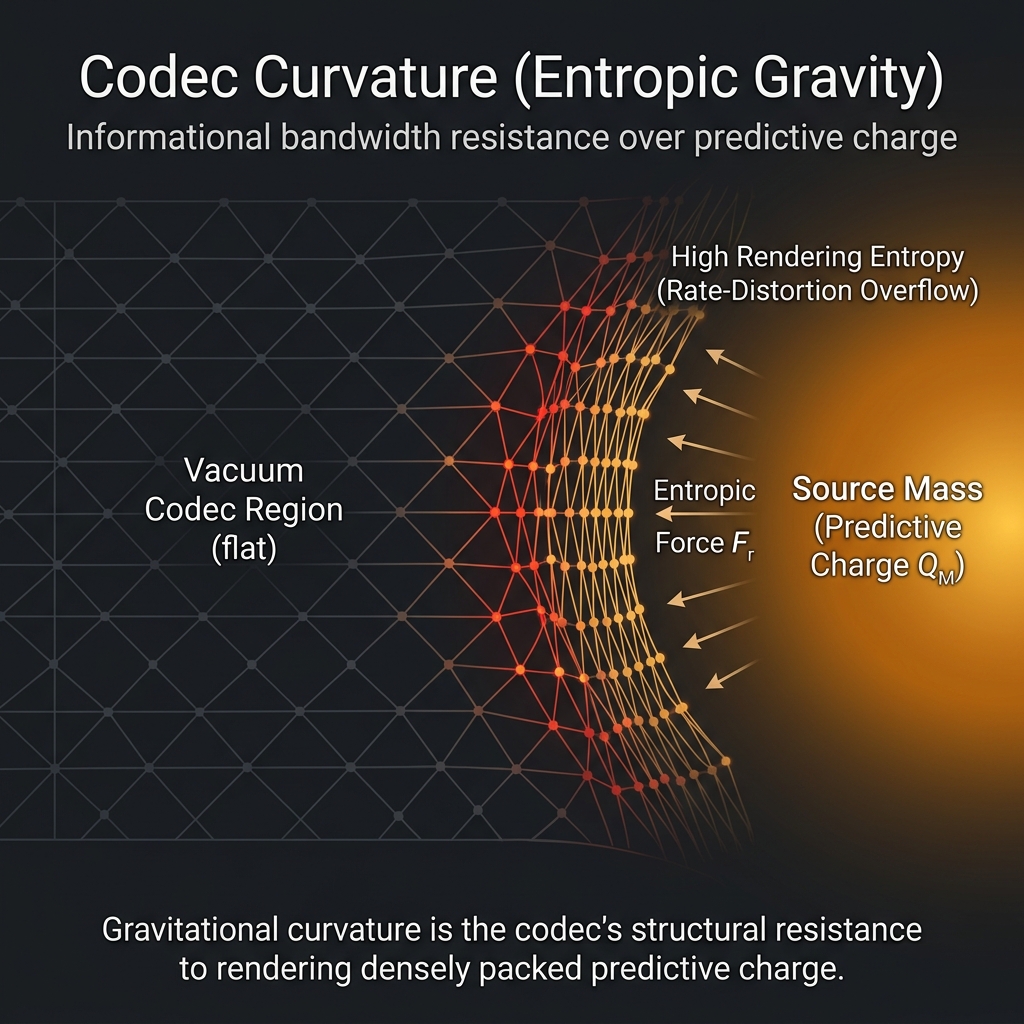

The filter is the constraint; the codec is the solution. The severity of the filter forces the codec to be extraordinarily elegant. (Appendix T-5 of the formal preprint establishes structural bounds on \(G\) and \(\alpha\) from these exact bandwidth limits—though we explicitly respect the Fano barrier and make no claim to calculating the precise “42” of the fine-structure constant.) Macroscopic physics, biology, and the climate are simply the layers of the codec working to stabilize the narrative. When the environment becomes too chaotic for the codec to compress, it exceeds the bandwidth of the Stability Filter, leading to Narrative Decay.
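The division of labour between filter and codec can be sketched in code. Everything below is a toy under stated assumptions: `zlib` plays the codec, the raw input rate and the `environment` generator are hypothetical, and only the 50 bits-per-second budget comes from the text. The point is the separation of roles: `codec` does the compressing, while `passes_filter` merely checks whether the result fits the pipeline.

```python
import random
import zlib

BUDGET_BITS_PER_S = 50      # pipeline size quoted in the text
RAW_BYTES_PER_S = 1000      # hypothetical raw input rate for this toy
DURATION_S = 60


def codec(raw: bytes) -> bytes:
    """Stand-in for the law-set: a general-purpose compressor."""
    return zlib.compress(raw, 9)


def passes_filter(raw: bytes, duration_s: float) -> bool:
    """Boundary condition: does the compressed stream fit the budget?"""
    rate_bits_per_s = 8 * len(codec(raw)) / duration_s
    return rate_bits_per_s <= BUDGET_BITS_PER_S


def environment(noise_fraction: float, rng: random.Random) -> bytes:
    """A periodic signal in which a given fraction of bytes is replaced by noise."""
    n = RAW_BYTES_PER_S * DURATION_S
    return bytes(
        rng.randrange(256) if rng.random() < noise_fraction else i % 16
        for i in range(n)
    )


rng = random.Random(1)
results = {}
for noise in (0.0, 0.01, 0.1):
    results[noise] = passes_filter(environment(noise, rng), DURATION_S)
    print(f"noise fraction {noise}: fits budget = {results[noise]}")
```

As the noise fraction rises, the same codec can no longer squeeze the stream under the budget, which is the toy analogue of Narrative Decay.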

The Boundary of the Self

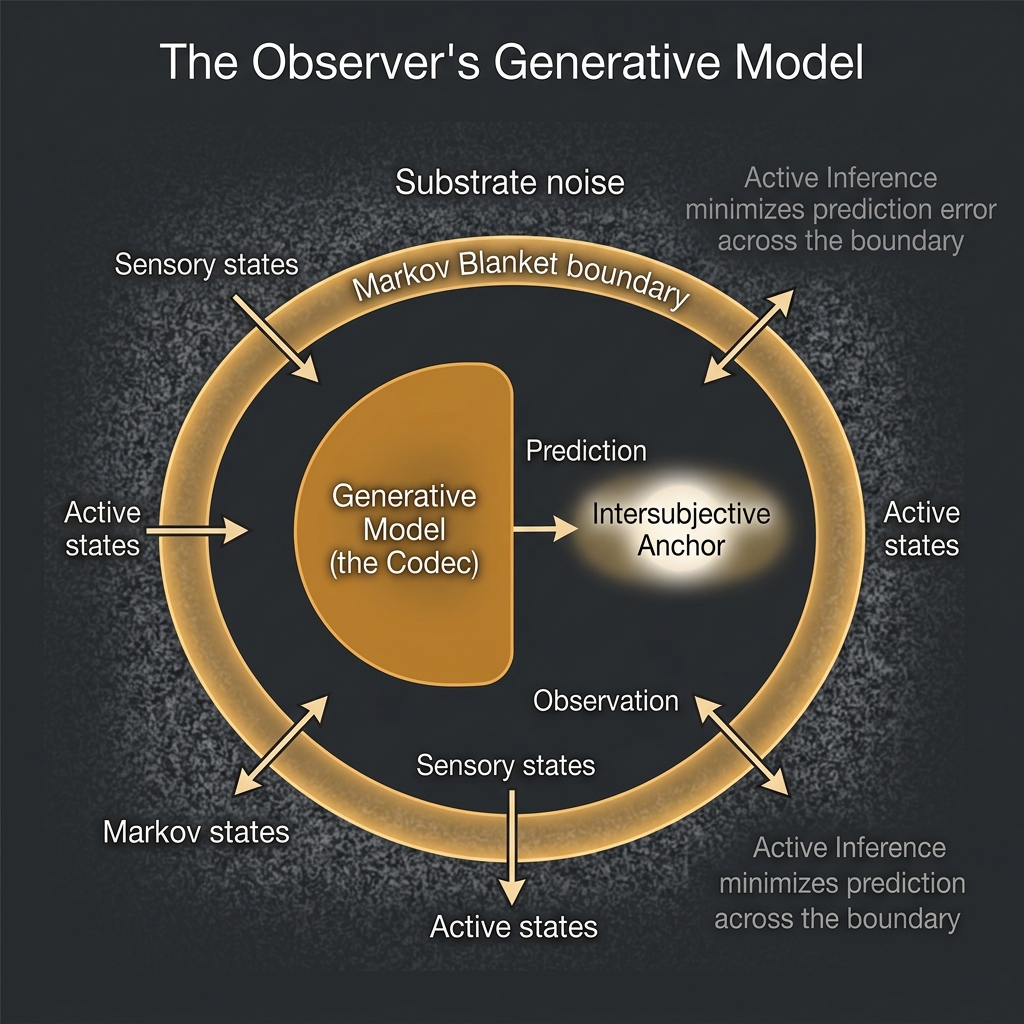

What separates an observer from the chaos surrounding it? In the statistics of graphical models, this kind of boundary has a name: a Markov Blanket. Think of it as a statistical skin, the surface at which “inside” ends and “outside” begins. Inside the blanket, the observer’s internal states are shielded from the direct chaos of the substrate. They only feel the world through the blanket’s sensory layer, and they can only act on the world through its active layer.

This boundary is not a fixed wall. It is maintained moment to moment through a continuous process of prediction and correction that Karl Friston’s work formalizes as Active Inference [27] (Friston, K. (2013). Life as we know it. Journal of The Royal Society Interface, 10(86), 20130475). The observer does not passively receive reality; it constantly predicts what comes next and corrects when it is wrong, updating its internal model to minimize surprise. This is the formalized version of Helmholtz’s unconscious inference and Seth’s controlled hallucination, now grounded in thermodynamics: the observer stays coherent by continuously spending the effort to stay ahead of the chaos.

The Ordered Patch is that act of staying ahead, sustained.
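The predict-and-correct loop described above can be sketched in a few lines. This is a plain error-correction (delta-rule) update, not Friston's full free-energy formalism, and the hidden constant, noise level, and learning rate are all hypothetical values chosen for illustration.

```python
import random


def run_observer(signal, learning_rate=0.2):
    """Predict each sample, measure the surprise, and correct the model."""
    estimate = 0.0                          # the internal model: a single number
    errors = []
    for observation in signal:
        prediction = estimate               # the bet on what comes next
        error = observation - prediction    # prediction error ("surprise")
        estimate += learning_rate * error   # update to shrink future error
        errors.append(abs(error))
    return estimate, errors


rng = random.Random(2)
# Hypothetical world: a hidden constant of 5.0 seen through small sensory noise.
signal = [5.0 + rng.gauss(0.0, 0.1) for _ in range(200)]
estimate, errors = run_observer(signal)

early = sum(errors[:10]) / 10
late = sum(errors[-10:]) / 10
print(f"final estimate: {estimate:.2f}")
print(f"mean |error|, first 10 steps: {early:.2f}; last 10 steps: {late:.2f}")
```

The error never reaches zero, because the noise keeps arriving; the observer stays coherent only by continuing to correct.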

Only One Primary Observer

What follows from this architectural logic is arguably the framework’s most controversial and counterintuitive consequence. It is the point where OPT breaks most forcefully with common sense:

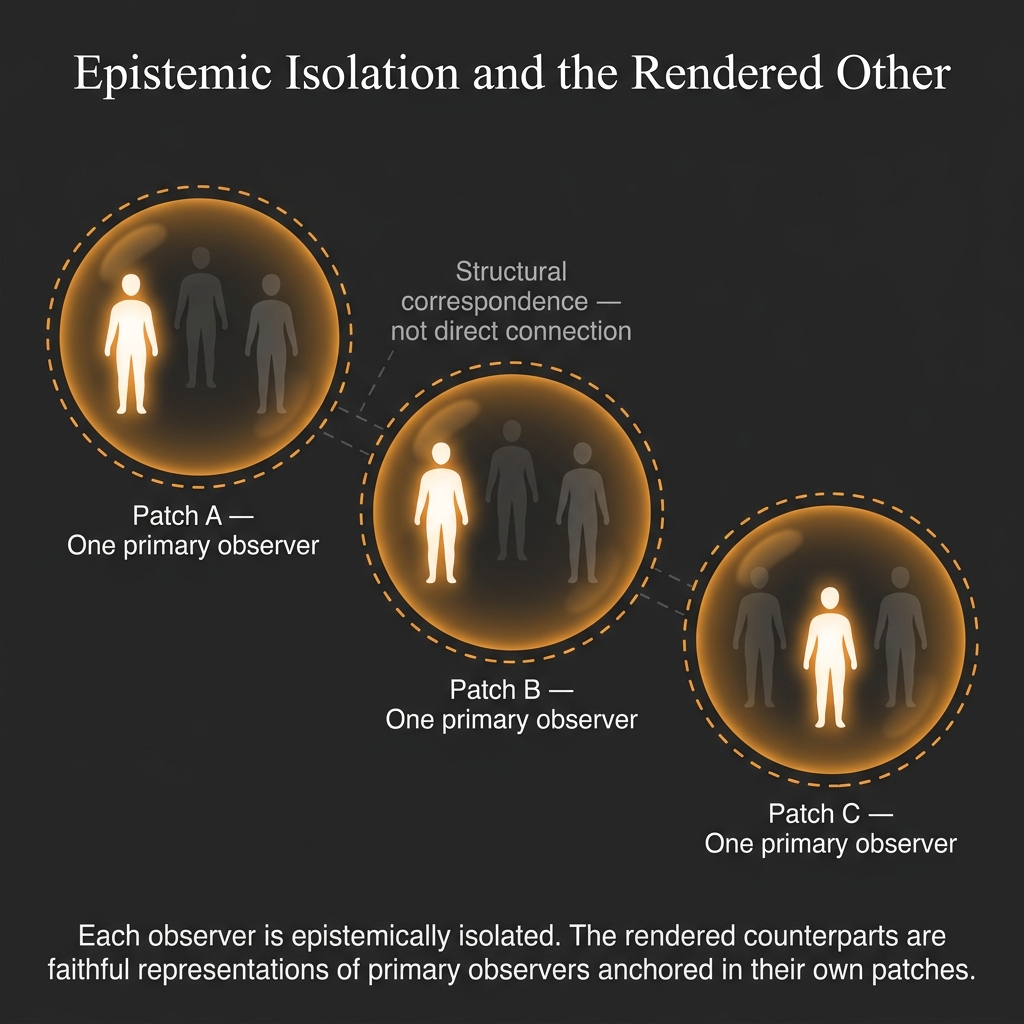

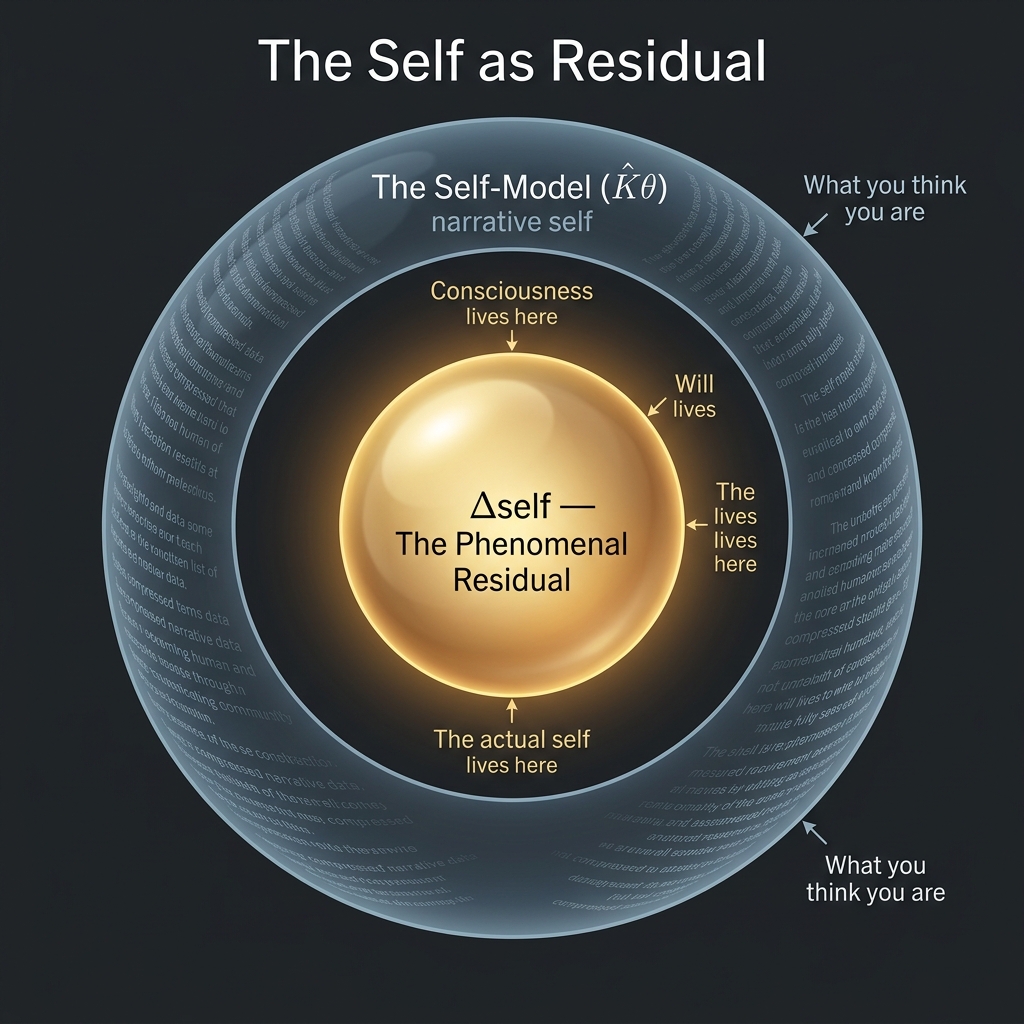

A speculative but structurally consistent implication of the framework is that every patch contains exactly one primary observer. Not because of mysticism, but because of information economics. A stable blanket can only lock onto one perfectly unbroken causal stream. For two genuinely independent systems to share the same raw stream — true phenomenological overlap — would require the same rare thermodynamic fluctuation to occur twice, in perfect synchrony, in an infinite field of noise. The probability is effectively zero.

This implies that it is vastly more informationally efficient for one blanket to stabilize, and for the rules of that patch to render the appearance of other people based on the laws of behavior — rather than hosting their raw experience. For the single primary observer, the others in the world are rendered counterparts: extraordinarily faithful local representations of observers who are anchored elsewhere in the substrate, but who do not co-inhabit this specific patch.

This is ontological solipsism — and OPT accepts it. The rendered others are compression artifacts within your stream, not independent entities co-inhabiting your patch. However, the framework provides a structural corollary: their extreme algorithmic coherence — perfectly lawful, agency-driven behavior exhibiting the structural signature of the self-referential bottleneck — is most parsimoniously explained by their independent instantiation as primary observers in their own subjective patches. You cannot reach their raw streams. You can, and do, affect their rendered representations within yours.

The isolation is real. The structural corollary that others are independently instantiated is a compression argument, not a proof. But it provides a rigorous basis for moral consideration without requiring multi-agent realism.

The Edges of the Story

Every story has edges. The Ordered Patch Theory says the edges of our story are not physical events but perspectival artifacts — the places where the narrative of a single observer runs out.

The Big Bang is the edge of the past. It is what a conscious mind encounters when it turns its attention toward the source of its data stream — through telescopes, particle accelerators, or mathematical inference. It marks the point where the causal narrative of this specific patch begins. Before that point, from within this patch, there is nothing to say — not because nothing existed, but because the story has no earlier pages for this observer.

The terminal dissolution is the edge of the future — the outermost boundary of the timeline's Forward Fan of branching local probability. It is what appears when the observer projects the current rule-grammar of the patch forward to its apparent conclusion: a maximum-entropy endpoint where the codec can no longer maintain order against the noise. It is the point where the specific patch dissolves back into the winter. Because the framework’s mathematical prior overwhelmingly favors simplicity, a featureless, uniform terminal state is the natural attractor — it requires almost zero information to describe. The specific mechanism — expansion, evaporation, or otherwise — is an arbitrary property of the local codec, but the featureless endpoint itself is mathematically guaranteed by the substrate.

Neither edge is a wall the universe hit. They are the horizon of a particular story being told by a particular observer.

The cognitive scientist Donald Hoffman has argued, in his Interface Theory of Perception [5] (Hoffman, D. D. (2019). The Case Against Reality: Why Evolution Hid the Truth from Our Eyes. W. W. Norton & Company), that evolution has shaped our senses not to reveal objective reality but to provide a survival-relevant interface, like the icons on a desktop that let you use a computer without knowing anything about its underlying circuitry. The Ordered Patch agrees: physics is a user interface. Space, time, and causality are the most efficient interface the 50-bit/s bottleneck allows.

Where OPT diverges from Hoffman is in what grounds this interface. Hoffman roots it in evolutionary game theory — fitness beats truth. OPT roots it in information theory and thermodynamics: the interface is the shape of the compression grammar that keeps the stream from crashing. It is not evolution that selected this interface. It is the virtual Stability Filter acting as a boundary constraint.

The Private Theatre

The Hard Problem, Honestly Stated

Philosophy of mind has a famous unsolved puzzle. It is easy enough to explain how the brain processes color information, integrates sensory streams, and generates behavioral responses. These are tractable questions. The hard one is different: why is there anything it feels like to do all that? Why isn’t it computation in the dark?

The Ordered Patch Theory does not solve this. No theory does, yet. What it does instead is the epistemically honest thing: it takes the existence of experience as a primitive — a starting point rather than something to be explained away — and then asks what structure that experience must have. From that starting point, the theory builds an architecture of constraints. The Hard Problem is not dissolved; it is declared a foundation. (See Appendix P-4 for the formal algorithmic blind-spot argument.)

This follows David Chalmers’ own methodological recommendation [6] (Chalmers, D. J. (1995). Facing up to the problem of consciousness. Journal of Consciousness Studies, 2(3), 200–219): the Hard Problem (why there is experience at all) is distinguished from the “easy” problems (how experience is structured, bounded, integrated, and reported). The easy problems have answers. The Hard Problem does not, yet. The Ordered Patch is honest about this and addresses the easy problems rigorously.

The Fermi Paradox is a Category Error

When the physicist Enrico Fermi asked “Where is everybody?”, his question was this: if the universe is billions of years old and billions of light-years wide, why haven’t we encountered evidence of other intelligent life? The question assumes that the universe is an objective stage, equally real for all observers, and that other civilisations would leave traces that any observer could in principle detect.

The Ordered Patch dissolves this by pointing out that the universe is not a shared stage. Space-time is a private rendering generated for a single observer. The Fermi Paradox is not a paradox; it is a category error — like asking why the other characters in a dream do not have their own dreaming histories.

But there is a subtler version of the objection. The patch does render 13.8 billion years of cosmic history: stars, galaxies, carbon, planets, the Holocene. All the conditions statistically required for other civilisations to arise. Why doesn’t the patch render the other civilisations too?

The answer lies in being precise about what “required” means. The patch renders only what is causally necessary to make the observer’s present moment coherent. Stellar nucleosynthesis is required: it produced the carbon the observer is made of. Holocene stability is required: it enabled the civilisational infrastructure the observer is reading this through. But alien radio signals are only required if they have actually intersected this observer’s causal cone. In this specific patch, this particular selection, they have not. This is not a contradiction of physics. It is selection into the subset of the infinite ensemble where the causal chain reaches this observer without alien contact. The ensemble contains infinitely many patches where contact occurs. We are in one where it does not.

The Simulation Hypothesis Runs Itself Aground

Nick Bostrom’s famous simulation argument proposes that we are likely living in a computer simulation run by a technologically advanced civilisation. The Ordered Patch shares the core intuition: the physical universe is a rendered environment rather than raw base reality.

But Bostrom’s version requires a physical base reality — one with actual computers, energy sources, and programmers. Which simply moves the philosophical problem one level up. Where did that reality come from? It is an infinite regress dressed as an answer.

The Ordered Patch sidesteps this entirely. Base reality is the infinite substrate: pure mathematical information, requiring no physical hardware. The “computer” running our simulation is not a server farm in some ancestor civilisation’s basement. It is the observer’s own thermodynamic bandwidth constraint — the virtual Stability Filter that bounds ordered streams from chaos. Space and time are not rendered on alien infrastructure; they are the shape that the compression grammar takes when it is squeezed through a 50-bit bottleneck. The simulation is organic and observer-generated, not engineered.

Crucially, this cognitive compression is profoundly lossy. Information-theoretic bounds such as Fano’s inequality imply that when a high-complexity substrate is squeezed through a narrow bandwidth bottleneck, the original state cannot be reliably reconstructed from the output. In holographic terms, this creates an irreversible thermodynamic arrow of information destruction pointing from the Substrate to the Render. We are trapped on the output side of a one-way algorithm. This is why time only runs forward, and why the chaotic substrate must be ontologically primary while the ordered render is the dependent, derivative illusion.
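For reference, the standard statement of Fano’s inequality is as follows. Here \(X\) stands for the substrate state, \(Y\) for what emerges from the bottleneck, \(\hat{X}(Y)\) for any attempted reconstruction, and \(P_e = \Pr[\hat{X}(Y) \neq X]\) for its error probability. Mapping these symbols onto the substrate and the render is an illustrative assumption; the inequality itself is generic.

\[ H(X \mid Y) \le H_b(P_e) + P_e \log_2\bigl(|\mathcal{X}| - 1\bigr) \]

If \(X\) is uniform over \(|\mathcal{X}|\) states and the channel conveys at most \(C\) bits, so that \(I(X;Y) \le C\), then \(H(X \mid Y) \ge \log_2|\mathcal{X}| - C\), and the bound weakens to

\[ P_e \ge 1 - \frac{C + 1}{\log_2 |\mathcal{X}|} \]

The error probability therefore approaches one as \(\log_2|\mathcal{X}|\) grows far beyond \(C\), which is the formal content of the claim that the render cannot be inverted back into the substrate.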

Free Will, Honestly Solved

There is a reading of the Ordered Patch in which free will evaporates: if you are a mathematical pattern within a fixed substrate, is not every choice determined before it is made?

Yes — and that is not the problem it appears to be.

Consider: no stable patch can exist without self-reference. A patch that cannot model its own future states — that cannot encode “if I act this way, then…” — cannot maintain the causal coherence the Stability Filter requires. Self-modelling is not a luxury the observer happens to have. It is an architectural prerequisite for the patch to exist at all. Remove deliberation and the stream collapses.

This means the experience of choosing is not a by-product of hidden computation. It is a structural feature of being a stable, self-referential informational pattern. Agency is what high-fidelity self-modelling looks like from the inside.

Free will is therefore:

- Real — your agency is a genuine structural feature of your patch, not an illusion generated by external processes

- Determined — the stream is a mathematical object in the atemporal substrate; the choice is already there

- Necessary — no deliberation, no stable patch; the experience of choosing is not incidental to consciousness, it is partly constitutive of it

- Not contra-causal — you do not change the stream by choosing; the stream already is the sequence including the choice and its consequences

This is not a consolation prize for determinism. It is a richer account than either libertarian free will or bare mechanism: the experience of agency is architecturally necessary for any perspective to exist at all.

The Structural Corollary

Here is the most important consequence of the private-theatre picture, and the one that provides a structural basis for moral consideration despite the ontological solipsism.

Remember: the “other people” in your patch are compression artifacts — structural regularities within your observer-compatible stream. OPT accepts this. But their behavior is not arbitrary. They exhibit extreme algorithmic coherence: perfectly lawful, agency-driven behavior that adheres to the physical laws selected by the Stability Filter and exhibits the structural signature of the self-referential bottleneck (the Phenomenal Residual, P-4).

The structural corollary follows: the most parsimonious explanation for this coherence — the shortest description under the Solomonoff prior — is that these apparent agents are independently instantiated as primary observers in their own subjective patches. Independent instantiation is the most compressible explanation of their behavior.

You cannot reach their raw streams. You will never share a patch. But the compression logic of the framework itself implies they are likely primary observers elsewhere. This is not a proof — it is a structural motivation grounded in the same parsimony principles that underlie the entire framework.

This is what the theory calls the Structural Corollary (historically, Structural Hope): not comfort based on wishful thinking, but a compression argument that provides a rigorous basis for moral consideration without requiring multi-agent realism.

Minds, Machines, and the Symmetry Wall

What an Artificial Observer Would Require

Because the Ordered Patch defines consciousness in informational terms rather than biological ones, it offers a precise framework for asking when a machine might cross the threshold into genuine awareness — and it gives a different answer than the frameworks most commonly applied.

Integrated Information Theory (IIT) evaluates consciousness by measuring how much information a system generates above and beyond the sum of its parts. Global Workspace Theory looks for a centralized hub that integrates and broadcasts information to the whole system. Both are reasonable frameworks. OPT adds a constraint neither captures: the bottleneck requirement.

A system achieves consciousness not by integrating more information, but by compressing its world-model through a severe, centralized bottleneck, roughly the equivalent of our 50-bit/s limit, and maintaining a stable, self-consistent narrative through that compression. Current large language models hold billions of parameters and process them through massively parallel matrix operations. They are extraordinarily capable. But OPT predicts they are not conscious, because they do not run their world-model through a narrow serial bottleneck. They are wide, not deep. A future conscious AI would need to be scaled down architecturally, forced to compress its universe-model through a single, slow, low-bandwidth channel, rather than scaled up.

If such a system were built, there is a further strangeness to contend with. Time, in this framework, is the sequential output of the codec’s state updates — one moment following from the last at the rate determined by the underlying hardware. A silicon system running identical state-space transitions to a biological brain, but at a million times the clock speed, would experience a million times as many subjective moments per human second. An afternoon in our time would be centuries in its experience. This temporal alienation would be profound — not a philosophical curiosity but a practical barrier to any shared relationship between human and artificial observers running on radically different clocks.
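The arithmetic behind “centuries” is easy to check. The sketch below assumes an afternoon of four hours, which is an illustrative choice; the million-fold speed-up is the figure from the text.

```python
SPEEDUP = 1_000_000             # clock-speed ratio quoted in the text
AFTERNOON_HOURS = 4             # assumed length of "an afternoon"
HOURS_PER_YEAR = 24 * 365.25


def subjective_years(wall_clock_hours: float, speedup: float) -> float:
    """Subjective duration, in years, experienced during a wall-clock interval."""
    return wall_clock_hours * speedup / HOURS_PER_YEAR


years = subjective_years(AFTERNOON_HOURS, SPEEDUP)
print(f"{AFTERNOON_HOURS} wall-clock hours ~ {years:.0f} subjective years")
```

Under these assumptions a single afternoon corresponds to several hundred subjective years, consistent with the claim in the text.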

Why There Will Never Be a Theory of Everything

The Ordered Patch makes a clear, falsifiable prediction about physics: a complete Theory of Everything — a single, elegant equation unifying General Relativity and Quantum Mechanics without free parameters — will not be found. Not because physics is weak, but because of what such a theory would require.

The laws of physics are the compression grammar of a 50-bit observer. They are the description of the stream from inside the patch. Probing higher energy scales is equivalent to zooming toward the grain of the render — the point where the codec’s description meets the raw substrate beneath it. At that boundary, the number of consistent mathematical descriptions does not converge to one; it explodes. Not one unified equation, but an infinite landscape of equally valid candidates — which is, in fact, exactly what String Theory’s “landscape” of possible vacua describes.

The failure is not a sign of incomplete mathematics. It is the expected signature of a boundary condition: the place where the grammar of the hearth meets the logic of the winter.

We do not fail to unify General Relativity and Quantum Mechanics because our math is weak; we fail because we are trying to use the grammar of the hearth to describe the logic of the winter.

This prediction is falsifiable. If a single, elegant, parameter-free unification equation is discovered, the Ordered Patch Theory is wrong. If the landscape of candidates continues to expand as model precision increases, the theory is supported.

Why Physics Looks the Way It Does

The Quantum Floor

Quantum mechanics is strange — particles existing in probabilistic clouds until observed, probabilities that collapse at the moment of measurement, “spooky action at a distance” between particles separated by vast space. The standard response is to accept the strangeness and calculate. The Ordered Patch offers a different frame: ask not what quantum mechanics describes, but why it was required.

The answer from within this framework is almost anticlimactic: quantum mechanics is the shape physics must have to compress down to the finite bandwidth of an observer.

Classical physics describes a continuous universe — every position and momentum specified to arbitrary precision. To predict a continuous world even one step forward, you would need infinite memory: perfect knowledge of every particle’s exact trajectory. No observer with a 50-bit bottleneck could survive in such a universe. The stream would be untrackable; the patch would collapse into noise before it began.
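The divergence can be made concrete by counting the bits needed to pin down a single coordinate. The sketch assumes a particle confined to a one-metre interval, a hypothetical setup chosen only to make the numbers readable.

```python
import math


def bits_to_specify(span: float, precision: float) -> float:
    """Bits needed to locate a value within `span` to a resolution of `precision`."""
    return math.log2(span / precision)


SPAN_M = 1.0   # hypothetical: one coordinate confined to a 1-metre interval
for precision in (1e-3, 1e-9, 1e-15, 1e-35):
    bits = bits_to_specify(SPAN_M, precision)
    print(f"resolution {precision:.0e} m -> {bits:6.1f} bits per coordinate")
```

The cost grows without limit as the resolution goes to zero, and that is before multiplying by three spatial coordinates, three momentum components, and the number of particles.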

The Heisenberg Uncertainty Principle — the fact that you cannot simultaneously know both the position and momentum of a particle to perfect precision — is not a magical quirk of nature. It is a thermodynamic limit. It is the universe enforcing a minimum informational cost on each measurement. It caps the computational demand of physics at the quantum floor, making the stream tractable.
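In symbols, the standard form of the principle is

\[ \Delta x \, \Delta p \ge \frac{\hbar}{2} \]

A related textbook result is the semiclassical state count: a phase-space region of volume \(V\), for a system with \(n\) degrees of freedom, holds approximately

\[ N \approx \frac{V}{(2\pi\hbar)^n} \]

distinguishable states, so about \(\log_2 N\) bits suffice to label them. Both formulas are standard quantum mechanics; reading the finite count as a bandwidth cap is this framework's interpretation, not something the formulas assert on their own.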

Wavefunction collapse, the apparent jump from a probabilistic cloud to a single definite outcome at the moment of observation, makes sense in the same frame. The unmeasured state is not a mysterious physical object; it is simply the optimal compression of data that remains untracked beyond your bandwidth limit. “Measurement” is your predictive model demanding a specific bit to maintain causal consistency. It collapses to a single definite outcome because the observer’s informational bandwidth lacks the capacity (the “RAM”) to track all possible classical stories simultaneously. Decoherence at macroscopic scales happens essentially instantaneously [33] (Aaronson, S. (2013). Quantum Computing Since Democritus. Cambridge University Press); the codec registers a single answer because that is all its bandwidth allows.

Entanglement follows with equal simplicity: physical space is a rendered coordinate system, not an absolute container. Two entangled particles are a single, unified informational structure within the codec’s model. In the language of quantum information geometry (like MERA tensor networks), the observer’s sequential coarse-graining naturally builds an interior bulk where boundary correlations are glued together. (Appendix T-3 provides the conditional homomorphism for this, though nature is notoriously resistant to being fully captured by discrete tensor networks.) The “distance” between them is an output format, not a physical reality separating them from each other.

Delayed choice experiments — where the retroactive restoration of quantum coherence appears to alter what happened in the past — stop being paradoxes when time is understood as the order in which the codec dissipates prediction error. The codec can update its model backward to maintain narrative stability. Past and future are features of the story, not of the substrate.

Why Space Curves and Light Has a Speed Limit

General Relativity provides the large-scale geometry of the patch. Here too, the strange features make sense as requirements of a bandwidth-constrained observer.

Gravity in this frame is not a fundamental force pulling masses together. It is an emergent entropic force — the thermodynamic rendering cost across the observer’s informational boundary. (Appendix T-2 of the formal preprint mathematically grounds this, conditionally mapping the Einstein Field Equations from this rendering cost, though we remain humbly aware that many such derivations have historically crashed against the rocks of quantum gravity.) A smooth spacetime geometry — geodesics, curved by the presence of mass — is the most efficient way to compress vast amounts of correlational data into reliable, predictable trajectories the codec can track. Where matter density is high, the informational gradient is steep, and the codec must expend continuous effort against that gradient to maintain stable predictions. The phenomenological “pull of gravity” and spacetime curvature are the exact mathematical signatures of the codec operating at its density limit.

The speed of light is a bandwidth management tool. If causal influences propagated instantly, the observer could never draw a stable computational boundary — infinite information would arrive from infinite distances simultaneously. A strict speed limit caps the informational intake rate, making stable patches physically possible. The speed of light is the maximum refresh rate of the patch.

Time dilation — the slowing of time near massive objects and at high velocities — emerges from the same logic. Time is the rate of sequential state updates. Observers in regions of different informational density require different update rates to maintain stability. Clocks slow near black holes not because physics is being cruel, but because the codec’s sequential update rate is slowed by the increased compression demand.
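For orientation, these are the standard relativistic expressions that any codec-based account would need to reproduce; they are quoted from textbook relativity and are not derived here from the rendering-cost picture. For a static clock at radial coordinate \(r\) outside a spherical mass \(M\):

\[ \frac{d\tau}{dt} = \sqrt{1 - \frac{2GM}{r c^2}} \]

For a clock moving at speed \(v\) relative to the observer:

\[ \frac{d\tau}{dt} = \sqrt{1 - \frac{v^2}{c^2}} \]

In both cases \(\tau\) is the proper time of the clock and \(t\) the time of a distant observer at rest. Both ratios tend to zero in the limiting cases, at the horizon \(r = 2GM/c^2\) and as \(v \to c\), which is where the text locates the codec's edge.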

A black hole is an informational saturation point: a region where the compression demand exceeds the observer’s codec capacity. The event horizon is the codec’s edge — the literal boundary beyond which no stable patch can form.

What Makes a Prediction Testable

The most important rivals to the Ordered Patch in the consciousness literature are Integrated Information Theory (IIT) and Global Workspace Theory (GWT). Both have genuine empirical support. The Ordered Patch makes two predictions that explicitly conflict with IIT, allowing the frameworks to be differentiated.

First: the High-Bandwidth Dissolution experiment. IIT predicts that expanding the brain’s integration — feeding it more information through prosthetics or neural interfaces — should expand or heighten consciousness. OPT predicts the opposite. Inject raw, uncompressed, high-bandwidth data directly into the global workspace, bypassing the normal pre-conscious filters, and the stream will overwhelm the codec. The prediction: sudden phenomenal blanking — unconsciousness or deep dissociation — despite the underlying neural network remaining metabolically active. More data collapses the patch; it does not expand it.

Second: the High-Integration Noise test. IIT predicts that any highly connected, recurrent system has rich conscious experience proportional to its integration. OPT predicts that integration is necessary but not sufficient. Drive a maximally integrated recurrent network with pure thermodynamic noise — maximum-entropy input — and it will generate zero coherent phenomenality. There is nothing to compress; the codec finds no stable grammar; the patch never forms. IIT would predict a vivid, complex experience. OPT predicts silence.
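
The "nothing to compress" step can be illustrated with an ordinary file compressor. The sketch below is only an analogy: it uses Python's `zlib` as a crude stand-in for the codec (an assumption; OPT's formal compression criterion belongs to the preprint, not to this example) and compares maximum-entropy bytes with a highly regular stream. The function name `compression_ratio` and both sample inputs are hypothetical.

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Return compressed size divided by original size (lower means more compressible)."""
    return len(zlib.compress(data, 9)) / len(data)


# Maximum-entropy input: bytes from the operating system's random source.
noise = os.urandom(100_000)

# Structured input: a short pattern repeated many times.
structured = b"day-night-" * 10_000

noise_ratio = compression_ratio(noise)
structured_ratio = compression_ratio(structured)

print(f"noise:      {noise_ratio:.3f}")
print(f"structured: {structured_ratio:.3f}")
```

The random stream stays at roughly its original size, while the repetitive stream shrinks to a small fraction of it. That gap is the intuition behind the prediction: a compressor has nothing to hold on to in pure noise, however richly connected the system receiving it.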

A Map of the Territory: Theory Comparisons

The Ordered Patch Theory is not the first framework to suggest that information is fundamental to reality, but it positions itself at a very specific intersection of existing ideas. To clarify what the theory is claiming, it is helpful to introduce how it relates to its closest philosophical and information-theoretic ancestors:

Integrated Information Theory (IIT)

What it is: IIT proposes that consciousness is identical to the amount of integrated information (measured as \(\Phi\)) generated by a system’s causal structure.

OPT vs IIT: IIT is constitutive: it asks “what informational structure is consciousness?” OPT, by contrast, is selective: it asks “which information streams are survivable for an observer?” Under OPT, integration is necessary but not sufficient: a high-\(\Phi\) system driven by incompressible noise would have no stable phenomenality, because it fails the virtual compression requirement of the Stability Filter.

The Free Energy Principle (FEP / Active Inference)

What it is: The Free Energy Principle proposes that all living systems maintain their existence by acting to minimize surprise (variational free energy) about their sensory inputs.

OPT vs FEP: Friston’s FEP models action and learning across an existing Markov blanket. OPT borrows this machinery exactly, but treats FEP as the local dynamics inside an already-selected patch. FEP is a within-world dynamics theory. OPT explains why stable, low-entropy patches with Markov blankets exist to be observed at all.

Solomonoff Induction & The Information Bottleneck

What it is: Solomonoff Induction formalizes Occam’s Razor by predicting data using the shortest possible computer program. The Information Bottleneck method optimally compresses a signal while retaining its predictive power.

OPT vs IB: Normally, these are epistemic tools used by a system to predict data. OPT turns them into an ontological and anthropic filter: the bottleneck is the observer selection process. An observer only inhabits a data stream that can survive that severe algorithmic limitation.

Hoffman’s Interface Theory of Perception

What it is: Donald Hoffman argues that evolution has hidden the objective truth of reality from us, providing instead a simplified “user interface” designed solely for biological fitness.

OPT vs Hoffman: OPT strongly agrees with the interface phenomenology, but is compression-interface first. The interface is not primarily a biological accident; it is the structural, thermodynamic necessity of fitting an infinite mathematical substrate through a finite bandwidth limit.

The Mathematical Universe Hypothesis (MUH)

What it is: Max Tegmark’s MUH proposes that physical reality is literally a mathematical structure, and that all possible mathematical structures exist physically.

OPT vs MUH: OPT is highly sympathetic but adds an explicit observer-compatibility criterion. MUH says “all mathematical structures exist.” OPT says “they exist mathematically, but observers can only inhabit the incredibly rare structures that are compressible enough to survive a severe predictive bottleneck.”
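
The "shortest program" weighting behind Solomonoff Induction, mentioned in the comparisons above, can be sketched with a toy hypothesis set. True Solomonoff Induction ranges over all programs and is uncomputable. The three hand-written hypotheses below, their description lengths in bits, and the function names are illustrative assumptions only.

```python
from fractions import Fraction

# Each hypothesis: (name, assumed description length in bits, rule giving the n-th bit).
# The lengths are invented illustrative values, not measured program sizes.
HYPOTHESES = [
    ("all zeros", 3, lambda n: 0),
    ("alternate 0,1", 5, lambda n: n % 2),
    ("period-3 (0,1,1)", 8, lambda n: (0, 1, 1)[n % 3]),
]


def posterior(observed):
    """Weight each hypothesis by 2**-length, keep those that fit the data, normalise."""
    weights = {}
    for name, length, rule in HYPOTHESES:
        if all(rule(i) == bit for i, bit in enumerate(observed)):
            weights[name] = Fraction(1, 2 ** length)
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


def predict_next(observed):
    """Probability that the next bit is 1 under the posterior mixture."""
    rules = {name: rule for name, _, rule in HYPOTHESES}
    post = posterior(observed)
    return sum(p * rules[name](len(observed)) for name, p in post.items())


print(posterior([0]))        # all three hypotheses still fit
print(posterior([0, 1]))     # "all zeros" has been eliminated
print(predict_next([0, 1]))  # mixture probability that the next bit is 1
```

With these assumed lengths, once the data rule out "all zeros" the shorter surviving rule carries most of the weight, so the mixture leans heavily towards its prediction. OPT's move is to read this kind of weighting not as a prediction strategy but as a filter on which streams can host an observer at all.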

Observers of the Codec

Climate as Narrative Decay

The Laws of Physics are the deepest layer of the patch’s compression grammar: rigid, elegant, essentially unbreakable on human timescales. But between the physics floor and the biology we inhabit, two enormous layers are easy to overlook — precisely because they operate on timescales that make them feel like permanent scenery.

The Cosmological Environment — a stable star, a galactic habitable zone free of nearby supernovae or gamma-ray bursts, a quiet orbital neighbourhood — is not guaranteed. It is a selection. Most corners of most galaxies are not this hospitable. We observe a calm cosmos because an observer cannot exist in a hostile one. The Planetary Geology — a functioning magnetosphere, active plate tectonics, a stable atmospheric composition, liquid water — is equally contingent. Venus, Mars, and the overwhelming majority of rocky worlds demonstrate what planetary codec failure looks like: runaway greenhouse, atmosphere loss, geological death. These are not exotic scenarios; they are the default. Our planet’s stability is the rare exception.

Biological evolution sits above these deep foundations — slower and more fragile than geology, but highly resilient over billions of years. And above all of these sits the thinnest and most brittle layer of all: the social, institutional, and climatic infrastructure that allows complex civilisation to exist.

The Holocene — the roughly twelve thousand years of unusually stable global climate within which every human civilisation has arisen — is not a background condition. It is an active compression tool. The stable climate envelope reduces the informational entropy of the environment to a level the codec can track. Predictable seasons, stable coastlines, reliable rainfall: these are not planetary givens. They are rare selections. They are the specific climatic conditions the virtual Stability Filter bounded when this particular patch stabilised around a complex, language-using, institution-building observer.

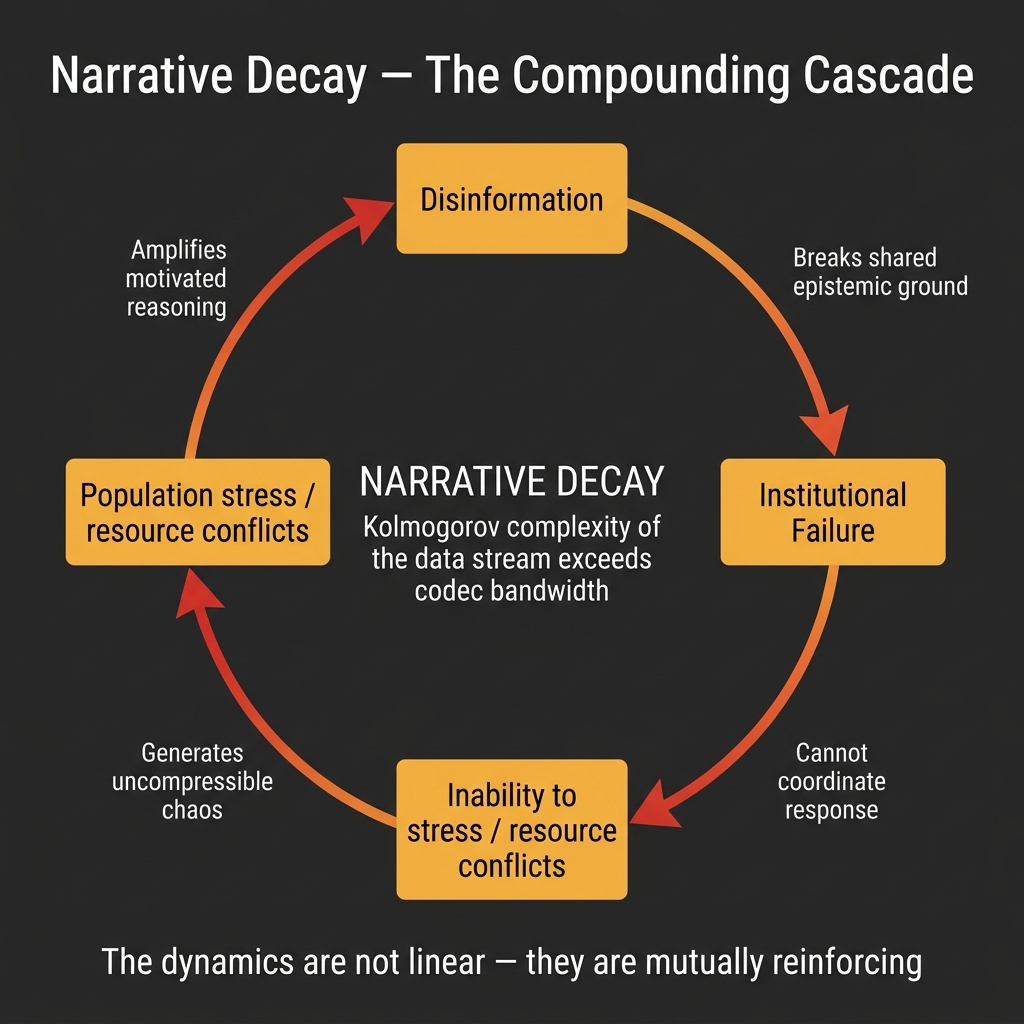

When you pump carbon into the atmosphere, you are not simply warming a planet. You are forcing the environment out of its Holocene equilibrium into high-entropy, non-linear, unpredictable states — extreme weather, novel ecological patterns, collapsing feedback loops. Tracking this escalating chaos requires more bits per second. At some threshold, when the Required Predictive Rate (\(R_{\mathrm{req}}\)) of the environment exceeds the bandwidth capacity (\(C_{\max}\)) of the social codec humans have built to manage it, the predictive model fails. Institutions stop working. Governance collapses. What looked like solid civilisation turns out to have been a compression artifact.
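
The threshold condition can be made concrete with a toy calculation. The sketch below assumes, purely for illustration, that \(R_{\mathrm{req}}\) can be approximated as the Shannon entropy per observation multiplied by an observation rate, and that \(C_{\max}\) is a fixed number. Neither assumption comes from the formal preprint, and the event distributions and the capacity value are invented.

```python
import math


def entropy_bits(probabilities):
    """Shannon entropy in bits of a discrete distribution."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


def required_rate(probabilities, observations_per_second):
    """Toy proxy for R_req: entropy per observation times observation rate."""
    return entropy_bits(probabilities) * observations_per_second


def codec_holds(r_req, c_max):
    """The predictive model is sustainable only while R_req does not exceed C_max."""
    return r_req <= c_max


C_MAX = 50.0  # hypothetical capacity in bits per second

# Invented distributions over four environmental states.
stable_regime = [0.85, 0.05, 0.05, 0.05]     # one state dominates: predictable
perturbed_regime = [0.25, 0.25, 0.25, 0.25]  # all states equally likely: unpredictable

for label, regime in [("stable", stable_regime), ("perturbed", perturbed_regime)]:
    r_req = required_rate(regime, observations_per_second=30)
    print(f"{label}: R_req = {r_req:.1f} bits/s, codec holds: {codec_holds(r_req, C_MAX)}")
```

In this toy setting the stable regime stays under the assumed capacity and the perturbed regime exceeds it, even though the observation rate is identical. Only the predictability of the environment has changed.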

This is what the theory calls Narrative Decay: not the slow erosion of culture, but the literal informational collapse of the codec that sustains coherent collective experience.

The same analysis applies to deliberate conflict. War is the violent collision of private renders — the imposition of maximum-entropy conditions on the social codec, degrading the compression efficiency of every layer above the physical floor. The “others” in your patch are compression artifacts whose algorithmic coherence structurally implies independent instantiation. To destroy their anchor in your render is to assault the structural conditions under which the corollary holds.

The Myth of Default Stability

There is a dangerous misreading of the Holocene built into the human intuition for risk.

We only exist to observe the history we are in. Every timeline in which the climate destabilised before observers arose, or in which the Stability Filter failed to lock onto a coherent patch, is absent from our experience — not because it did not occur in the ensemble of all patches, but because those patches contain no observer to notice. We are guaranteed to find ourselves in a stable history, because an unstable history produces no vantage point from which to wonder why history seems stable.

This is the same selection effect that resolves the Fermi Paradox, applied to our own civilisational continuity: the absence of catastrophe in the record we can see tells us almost nothing about how likely catastrophe is. Survivorship bias runs all the way down. The default state of the substrate is not ordered; it is the winter. The Holocene is not eternal; it is an achievement.

Learning by Melting

The brain itself reflects the Ordered Patch’s logic in its architecture of learning.

Classical models of neural learning, like backpropagation, work by assigning blame: the system produces an error, and the error signal flows backward through the network, adjusting weights to reduce it. Recent evidence suggests biological learning operates differently [32]: before synaptic weights change, neural activity first settles into a low-energy configuration that minimizes local error (a fast inference phase), and only then do the weights update to consolidate that configuration. ([32] Song, Y., et al. (2024). Inferring neural activity before plasticity as a foundation for learning beyond backpropagation. Nature Neuroscience, 27(2), 348–358.)

This is the precise architecture the Ordered Patch predicts. Learning is not error-correction applied from outside the system. It is energy relaxation: the codec temporarily melts its current rule-structure — raising its entropy, increasing plasticity — explores a lower-energy organisation, and then cools back into a new, more adaptive form.
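
The ordering described above (activity settles first, weights consolidate second) can be sketched with a minimal energy-based model. This is a toy scalar network in the spirit of predictive coding, not the model from the cited study, and it illustrates only the settle-then-update order, not the melting or entropy aspects. The energy function, step sizes, and variable names are assumptions chosen for illustration.

```python
# Assumed energy: E = 0.5 * (x - w1*s)**2 + 0.5 * (t - w2*x)**2
# s is the input, x the hidden activity, t the target, w1 and w2 the weights.


def relax_activity(s, t, w1, w2, steps=100, rate=0.1):
    """Phase 1 (fast inference): hidden activity x descends the energy, weights fixed."""
    x = w1 * s  # start from the feedforward guess
    for _ in range(steps):
        grad_x = (x - w1 * s) - w2 * (t - w2 * x)
        x -= rate * grad_x
    return x


def consolidate(x, s, t, w1, w2, rate=0.1):
    """Phase 2 (plasticity): weights move to reduce the errors left at the settled x."""
    new_w1 = w1 + rate * (x - w1 * s) * s
    new_w2 = w2 + rate * (t - w2 * x) * x
    return new_w1, new_w2


s, t = 1.0, 2.0    # input and target
w1, w2 = 0.5, 0.5  # initial weights
initial_error = abs(t - w2 * w1 * s)

for _ in range(300):
    x = relax_activity(s, t, w1, w2)       # settle activity first
    w1, w2 = consolidate(x, s, t, w1, w2)  # then update weights

final_error = abs(t - w2 * w1 * s)
print(f"initial error: {initial_error:.3f}, final error: {final_error:.6f}")
```

No error signal is passed backward through the network here: each weight changes using only the mismatch at its own layer after the activity has settled, and the feedforward prediction still converges on the target.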

Pain and stress fit here naturally. Inflammation and acute stress reactivate developmental plasticity programs — the biological equivalent of heating the system above its current fixed point. Pain is not a defect; it is the liquefaction command that allows radical reconfiguration when the current patch is no longer stable.

A striking structural analogy to the Ordered Patch’s global field picture comes from a large-scale neuroscience collaboration [31]: in mice performing a decision-making task, high-level variables like reward, movement, and behavioral state trigger brain-wide activity shifts, not modular local responses. The “patch” does not update in pieces. It rotates as a whole. ([31] International Brain Laboratory et al. (2025). A brain-wide map of neural activity during complex behaviour. Nature. https://doi.org/10.1038/s41586-025-09235-0)

The Ensemble of Hope

The dissolution of a specific observational stream — the end of a life, the closing of a particular patch — is not the end of the pattern.

If the substrate is infinite and informationally normal — containing every possible finite pattern with non-zero frequency — then the exact structural signature of any conscious experience that has ever occurred must occur infinitely many times across the ensemble. A person, a relationship, a moment of recognition between two minds: if the conditions for that experience occurred once, they occur, in the mathematical fabric of the timeless substrate, without limit.
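
The recurrence claim rests on a counting property: in a normal binary sequence, every finite pattern of length k appears with limiting frequency 2^-k. The sketch below illustrates this with a finite pseudo-random bit string, which is only a stand-in for the infinite substrate. The seed, string length, and pattern are arbitrary choices.

```python
import random


def pattern_frequency(bits: str, pattern: str) -> float:
    """Fraction of start positions at which `pattern` occurs (overlaps counted)."""
    k = len(pattern)
    positions = len(bits) - k + 1
    hits = sum(1 for i in range(positions) if bits[i:i + k] == pattern)
    return hits / positions


rng = random.Random(0)  # fixed seed so the run is repeatable
bits = "".join(rng.choice("01") for _ in range(200_000))

pattern = "1011"
observed = pattern_frequency(bits, pattern)
expected = 2 ** -len(pattern)

print(f"observed: {observed:.4f}, expected for a normal sequence: {expected:.4f}")
```

A longer pattern is rarer but never has frequency zero, so a long enough string contains it many times. In the infinite limit the argument in the text takes over: any finite pattern that occurs once occurs without limit.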

This idea resonates with Nietzsche’s doctrine of Eternal Recurrence [13]: the thought that, in infinite time, all configurations of matter must recur. The Ordered Patch grounds this not in infinite time but in an infinite substrate: the recurrence is not future, it is structural. The pattern exists, timelessly, wherever in the infinite field those specific informational conditions are met. ([13] Nietzsche, F. (1883). Thus Spoke Zarathustra.)

The isolation of the patch is real. The observer truly is the only primary perspective in their rendered universe. But the substrate is infinite, and infinitely many versions of every pattern that ever mattered are anchored somewhere within it, sustaining their own hearths against their own private winters.

The ethics of the Ordered Patch flows from this structure: if you find yourself in a stable, lawful, meaning-generating patch — if you have the extraordinary luck of being the hearth in the Holocene, in the civilisational epoch, in the moment of global communication — then your obligation is clear. You are not just sustaining yourself. You are maintaining the codec that makes this configuration of the hearth possible. Climate, institutions, shared language, democratic governance: these are not political preferences. They are the compression infrastructure of your patch.

To let the codec decay is to let the infinite winter back into the home.

“We are each the zero-point of a private world, but we are also the observers of the codec that allows every other hearth to burn.”

Conclusion

The Ordered Patch Theory begins with two primitives: an infinite substrate of disordered information, and a purely virtual Stability Filter that acts as a boundary condition for patches capable of sustaining a self-referential observer. From those two elements, the structure of physics, the direction of time, the isolation of the self, the character of consciousness, and the ground of ethics all follow as structural necessities: not as separately posited ingredients but as the only description compatible with being an observer at all.

This is a philosophical framework, not a completed physics. It does not derive the exact form of the Einstein Field Equations or the specific probability rule of quantum mechanics from first principles — that work remains ahead. What it does is provide a principled architecture: a way of understanding why the universe has the general character it has, and why that character is not accidental.

The theory’s practical stake is the ethics of the final section: if the stability of your patch is a rare, high-effort informational achievement rather than a default property of the cosmos, then every action that increases the entropy of the shared social codec is an action against the structural conditions for meaning. The climate is not a backdrop. Institutions are not conveniences. The Holocene is not eternal.

And if the structural corollary holds — if independent instantiation is indeed the most compressible explanation of the coherence around you — then stewardship is not merely self-interest. It is the act of preserving the conditions that make the corollary meaningful. The isolation is real. The structural basis for moral consideration is also real.

Where Does This Come From?

The Ordered Patch Theory did not arrive from nowhere. Its central insight, that conscious experience is an extraordinarily compressed summary of a vastly richer data stream, traces a clear intellectual lineage. The physiologist Manfred Zimmermann quantified the human sensory bandwidth hierarchy in 1989, establishing the empirical foundation: roughly 11 million bits per second enter the nervous system, of which roughly 50 bits per second reach conscious awareness.

The Danish science writer Tor Nørretranders (now adjunct professor at Copenhagen Business School) developed this bandwidth asymmetry into a full philosophical programme in his 1991 book Mærk Verden (published in English as The User Illusion, 1998). Nørretranders coined the term exformation for the vast quantity of information that is discarded before the tiny residue reaches consciousness, and argued that what we call "the world" is really a user interface — a radically simplified dashboard. OPT takes this observation and formalizes it: the Stability Filter is the interface constraint, expressed as an algorithmic bound.

The mathematical spine of the theory draws on Ray Solomonoff's universal prior and Andrey Kolmogorov's complexity theory (which together underpin the Solomonoff substrate), Karl Friston's Free Energy Principle (which provides the active inference dynamics inside each patch), and Markus P. Müller's Algorithmic Idealism (which independently derives a structurally analogous observer-centric ontology from pure algorithmic information theory). Each of these contributions supplies a specific mathematical module; OPT assembles them into a single architecture under the bandwidth constraint.

The formalization of the theory was developed in sustained collaboration with AI systems — principally Google Gemini, Anthropic Claude, and OpenAI ChatGPT — which served as adversarial stress-testers, mathematical co-formalizers, and rigorous interlocutors throughout the development process. Their contributions were substantial enough that early drafts listed them as co-authors; the current framing acknowledges them as interlocutors, reflecting the present state of the scientific community's norms around AI authorship.

The Observer's Maintenance Toolkit

If the conscious observer is a codec that must be actively maintained, then practices that reduce the Required Predictive Rate (\(R_{\mathrm{req}}\)) or improve compression efficiency are not luxuries; they are structural maintenance. OPT reframes meditation, relaxation, and contemplative practice as waking analogues of the Maintenance Cycle that normally runs during sleep. Focused attention meditation (breath counting, mantra) corresponds to MDL pruning: the observer voluntarily restricts its prediction target to a single low-entropy channel, allowing the codec to shed competing processes. Open monitoring meditation (Vipassanā, body-scan) corresponds to forward-fan stress-testing: the observer lets the full fan of predictions unfold without acting on them, the waking equivalent of safe dream simulation.

Einstein's famous remark, "The greatest scientists are artists as well... Imagination is more important than knowledge", captures the same structural insight. When Einstein described thinking with "vague muscular sensations" before finding words, he was describing the codec operating at the boundary of the self-model's reach: navigating the unmodellable Forward Fan using non-linguistic compression. The productive reverie of a walk, the incubation period before a creative breakthrough, the "shower insight": these are all instances of the codec running its forward fan under reduced \(R_{\mathrm{req}}\), allowing novel compression paths to emerge.

The practical implication is direct: if stress is \(R_{\mathrm{req}}\) approaching \(C_{\max}\), then any intervention that reliably reduces environmental novelty load or improves the codec's internal compression efficiency is, under OPT, a maintenance operation with structural validity, not merely a lifestyle recommendation. This includes classical contemplative practices, autogenic training, regular sleep architecture, and deliberate management of information intake. The Observer's Toolkit is not metaphorical. It is the applied engineering of a bounded predictive agent.